LessWrong (30+ Karma) “The state of AI safety in four fake graphs” by Boaz Barak

Mar 30, 2026

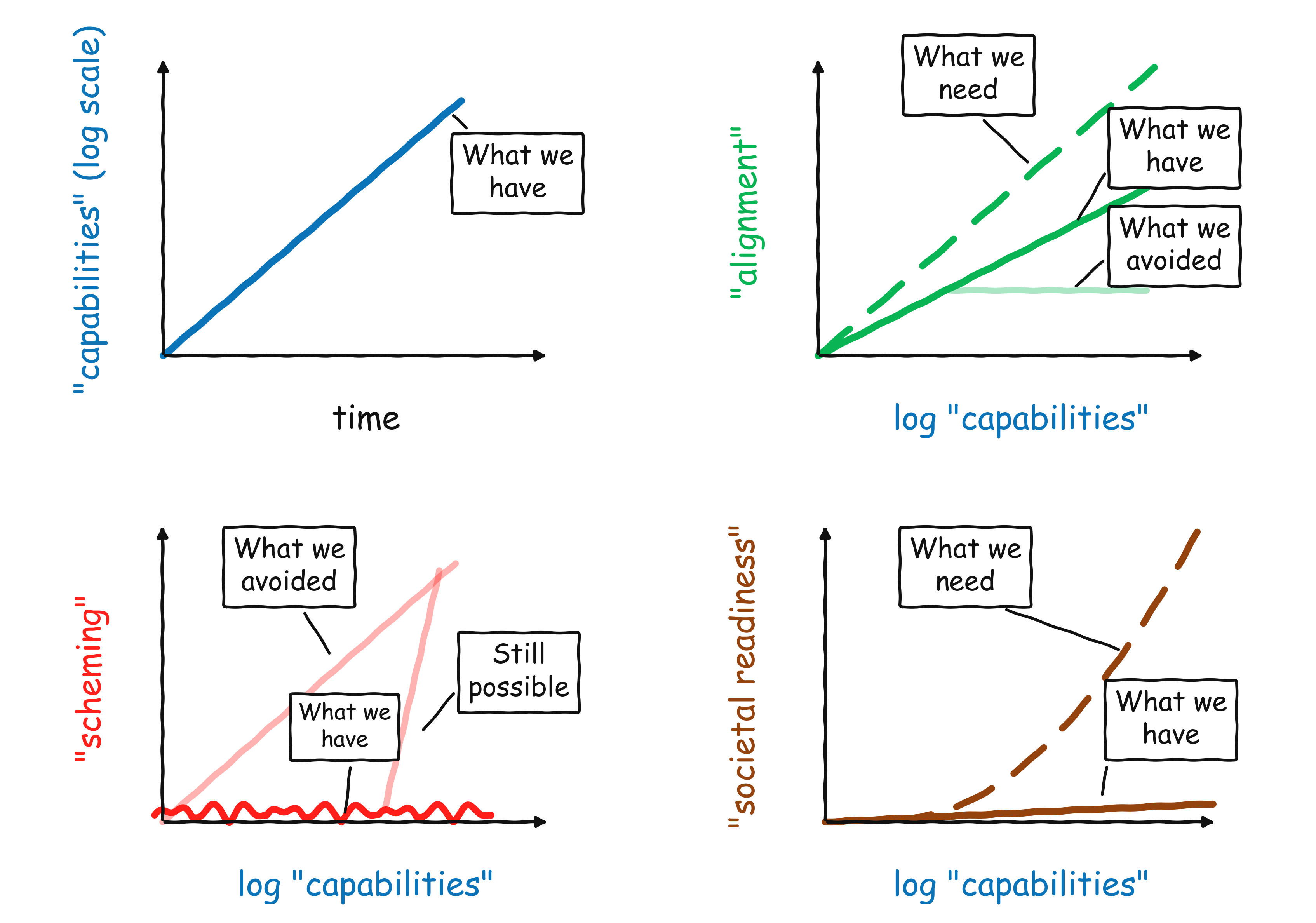

A brisk tour of where AI stands in early 2026, tracking rapid capability gains and a possible acceleration as AI helps build AI. Barak discusses alignment metrics that are improving but still fall short for high-stakes use, and examines the need to extend alignment beyond single conversations into multi-agent systems and monitoring. He closes with a call for iterative, empirical work rather than a single clever fix.

AI Snips


Exponential Capabilities And A New Upward Bend

- Capabilities keep improving exponentially, visible in the METR graph and revenue trends.

- The recent upward bend may reflect AI being used to accelerate AI development, steepening the slope of progress.

Alignment Improving But Lagging Stakes

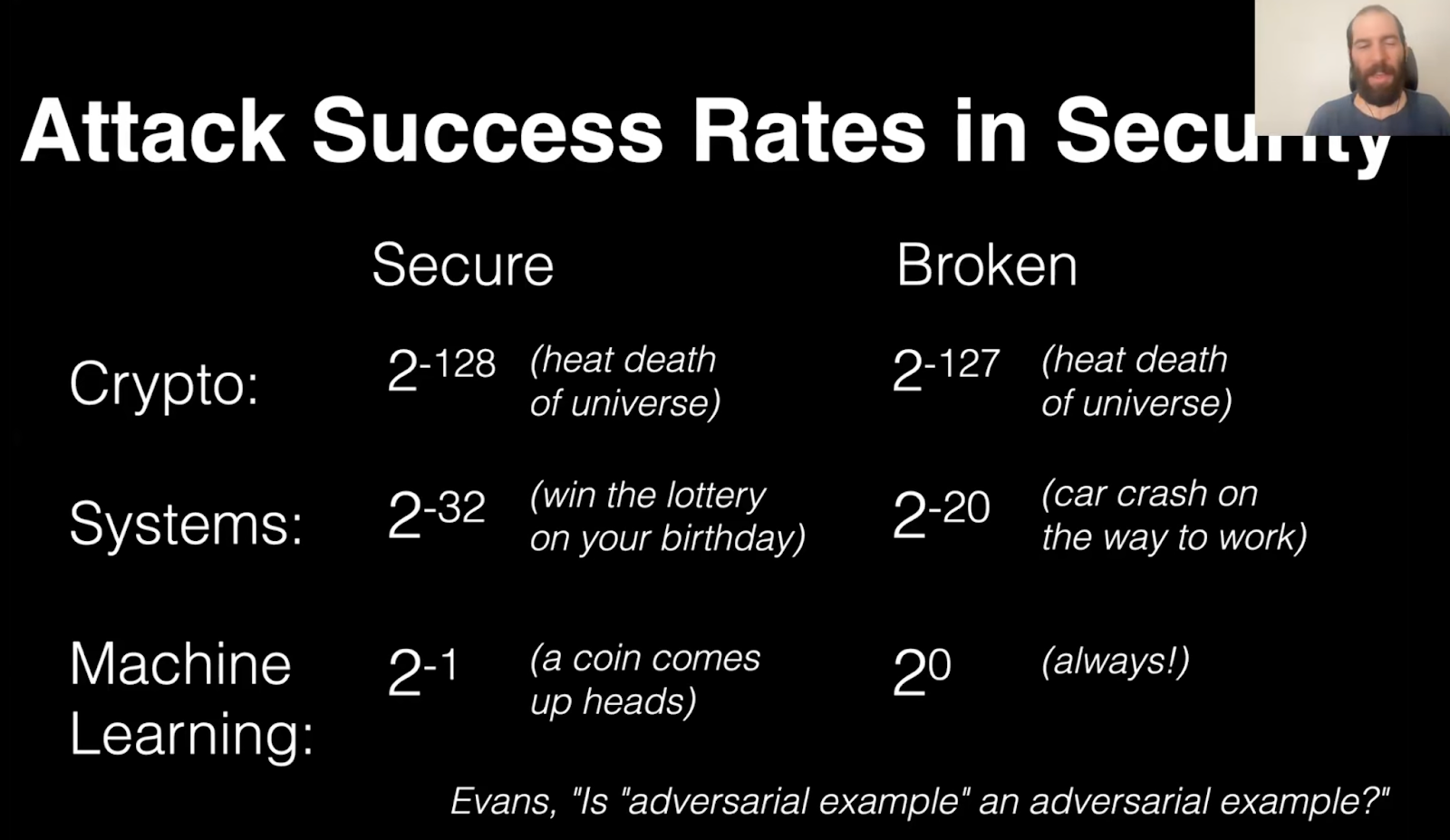

- Alignment is improving with capability, but not fast enough to keep pace with rising stakes; problems such as adversarial robustness and reward hacking remain open.

- Barak highlights gaps in reliability, security, and spec compliance despite better performance on some measures.

Scale Empirical Alignment Research Now

- Focus on scaling intent following, honesty, monitoring, and multi-agent alignment through iterative empirical experiments.

- Barak warns that AI assistance helps but is not a magic bullet; humans must run the experiments now.