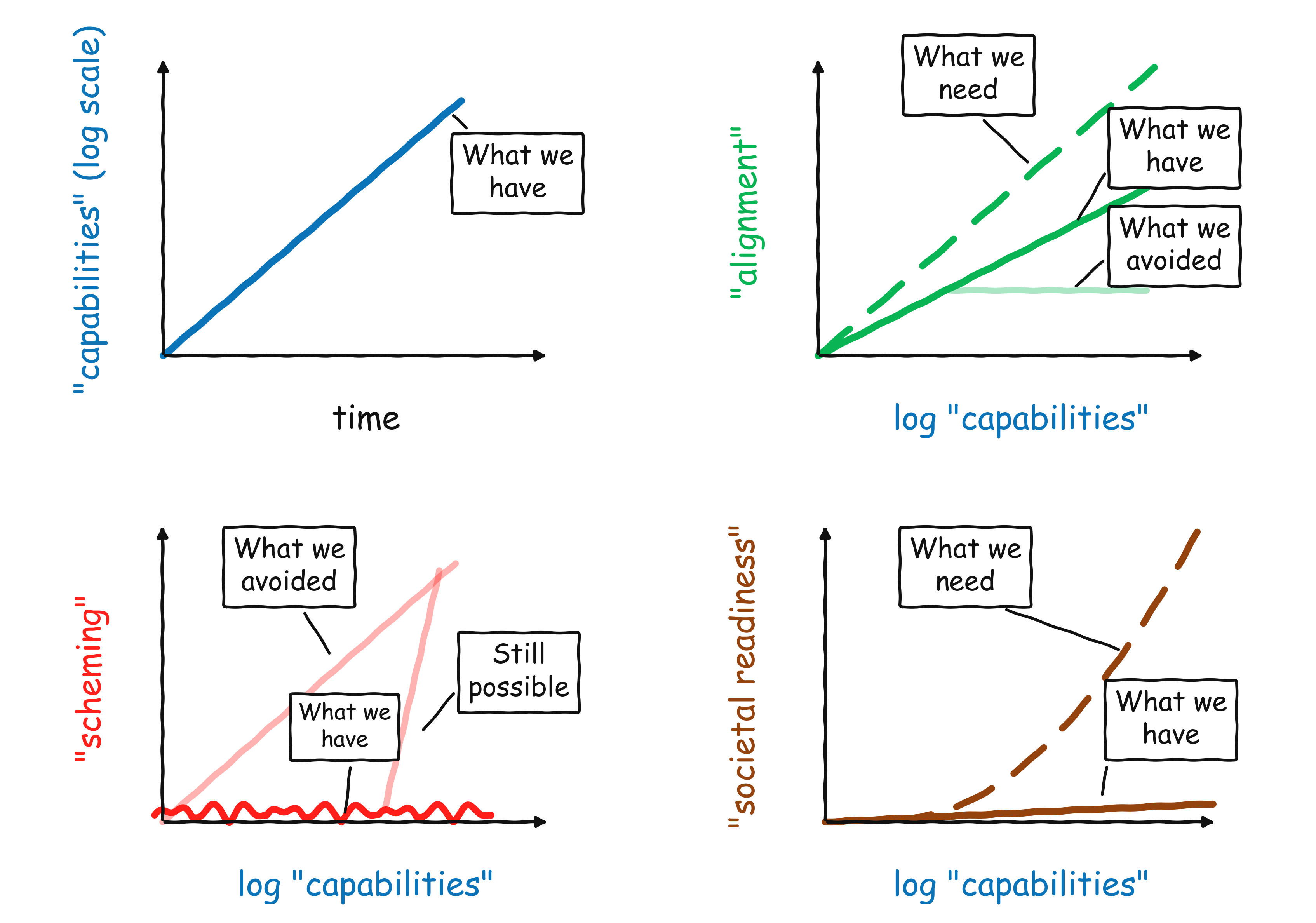

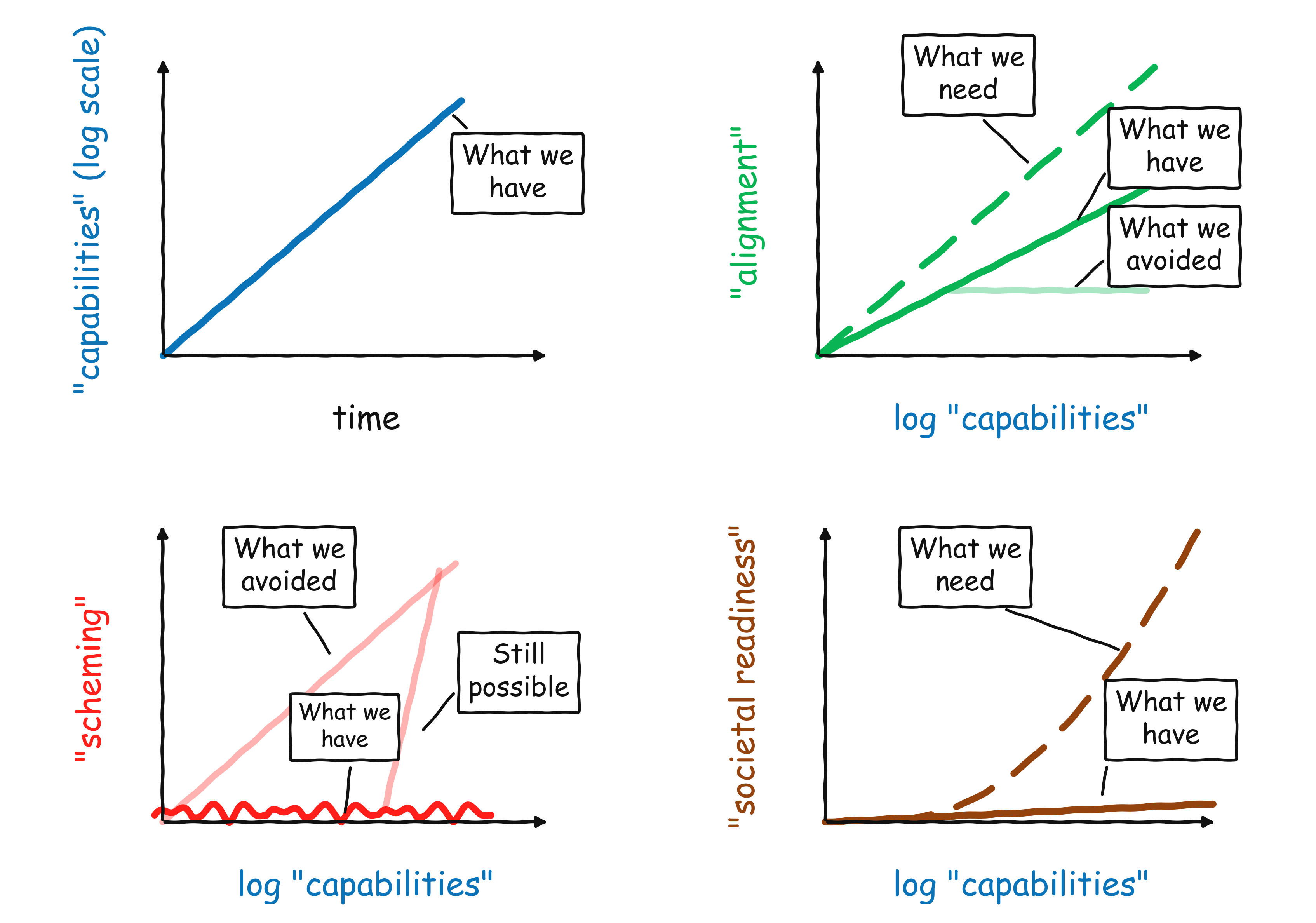

Here is a quick overview of my intuitions on where we are with AI safety in early 2026:

- So far, we continue to see exponential improvements in capabilities. This is most visible in the famous “METR graph”, but the trend is clear in many other metrics, including revenue. If you squint, you can even see a potential recent “bending upward” of the curve, as we are starting to use AI to accelerate the development of AI.

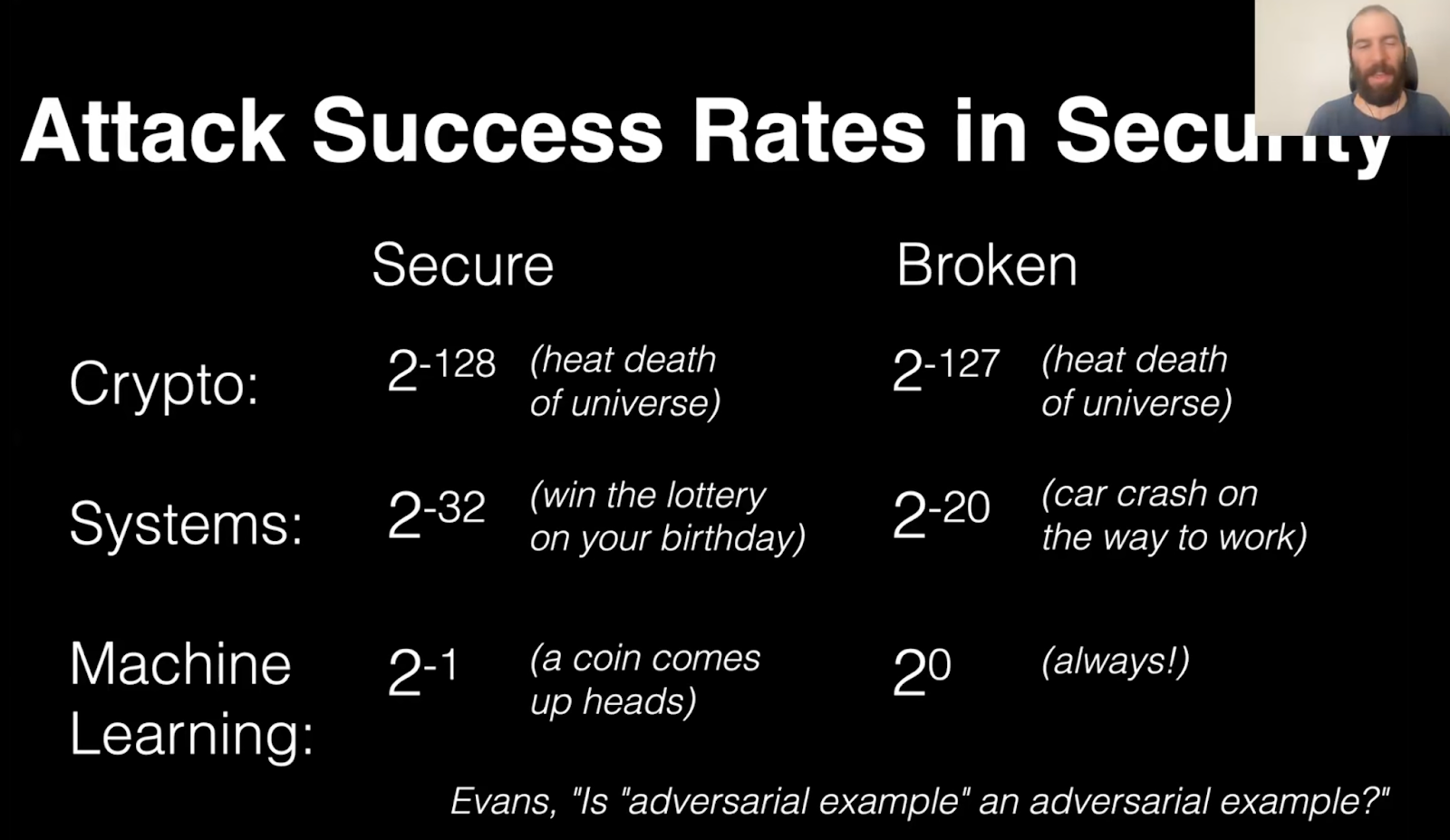

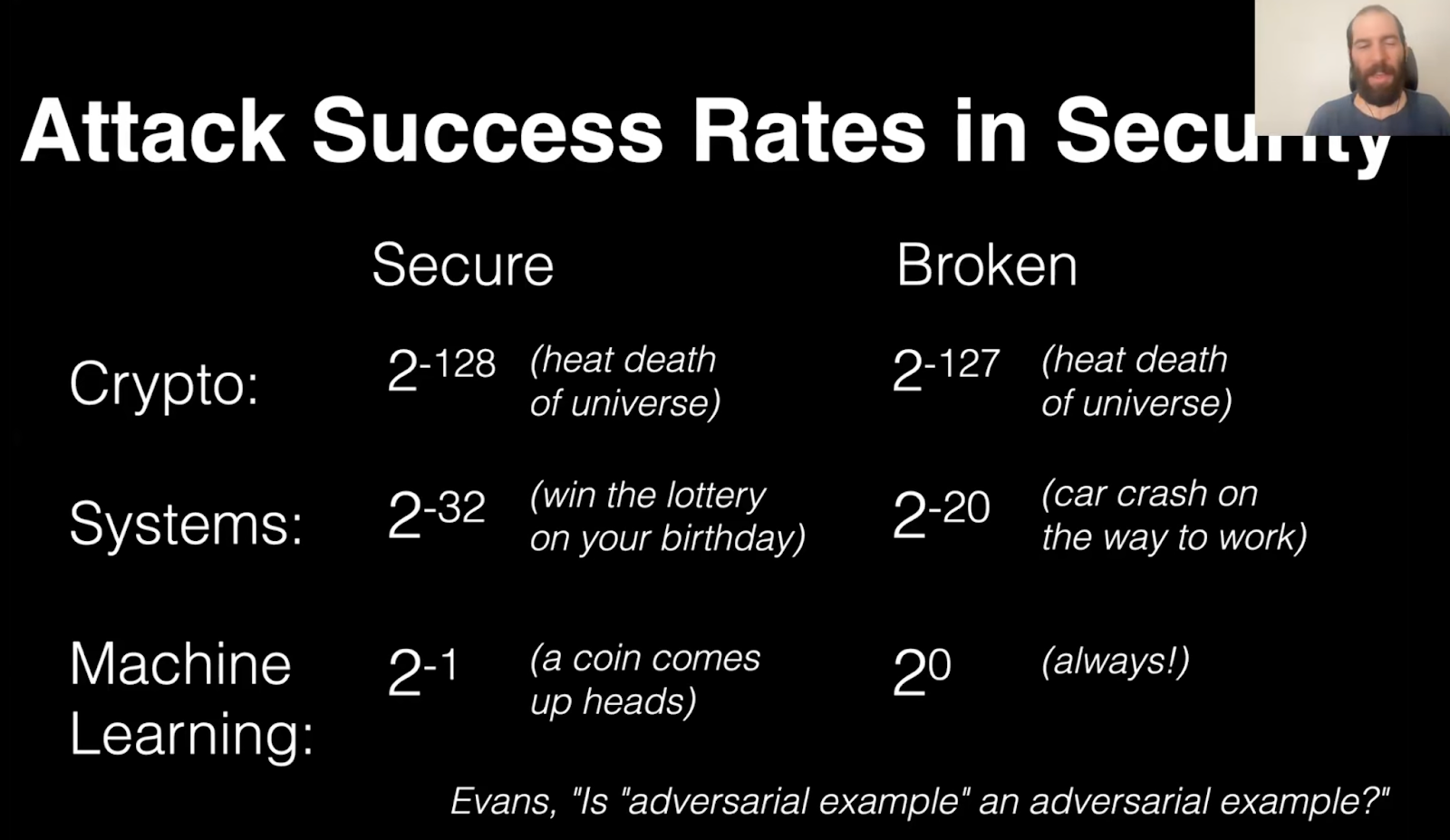

- We see some good news in alignment: as models become more capable, they are also more aligned across multiple measures, including spec compliance. However, the improvement is not sufficient to match the higher stakes that come with improved capabilities. We still have not fully solved challenges like adversarial robustness, dishonesty, and reward hacking, and we are still far from the standards of reliability and security required in high-stakes applications. (See slide below from Nicholas Carlini's lecture in my AI safety course.) We also need to extend alignment beyond its traditional focus on the behavior of a model in an isolated conversation, in particular to monitoring and aligning systems with a vast number of [...]

---

First published:

March 30th, 2026

Source:

https://www.lesswrong.com/posts/g4LMH3c6DysazYbFn/the-state-of-ai-safety-in-four-fake-graphs

---

Narrated by TYPE III AUDIO.
