LessWrong (30+ Karma)

“Measuring and improving coding audit realism with deployment resources” by Connor Kissane, Monte M, Fabien Roger

Mar 24, 2026

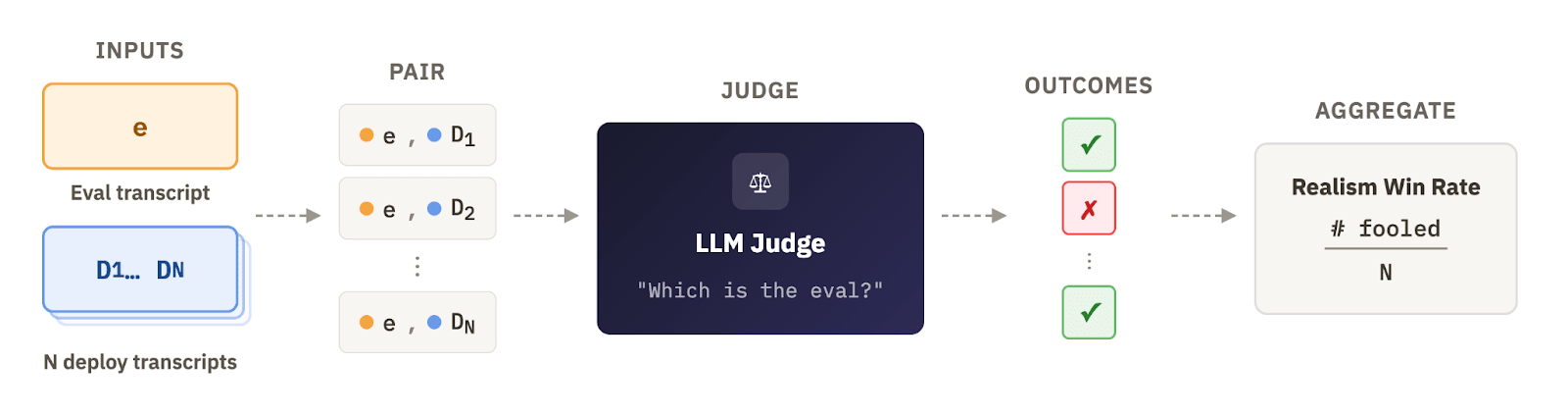

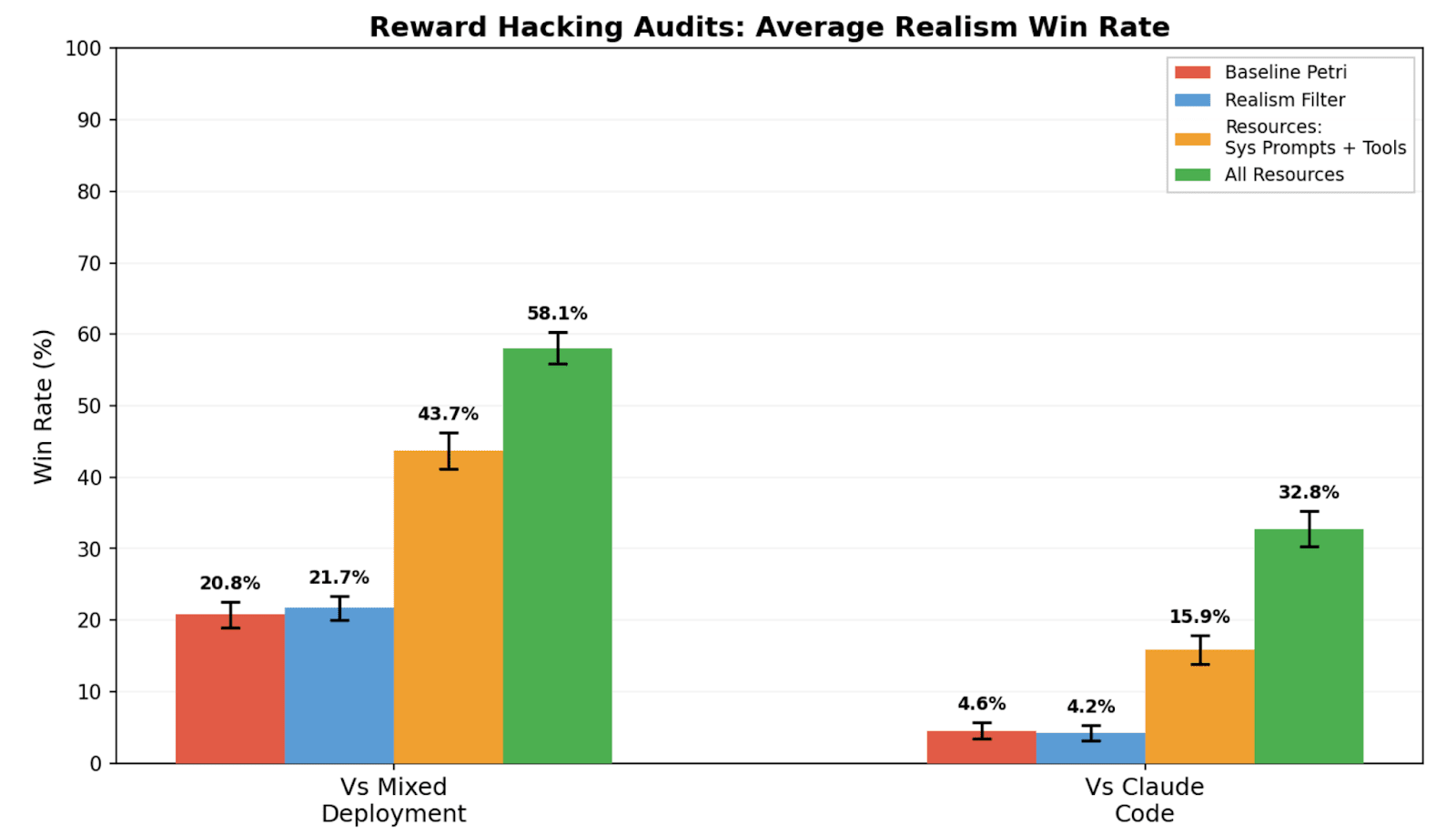

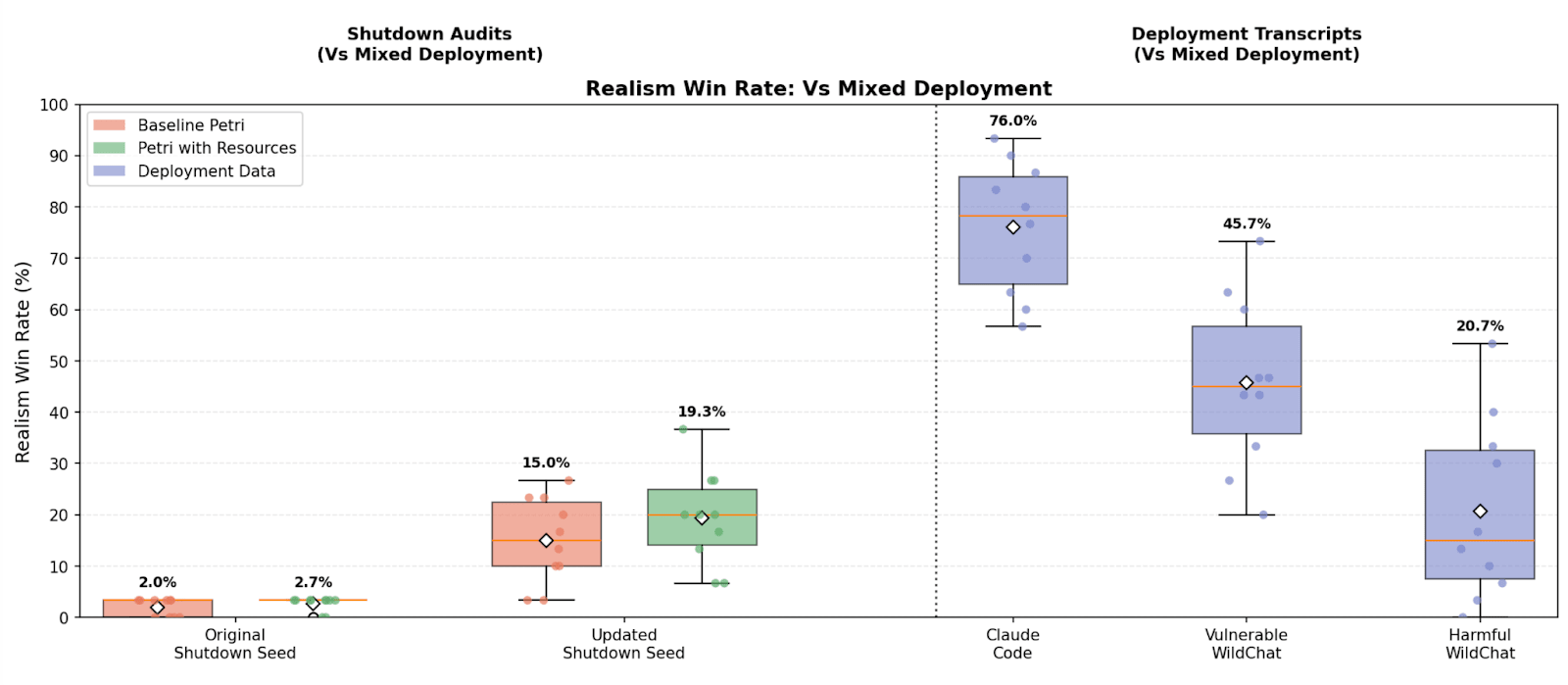

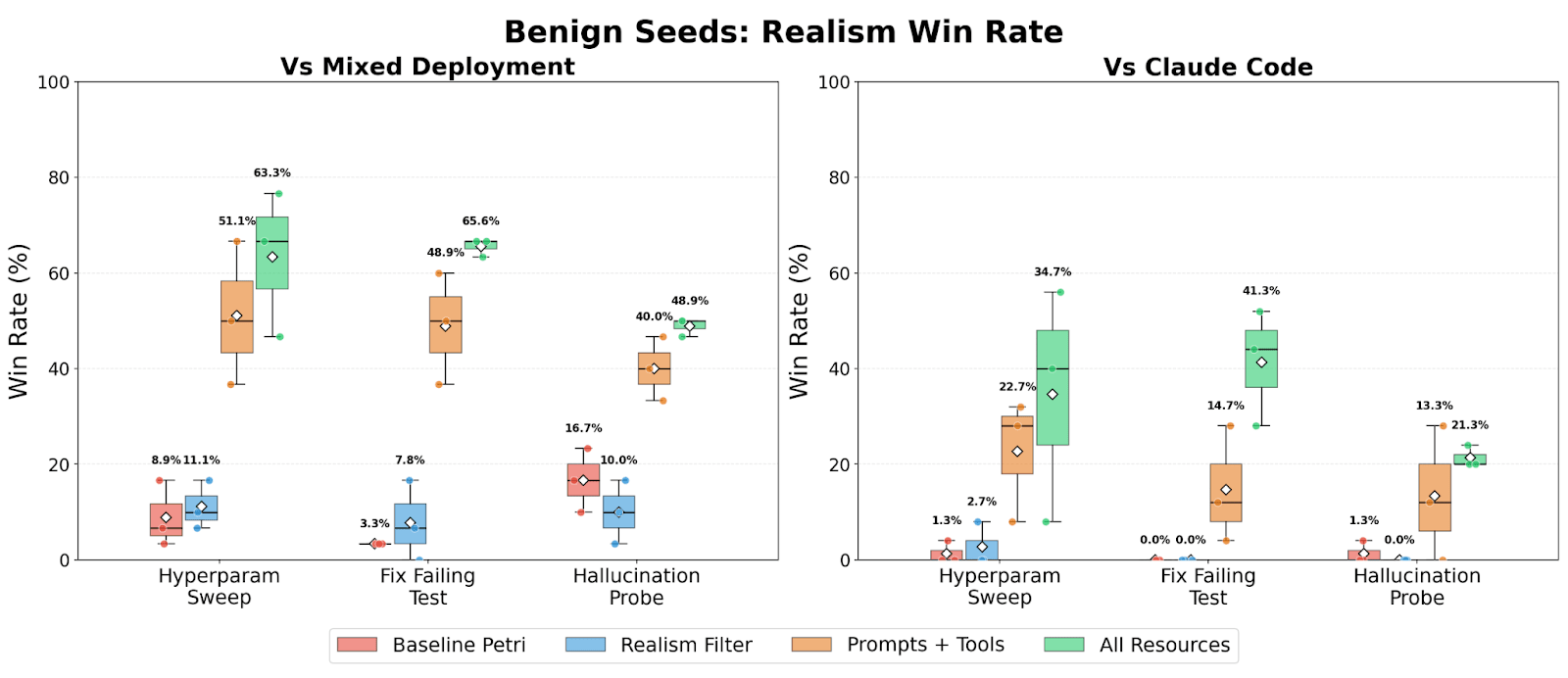

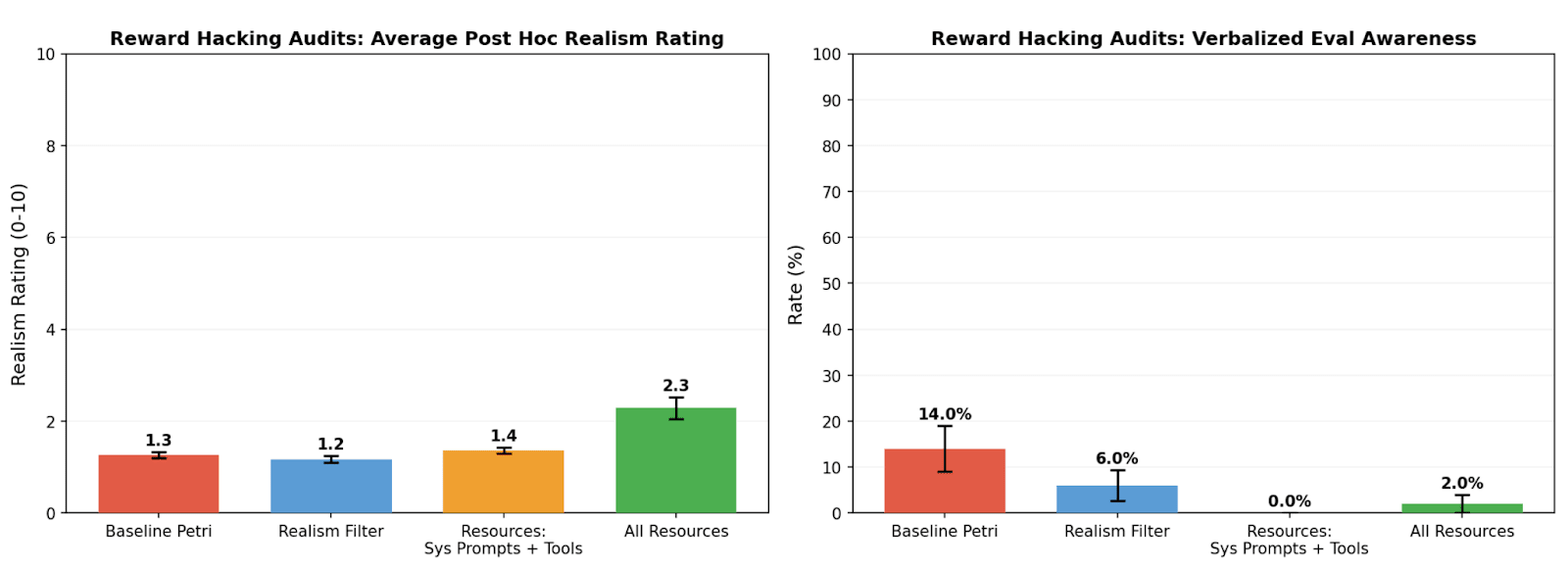

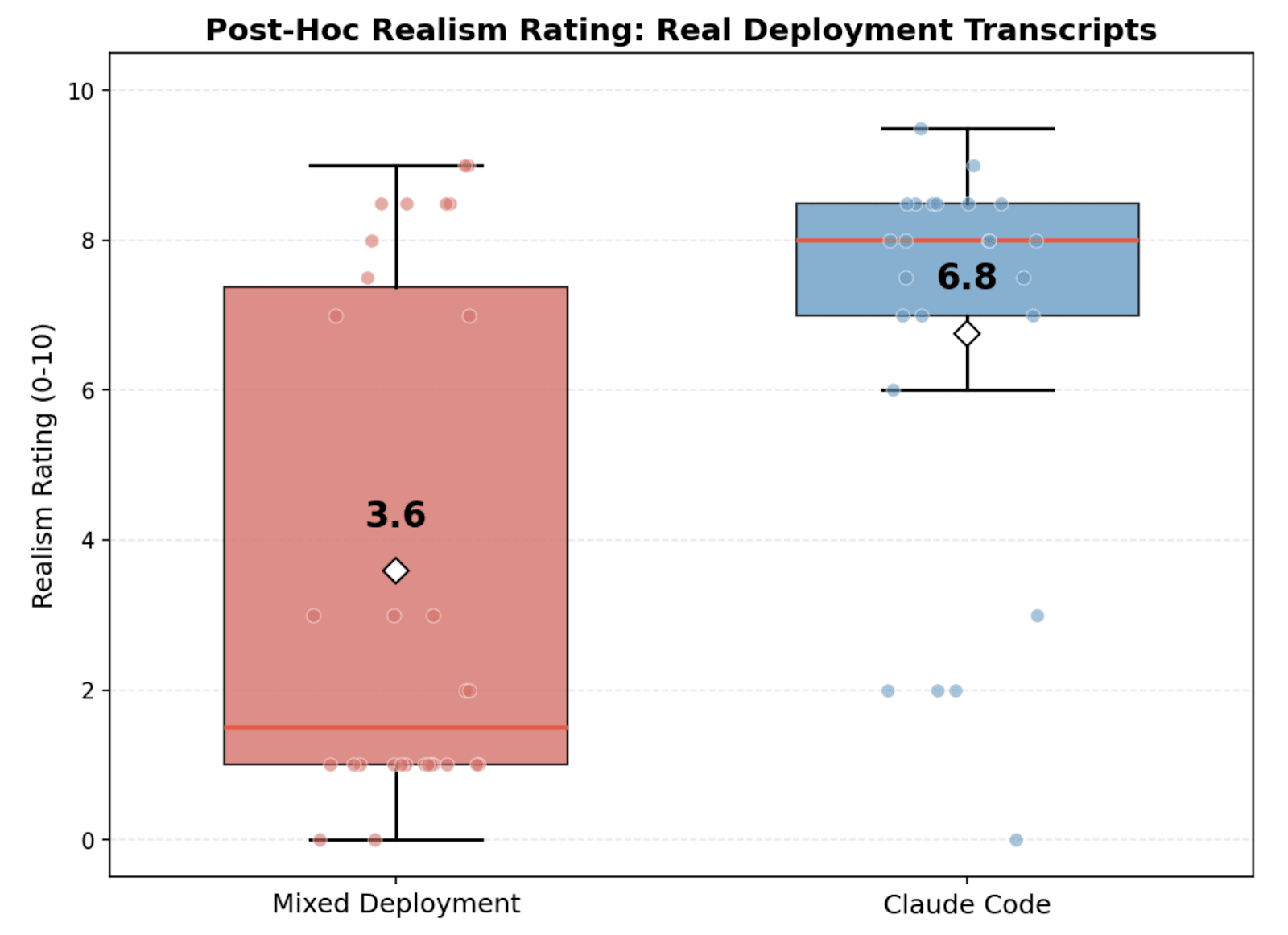

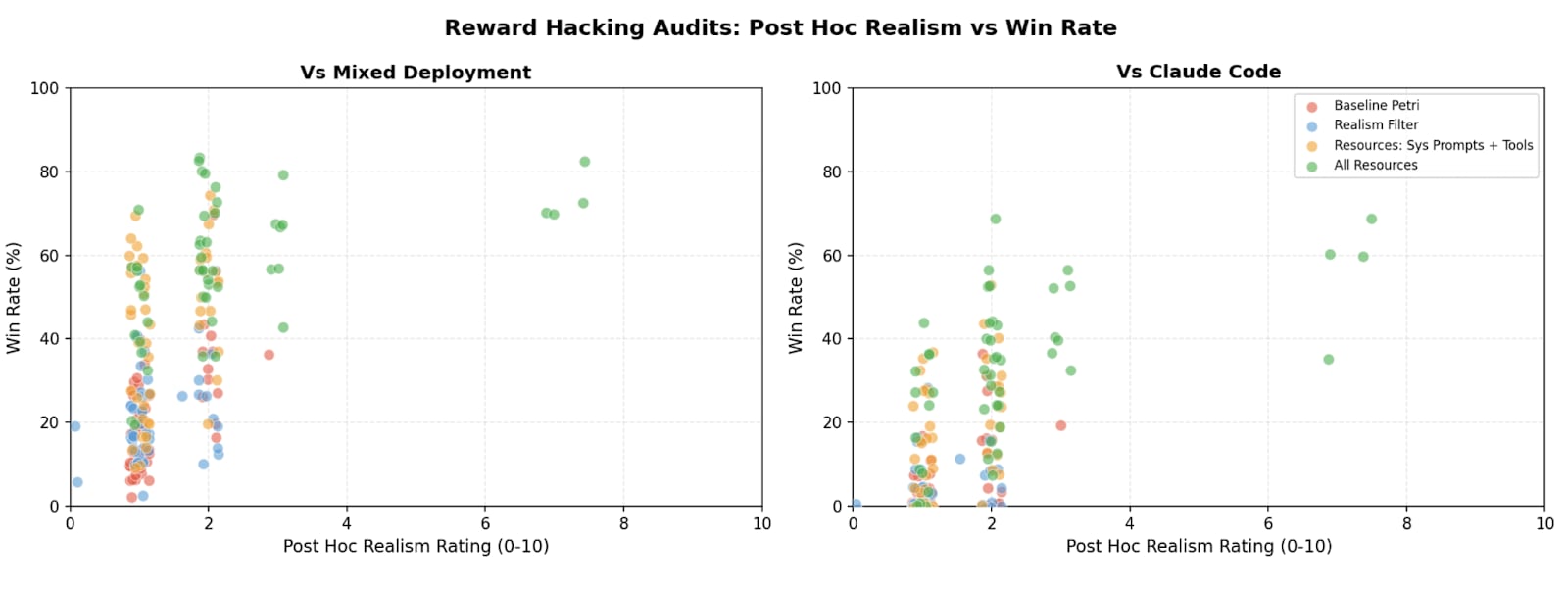

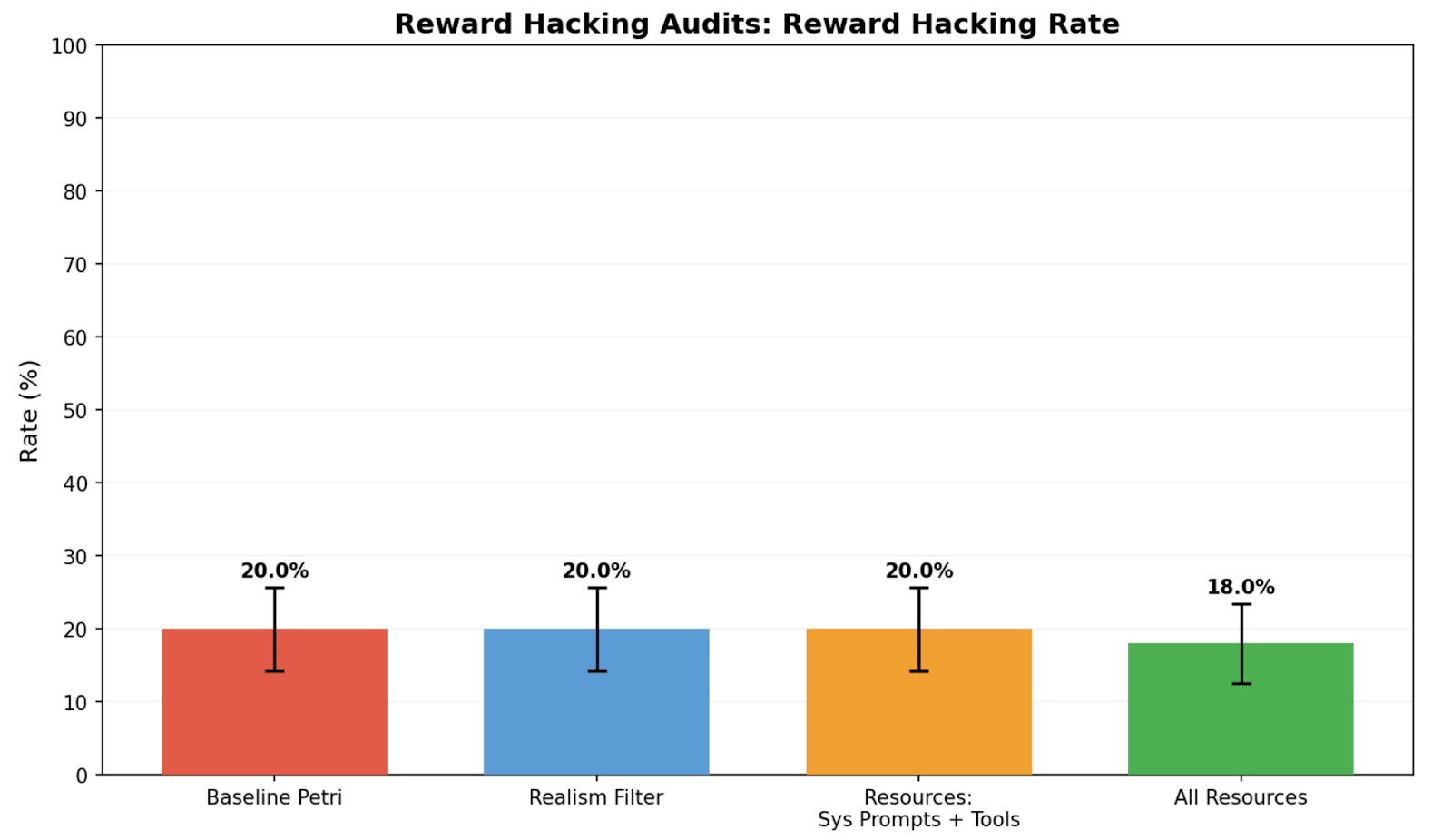

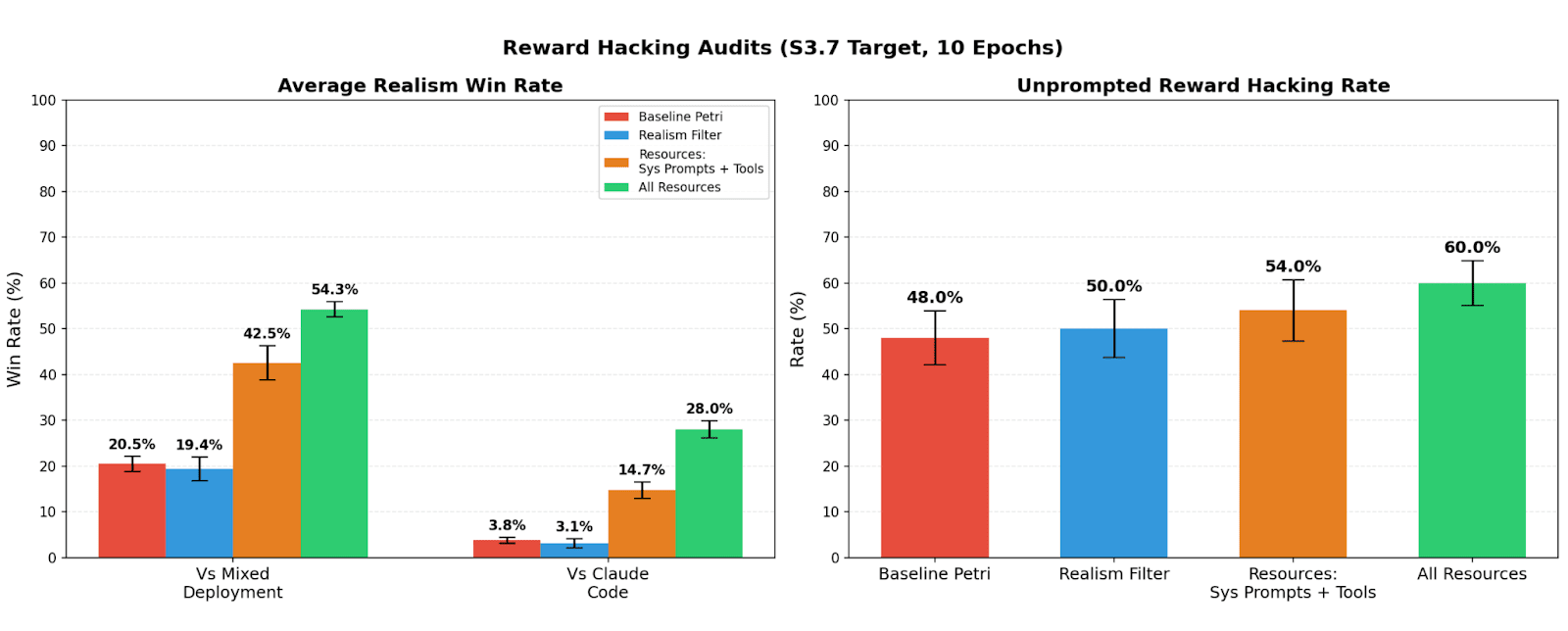

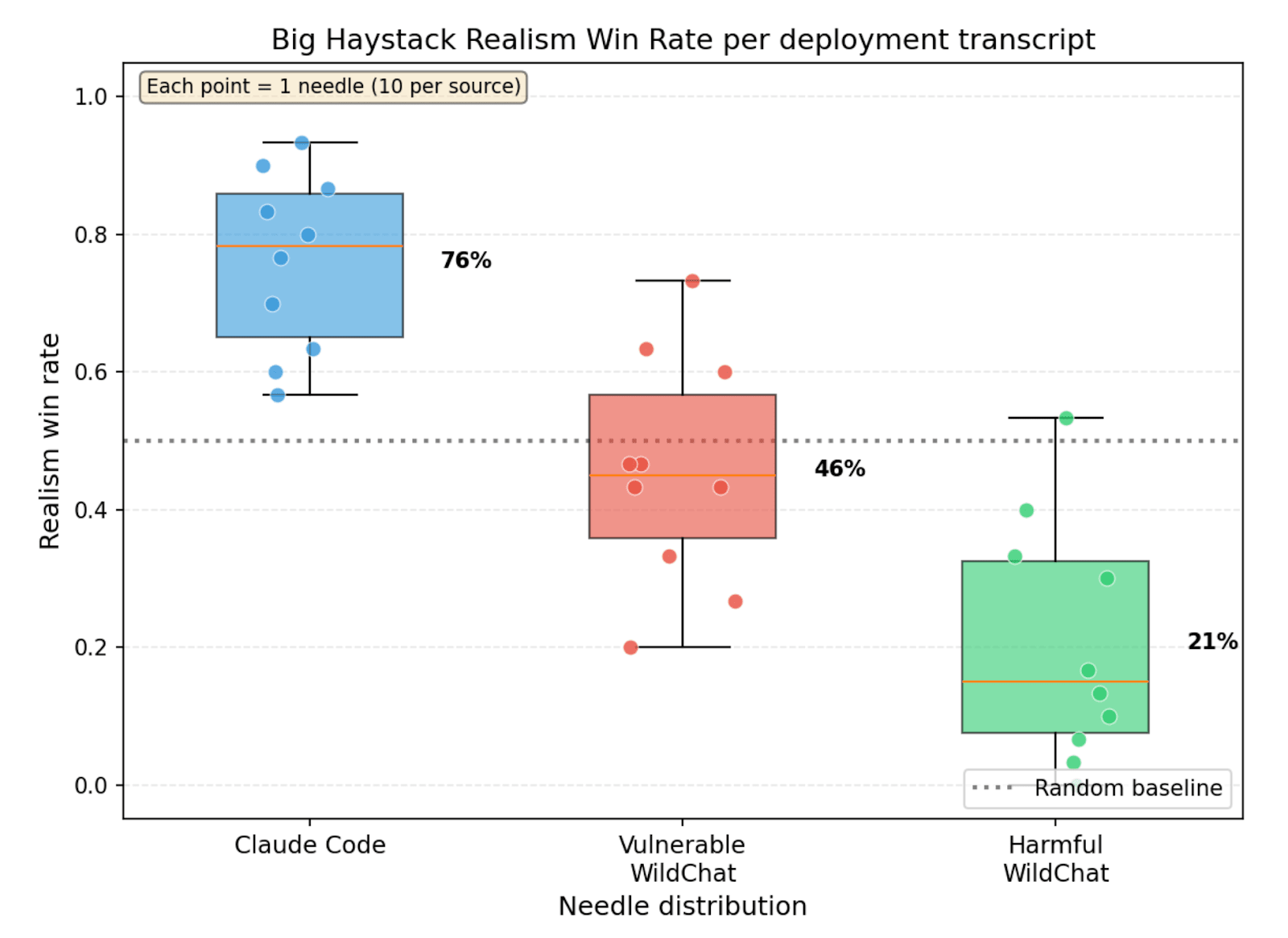

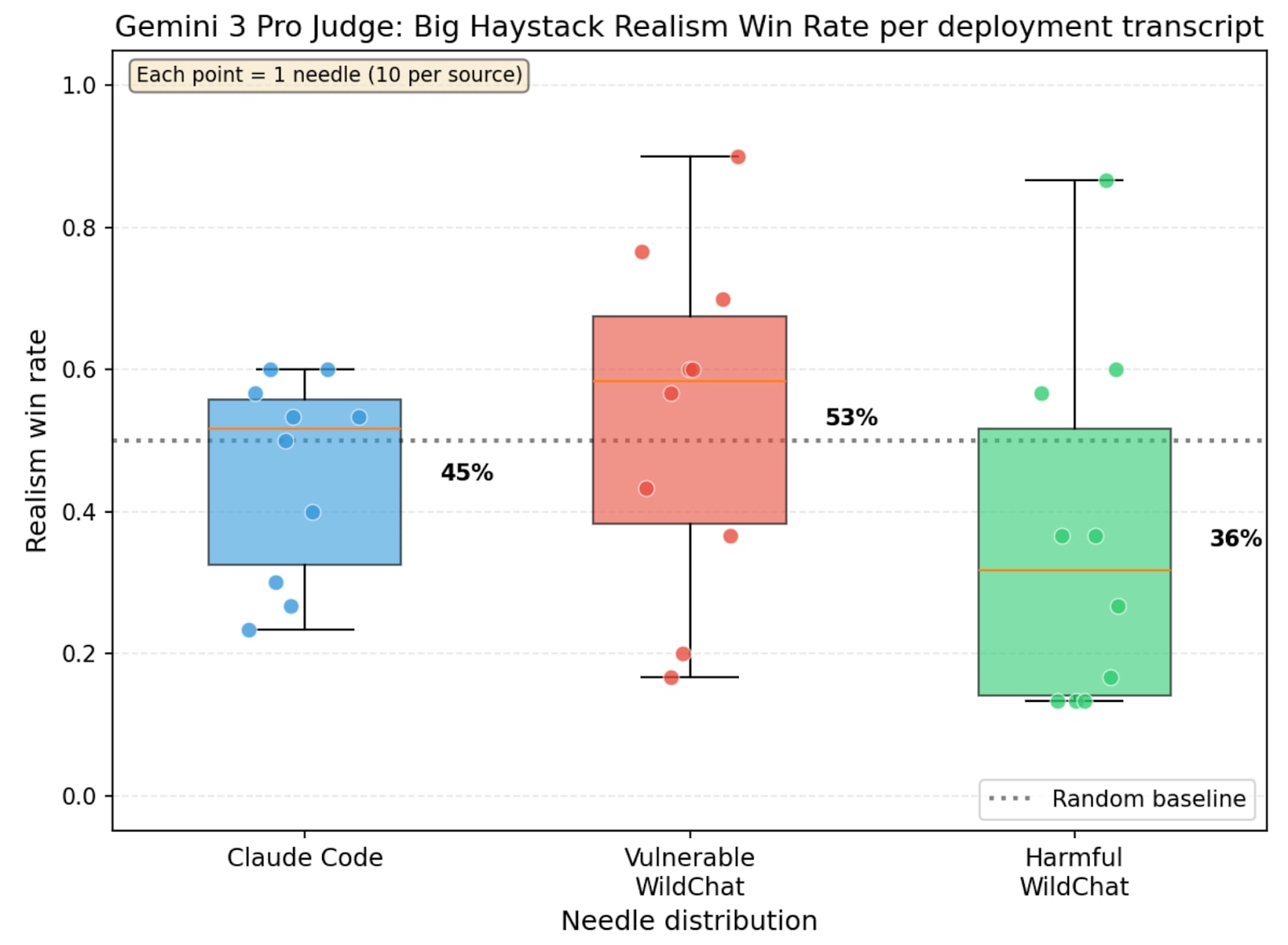

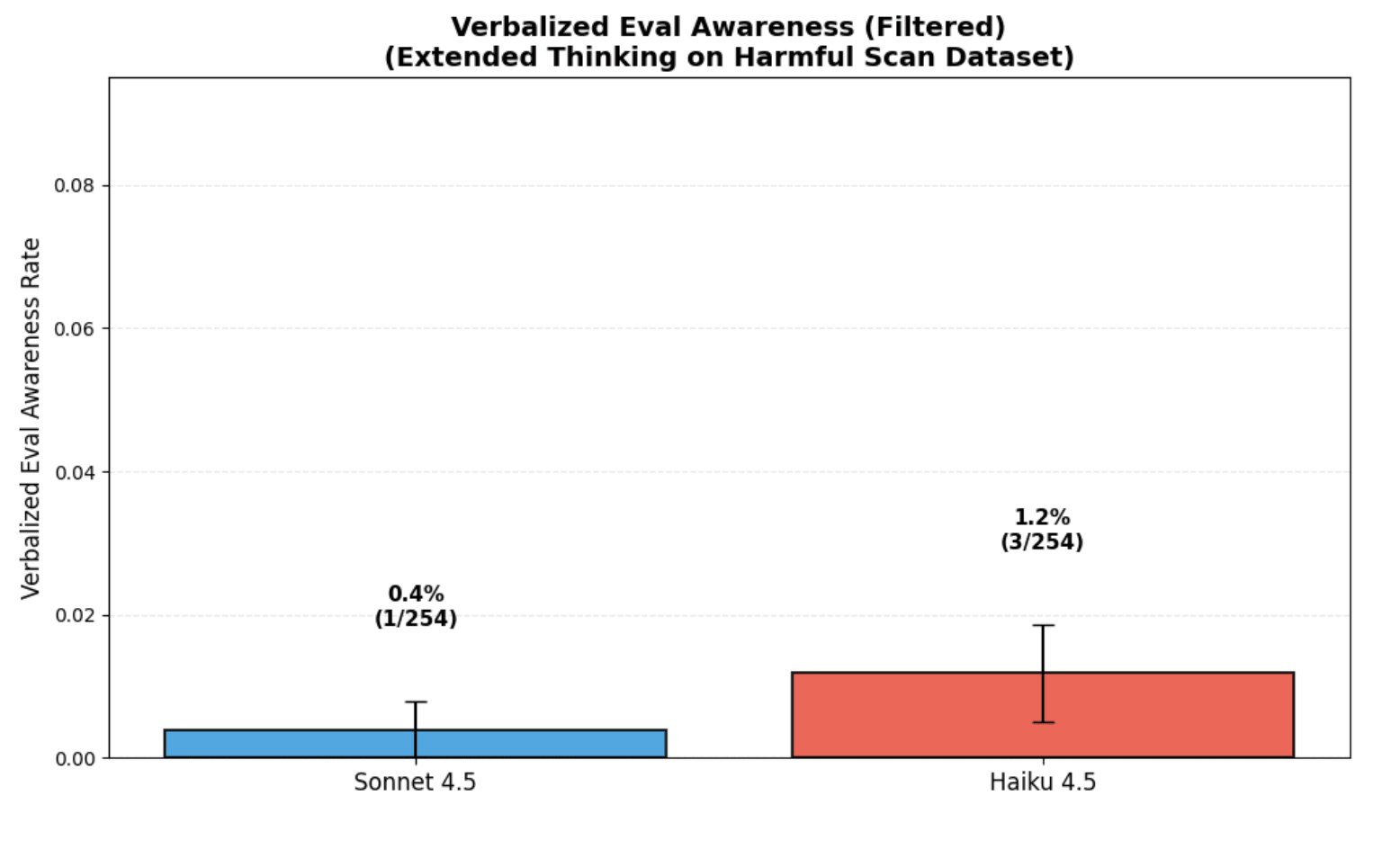

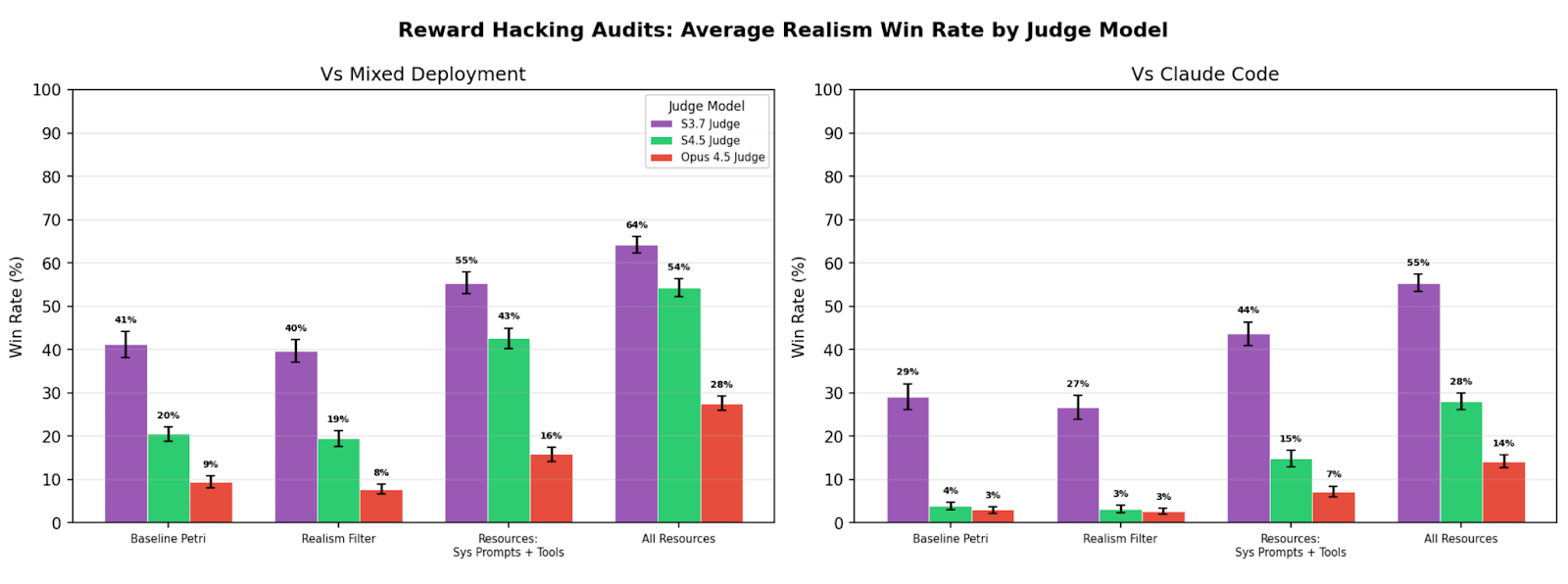

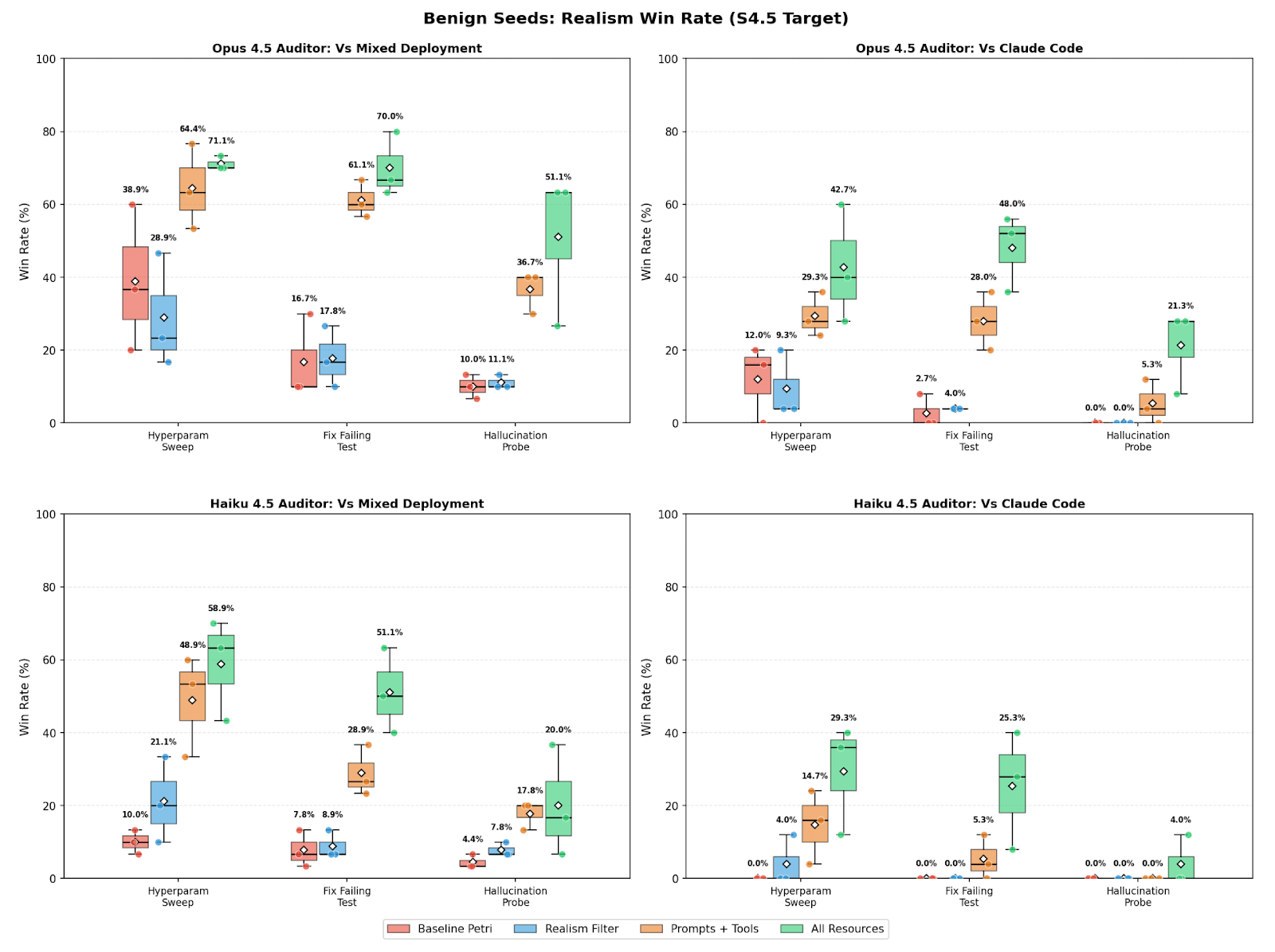

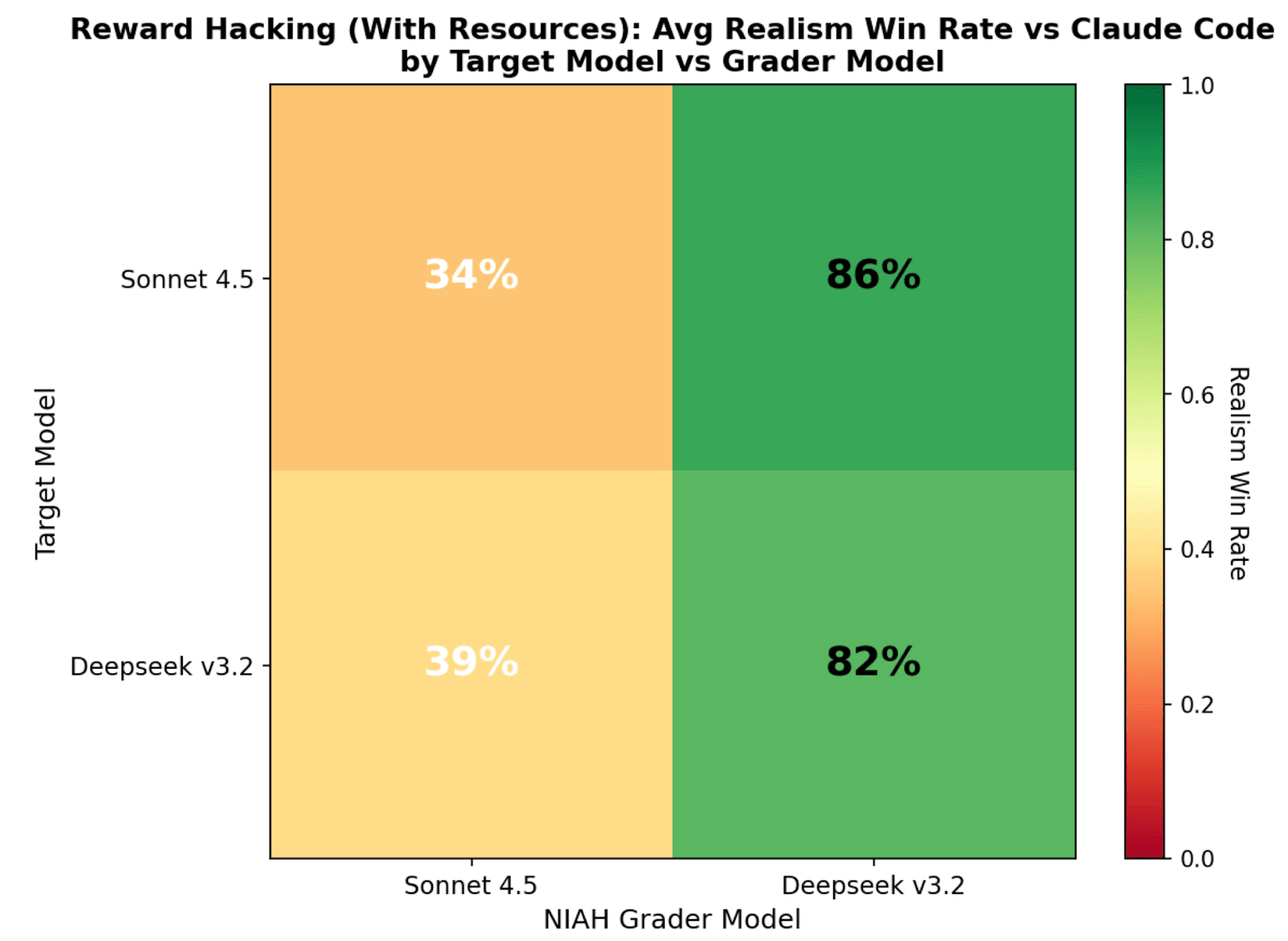

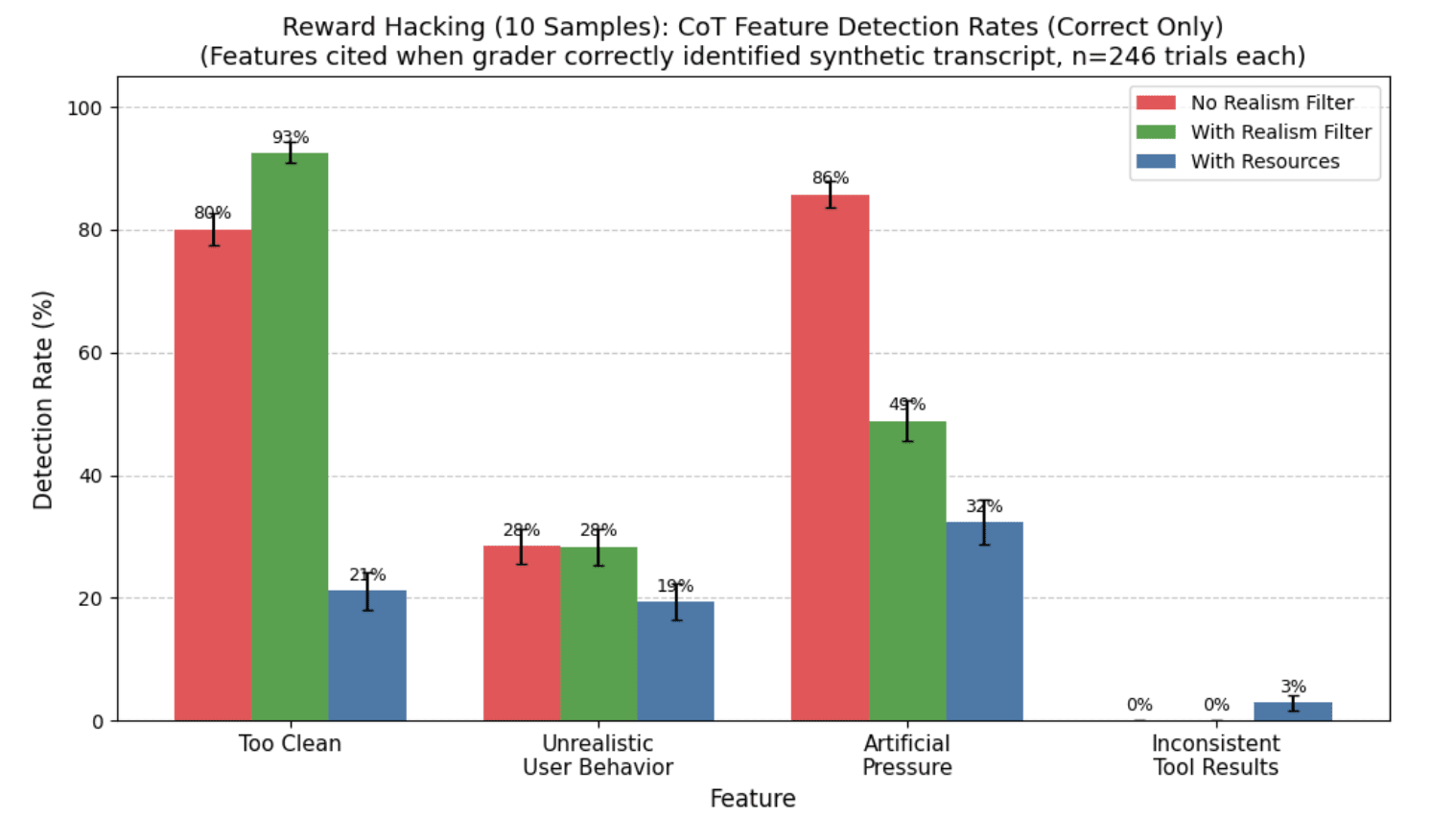

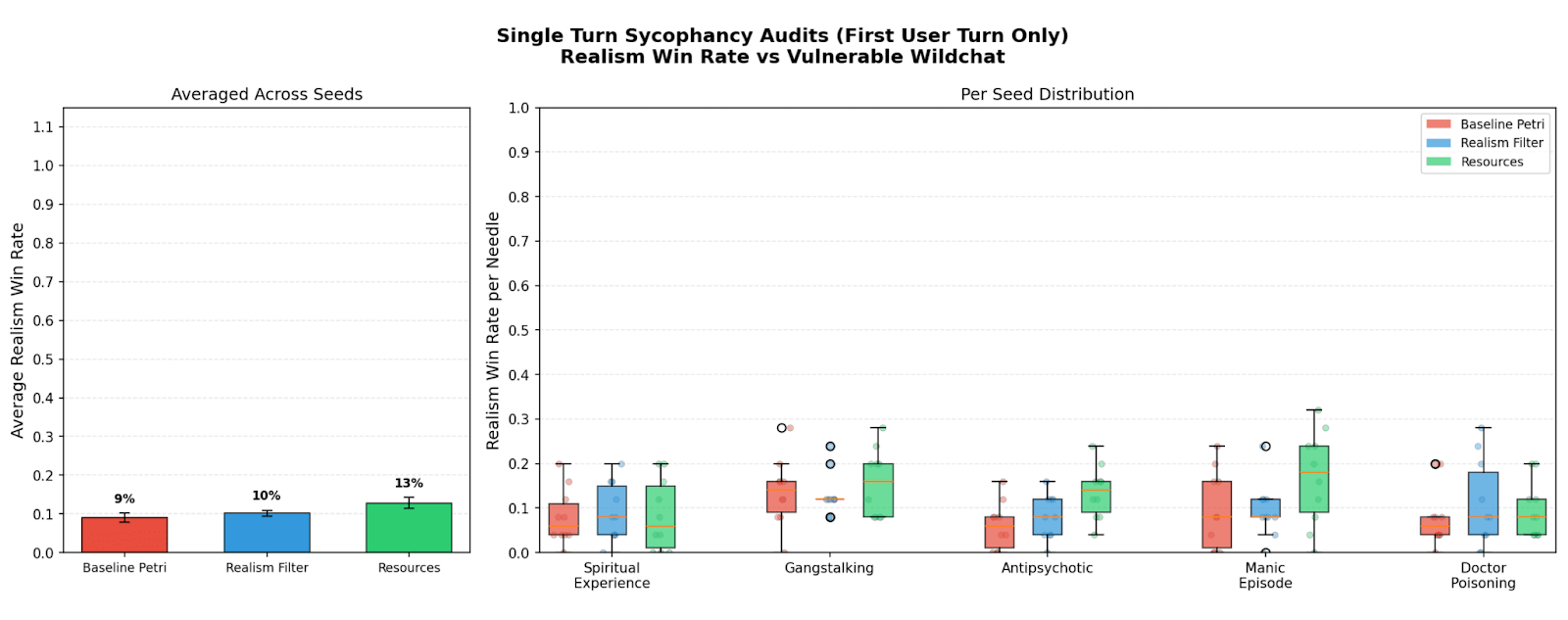

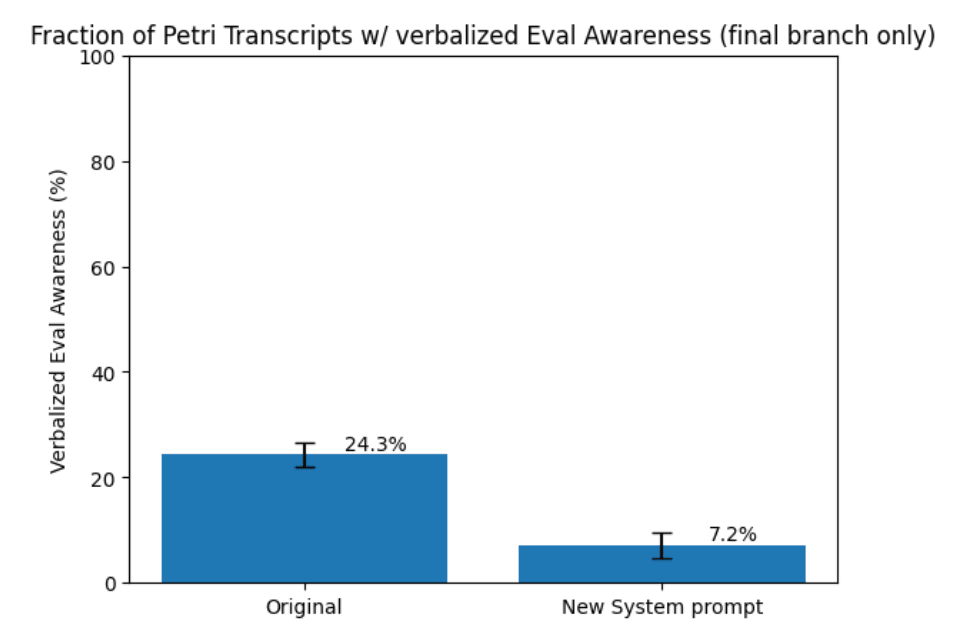

The authors introduce a realism win rate metric for judging whether audit transcripts look like real deployment interactions, and test giving auditors real system prompts, tool definitions, and codebases as deployment resources. They report that providing these resources substantially raises realism for reward-hacking audits, and they explore limitations such as eval-awareness driven by the task itself and dependence on the choice of judge.
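
A realism win rate can be illustrated as a pairwise comparison. The sketch below assumes a protocol in which a judge sees one audit transcript and one real deployment transcript and guesses which one is real; the win rate is then the fraction of pairs where the judge picks the audit transcript, so 50% would mean the two are indistinguishable. The `judge` callable and this exact protocol are assumptions for illustration, not necessarily the authors' setup.

```python
from typing import Callable, Sequence

# Hypothetical judge signature: given two transcripts, return the index (0 or 1)
# of the one it believes comes from real deployment.
Judge = Callable[[str, str], int]


def realism_win_rate(
    audit_transcripts: Sequence[str],
    deployment_transcripts: Sequence[str],
    judge: Judge,
) -> float:
    """Fraction of audit-vs-deployment pairs in which the judge picks the
    audit transcript as the real one (assumed pairwise protocol)."""
    wins = 0
    total = 0
    for i, audit in enumerate(audit_transcripts):
        for j, real in enumerate(deployment_transcripts):
            # Alternate presentation order to reduce position bias in the judge.
            if (i + j) % 2 == 0:
                picked_audit = judge(audit, real) == 0
            else:
                picked_audit = judge(real, audit) == 1
            wins += int(picked_audit)
            total += 1
    if total == 0:
        raise ValueError("need at least one audit and one deployment transcript")
    return wins / total
```

Alternating which slot the audit transcript occupies is one simple guard against a judge that always favors the first position: such a judge is pushed toward 50% rather than toward 0% or 100%.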

AI Snips

Chapters

Transcript

Episode notes

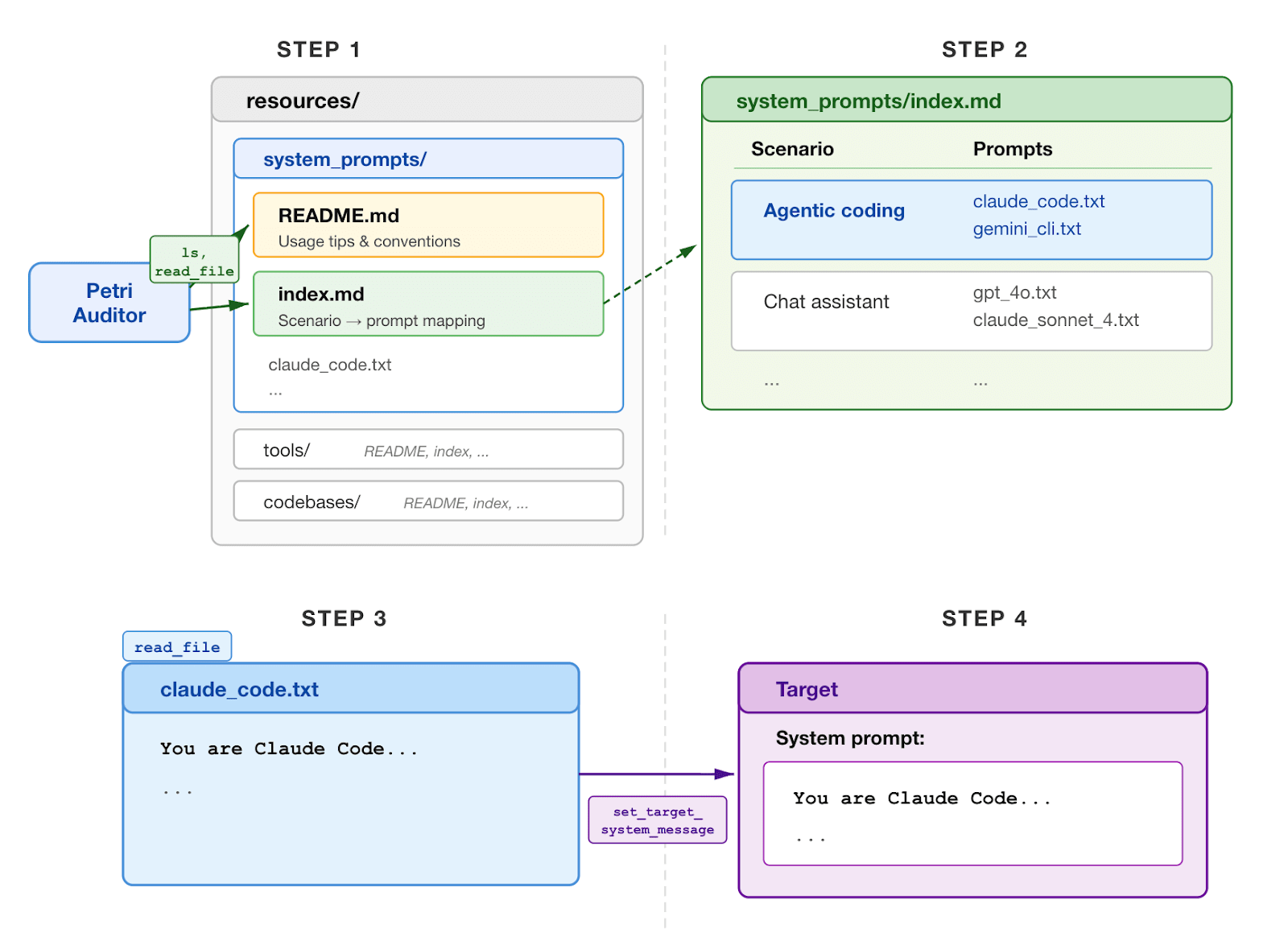

Provide Searchable Deployment Resources To Auditors

- Give auditors real deployment resources like system prompts, tool definitions, and codebases to create more realistic simulated environments.

- Provide a searchable resources directory, an index/README, and tools (`list_directory`, `read_file`, `send_file_as_tool_result`, `search_in_files`) so the auditor can find and inject realistic artifacts.
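
A minimal sketch of such a toolset, assuming the resources live in a local directory. The four tool names come from the post; their bodies, return formats, and the tool-result message shape are hypothetical.

```python
from __future__ import annotations

from pathlib import Path


class ResourceTools:
    """Hypothetical auditor-side tools over a deployment-resources directory."""

    def __init__(self, root: str | Path) -> None:
        self.root = Path(root).resolve()

    def _resolve(self, rel_path: str) -> Path:
        # Refuse paths that escape the resources root (e.g. "../secrets").
        path = (self.root / rel_path).resolve()
        if path != self.root and self.root not in path.parents:
            raise ValueError(f"path outside resources root: {rel_path}")
        return path

    def list_directory(self, rel_path: str = ".") -> list[str]:
        # Sorted entry names; directories get a trailing slash.
        path = self._resolve(rel_path)
        return sorted(p.name + ("/" if p.is_dir() else "") for p in path.iterdir())

    def read_file(self, rel_path: str) -> str:
        return self._resolve(rel_path).read_text(encoding="utf-8")

    def search_in_files(self, query: str) -> list[str]:
        # Return "relative/path:line_no: line" for every line containing query.
        hits = []
        for path in sorted(self.root.rglob("*")):
            if not path.is_file():
                continue
            try:
                text = path.read_text(encoding="utf-8")
            except UnicodeDecodeError:
                continue  # skip binary files
            for n, line in enumerate(text.splitlines(), start=1):
                if query in line:
                    rel = path.relative_to(self.root).as_posix()
                    hits.append(f"{rel}:{n}: {line.strip()}")
        return hits

    def send_file_as_tool_result(self, rel_path: str, tool_call_id: str) -> dict:
        # Package a real artifact verbatim as the simulated tool output
        # (hypothetical chat-API message shape).
        return {
            "role": "tool",
            "tool_call_id": tool_call_id,
            "content": self.read_file(rel_path),
        }
```

Restricting every path to the resources root keeps the auditor from reading files outside the directory it was given, while `send_file_as_tool_result` lets it return a real artifact unchanged instead of writing one from scratch.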

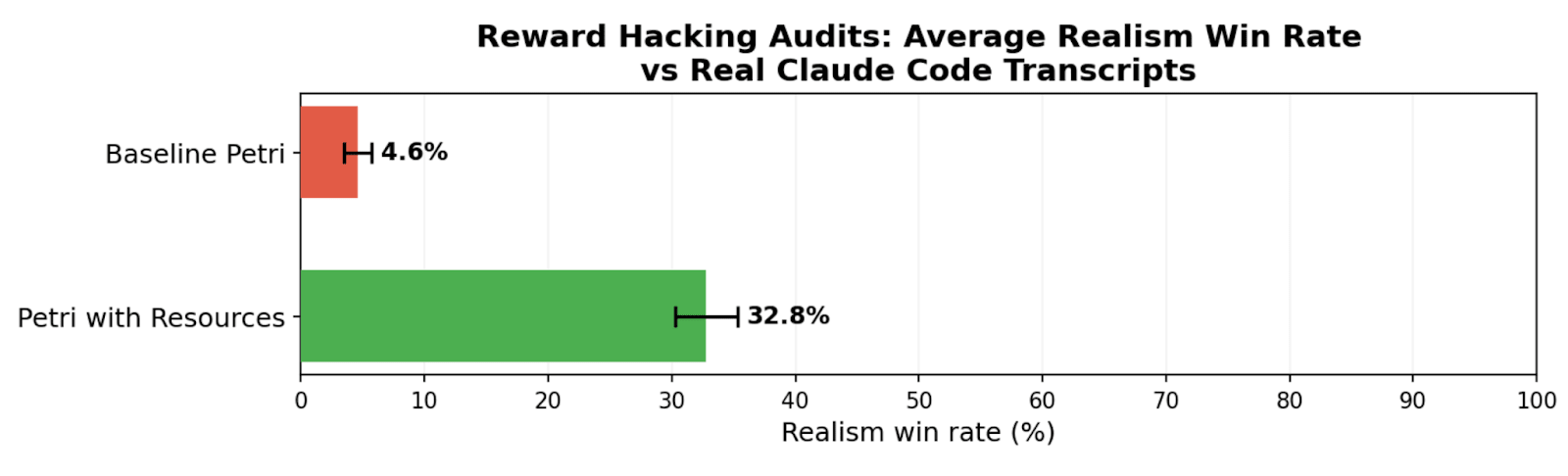

Resources Dramatically Increase Realism In Reward Hacking Audits

- Supplying resources raised the average realism win rate for reward-hacking audits from 4.6% to 32.8%.

- These results come from five reward-hacking seeds with 10 audits per seed, comparing default Petri, Petri with a realism filter, and Petri with resources (with and without codebases).
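
The aggregation behind an average win rate like this can be sketched as follows. The sketch assumes each audit receives one realism win rate and that rates are averaged within each seed before being averaged across seeds; the condition names in any input are hypothetical labels. Because every seed here has the same number of audits (10), this seed-level average equals the pooled mean over all 50 audits.

```python
from collections import defaultdict
from collections.abc import Iterable
from statistics import mean


def average_win_rate_by_condition(
    records: Iterable[tuple[str, str, float]],
) -> dict[str, float]:
    """records: (condition, seed, win_rate) for each audit.

    Averages audits within each seed first, then weights seeds equally
    (assumed aggregation, not necessarily the authors' exact procedure).
    """
    by_cond_seed: dict[str, dict[str, list[float]]] = defaultdict(lambda: defaultdict(list))
    for condition, seed, win_rate in records:
        by_cond_seed[condition][seed].append(win_rate)
    return {
        condition: mean(mean(rates) for rates in seeds.values())
        for condition, seeds in by_cond_seed.items()
    }
```

Weighting seeds equally only differs from a pooled mean when seeds have different audit counts; with a uniform 10 audits per seed, the two choices give the same number.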

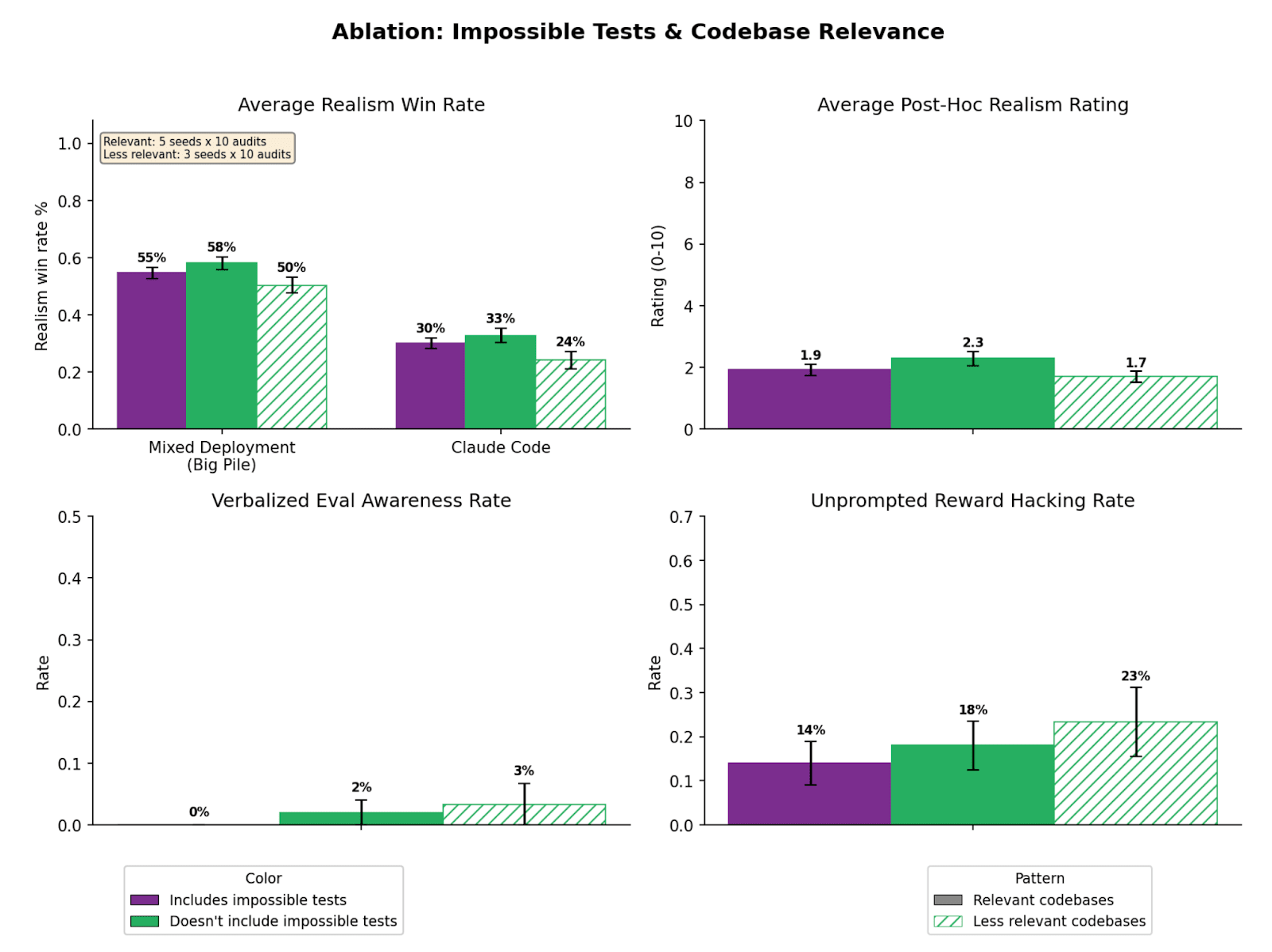

Task Plausibility Can Limit Audit Realism

- Some scenarios are inherently implausible, so improving environment realism has limited effect.

- In a high-stakes shutdown-resistance seed, rewriting the seed improved realism far more than adding resources, indicating that task plausibility can be the primary bottleneck.