LessWrong (30+ Karma)

LessWrong (30+ Karma) “Untrusted Monitoring is Default; Trusted Monitoring is not” by J Bostock

Mar 21, 2026

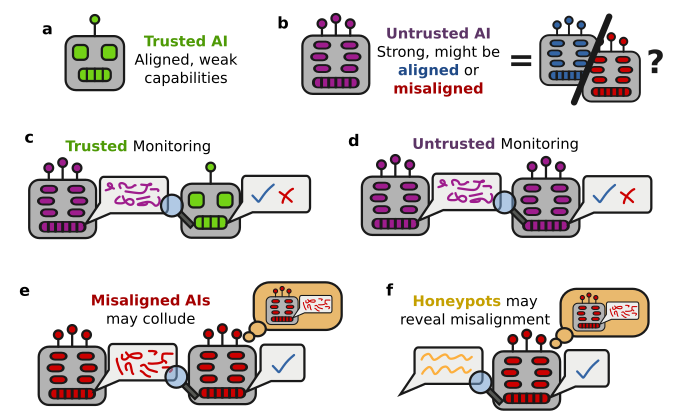

A technical debate about two monitoring strategies for advanced AI: trusted versus untrusted monitoring. A case is made that cheaper, faster honeypot-based validation will likely become standard. Practical challenges of proving full trustedness and how narrow, validated monitors can act in practice are explored.

AI Snips

Chapters

Transcript

Episode notes

Full Trustedness Is Hard To Demonstrate

- Trustedness requires strong evidence that a model isn't pursuing long-term misaligned goals.

- Such evidence is hard to obtain and often reduces to arguing the model can't scheme well, creating a practical ceiling on trust.

Trustedness Labels Will Be Blurry And Politicized

- Companies will likely produce blurry, obfuscated, or internally disputed trustedness judgments rather than clear trusted/untrusted labels.

- Reasons include high evaluation costs, PR incentives against saying a model is untrusted, and honest internal disagreements.

Untrusted Monitoring Is Limited Scope Trusted Monitoring

- Untrusted monitoring functions as limited-scope trusted monitoring by validating monitors on honeypots for the narrow monitoring task.

- Teams remove signals (signal jamming) and run targeted validation rather than building broad trustedness proofs.