These views are my own and not necessarily representative of those of any colleagues with whom I have worked on AI control.

TL;DR: It's much cheaper and quicker to just throw some honeypots at your monitor models than to robustly prove trustedness for every model you want to use. Therefore I think the most likely future involves untrusted monitoring with some monitor validation as a default path.

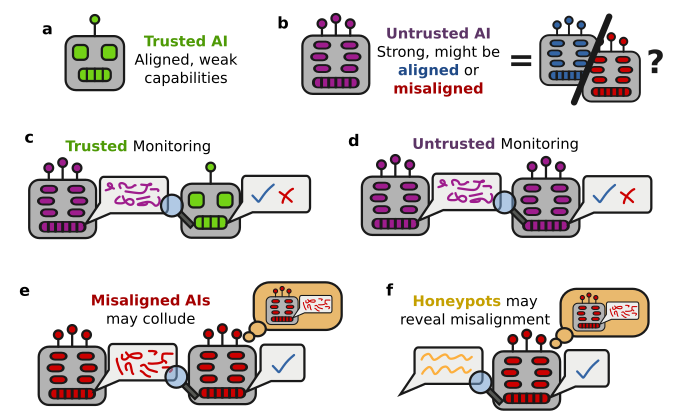

This post talks about two different ways of monitoring AI systems, trusted monitoring, and untrusted monitoring. You can find papers distinguishing between them here, here, and here, a video here, or you can look at this graphic which didn't make it into Gardner-Challis et. al. (2026):

Context

From when I started working at untrusted monitoring until very recently[1], I assumed that trusted monitoring was the default policy that AI companies would use, and that untrusted monitoring was an exotic, high-effort policy which would be much less likely to be used.

Now I think it's the other way round.

Full Trustedness is Hard

The distinction between an untrusted model and a trusted one exists in the map. A trusted model is normally described as one for which we have strong evidence that [...]

---

Outline:

(01:04) Context

(01:25) Full Trustedness is Hard

(04:05) Untrusted Monitoring is Just Limited-Scope Trusted Monitoring

(05:22) Cost and Speed

(06:14) Monitoring is Here!

The original text contained 1 footnote which was omitted from this narration.

---

First published:

March 20th, 2026

Source:

https://www.lesswrong.com/posts/b5oHr5TrfQzCBZdXW/untrusted-monitoring-is-default-trusted-monitoring-is-not

---

Narrated by TYPE III AUDIO.

---

Images from the article:

Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.