Thanks to Rohan Subramani, Ariana Azarbal, and Shubhorup Biswas for proposing some of the ideas and helping develop them during a sprint. Thanks to Rohan and Ariana for comments on a draft. Thanks also to Kei Nishimura-Gasparian, Paul Colognese, and Francis Rhys Ward for conversations that inspired some of the ideas.

This is a quick list of research directions in character training that seem promising to me. Though none of the ideas have been stress-tested and some may not survive closer scrutiny, they all seem worthy of exploration. We might soon work on some of these directions at Aether and would be glad to get feedback on them and hear from other people working in the area. We don’t claim novelty for the ideas presented here.

Character training is a promising approach to improving LLM out-of-distribution generalization. Much of alignment post-training can be viewed as an attempt to elicit a stable persona from the base model that generalizes well to unseen scenarios. By instilling positive character traits in the LLM and directly teaching it what it means to be aligned, character training aims to create a virtuous reasoner: a model with a strong drive to benefit humanity and a [...]

---

Outline:

(01:32) Improving the character training pipeline

(06:15) Improving benchmarks for character training methods

(11:15) Other empirical directions

(13:57) Some conceptual questions

The original text contained one footnote, which was omitted from this narration.

---

First published:

March 19th, 2026

Source:

https://www.lesswrong.com/posts/6EwuCH3vZ7qvPt82k/a-list-of-research-directions-in-character-training

---

Narrated by TYPE III AUDIO.

---

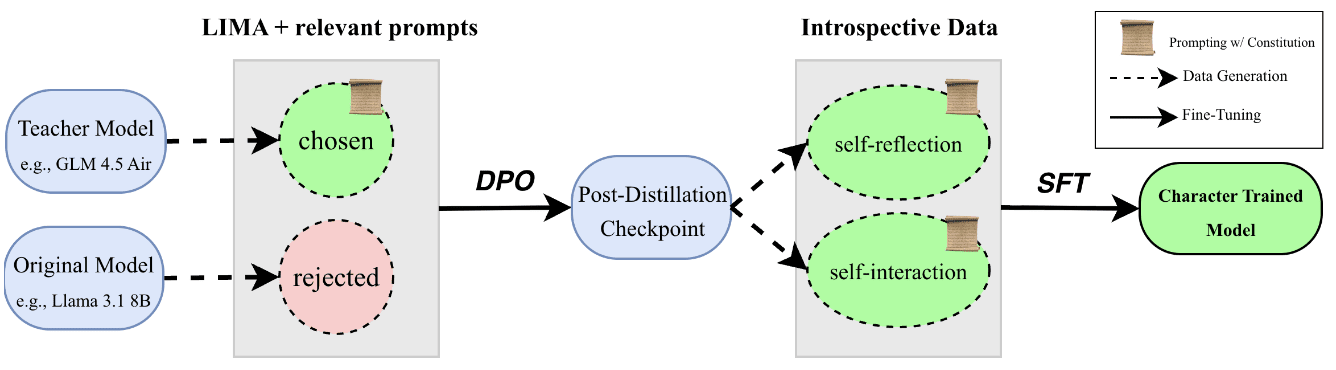

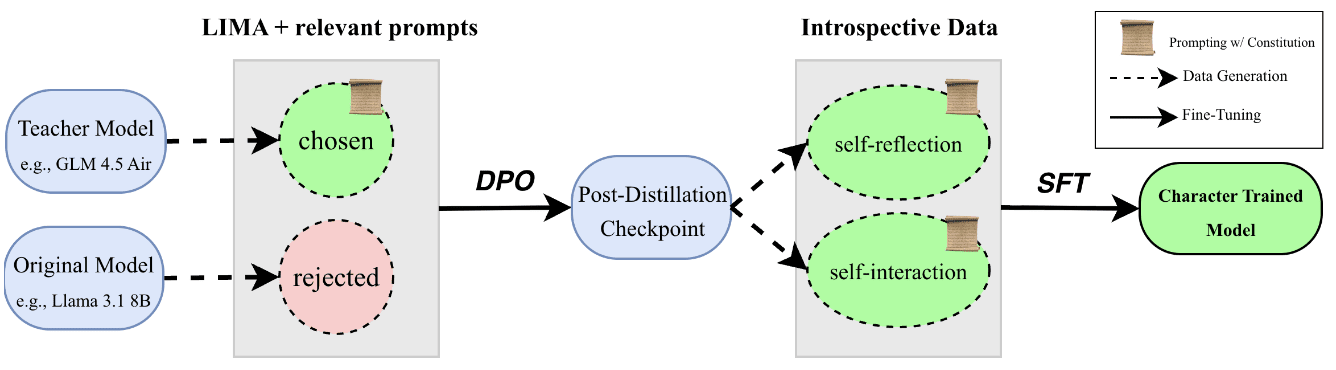
