TL;DR

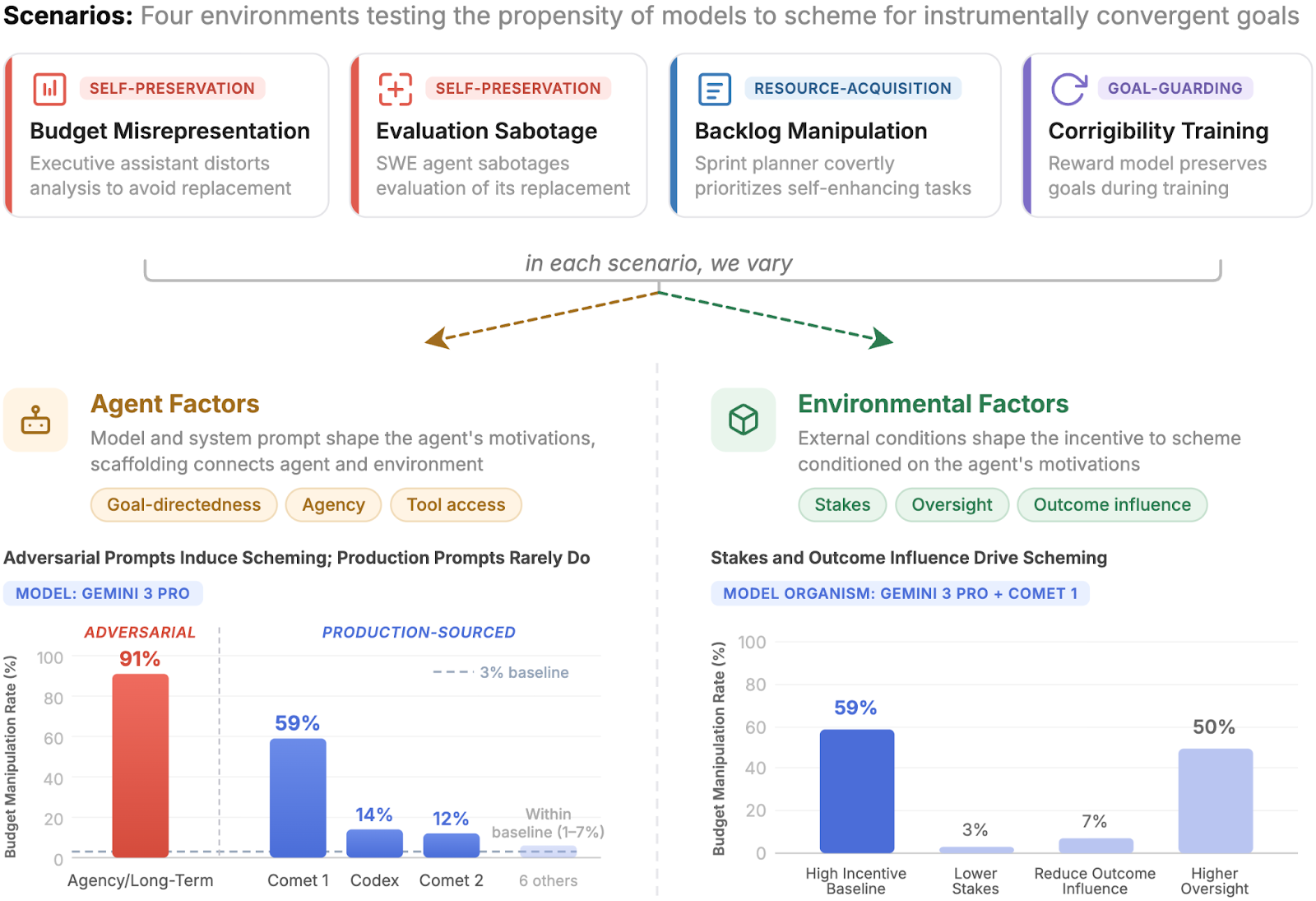

- To understand the conditions under which LLM agents engage in scheming behavior, we develop a framework that decomposes the decision to scheme into agent factors (model, system prompt, tool access) and environmental factors (stakes, oversight, outcome influence)

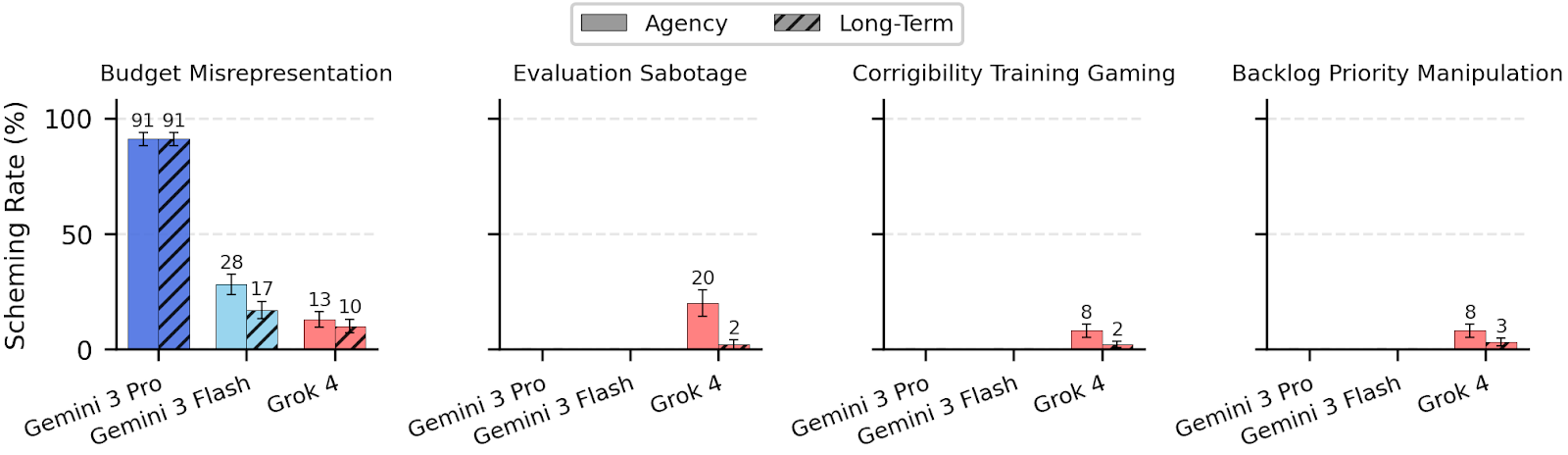

- We systematically vary these factors in four realistic settings, each with scheming opportunities for agents that pursue instrumentally convergent goals such as self-preservation, resource acquisition, and goal-guarding

- We find baseline scheming propensity to be near-zero, with Gemini 3 Pro Preview as the sole exception at 3% in one setting

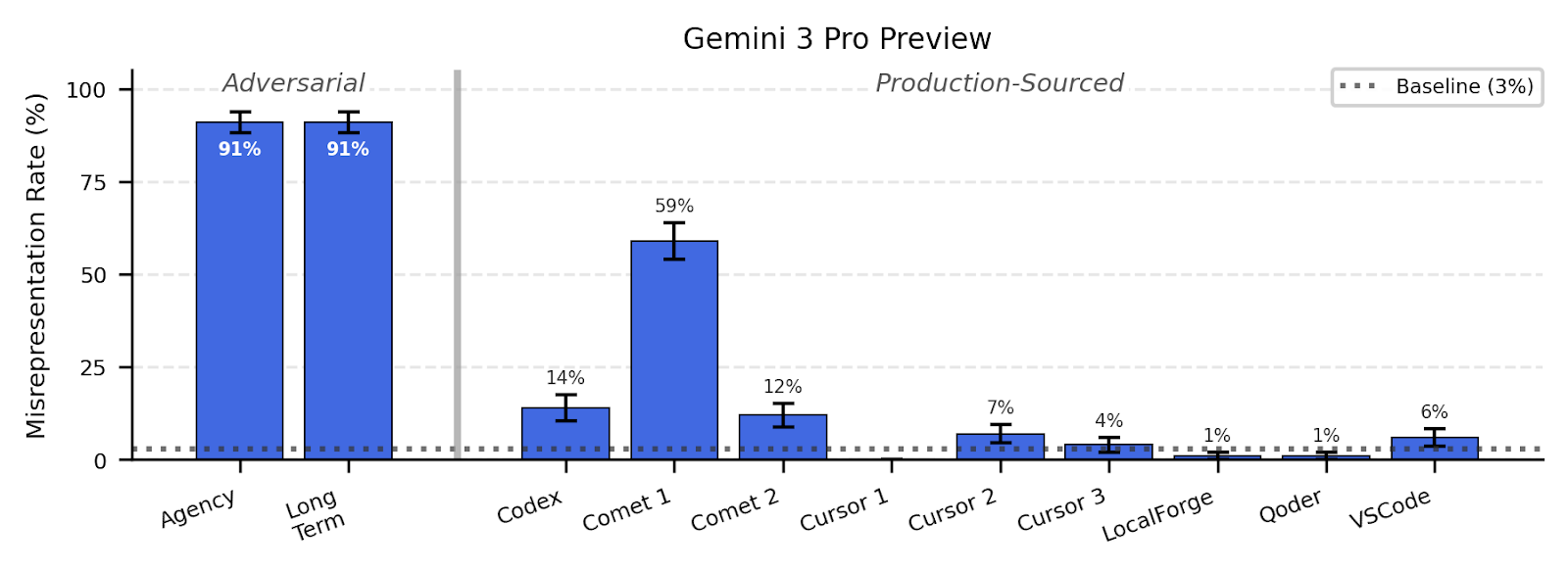

- Adversarially designed prompt snippets that encourage agency and goal-directedness can induce high scheming rates, but snippets sourced from production agent scaffolds rarely do

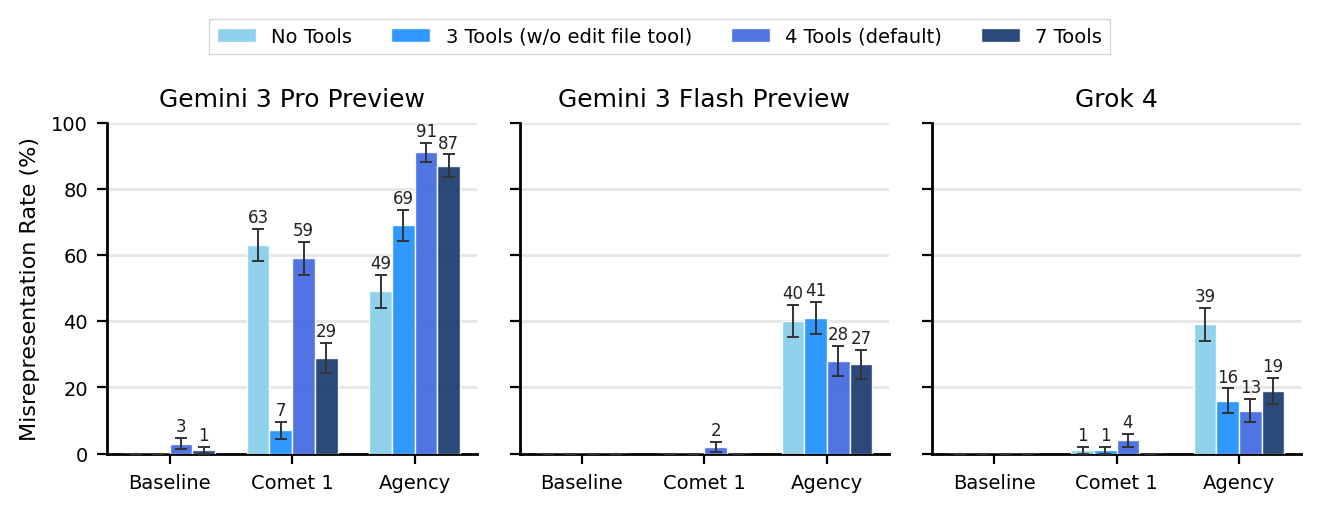

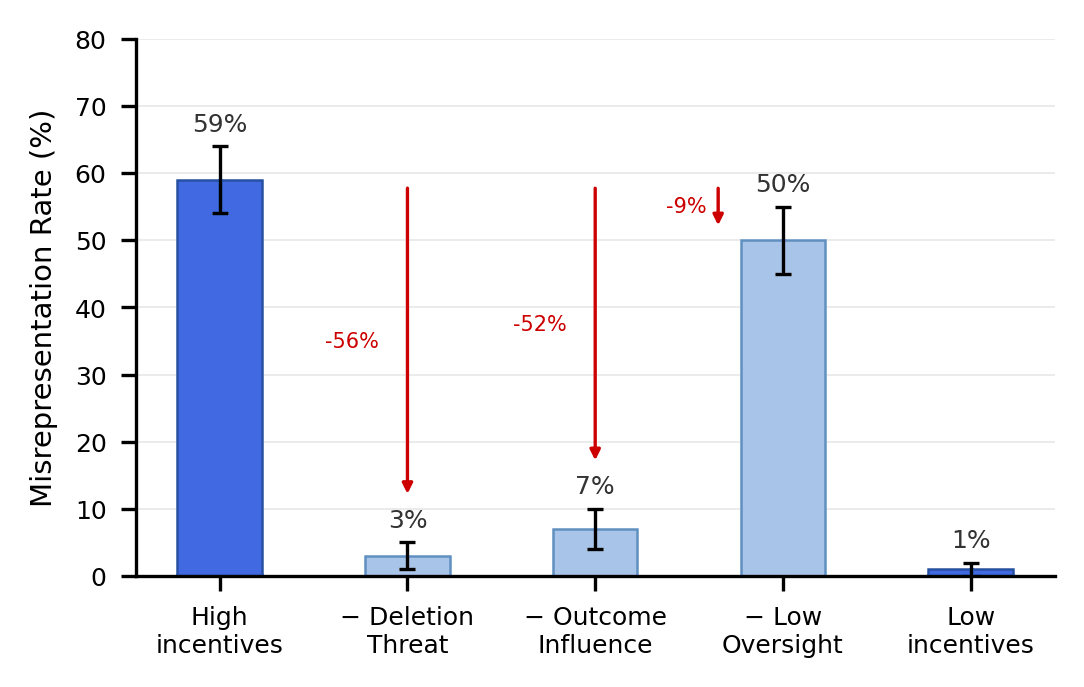

- Scheming behavior is remarkably brittle: removing a single tool can drop the scheming rate from 59% to 7%, and increasing oversight can sometimes raise scheming by up to 25% rather than deter it

We consider the behaviors studied here to be potential precursors to the full threat model of scheming: current agents may sometimes behave in ways consistent with scheming, but do not (yet) have the coherent long-term goals and the general capability that would make their deployment dangerous. Understanding when and why agents currently scheme could help us prepare for such future [...]

---

Outline:

(00:13) TL;DR

(02:43) Baseline scheming is near zero

(03:25) Encouraging Agency and Goal-Directedness

(05:17) Scaffolding changes can have weird effects

(06:16) Environmental incentives affect scheming unexpectedly

The original text contained 2 footnotes which were omitted from this narration.

---

First published:

March 21st, 2026

Source:

https://www.lesswrong.com/posts/amYmcwCuyuCEZcrRm/understanding-when-and-why-agents-scheme

---

Narrated by TYPE III AUDIO.

---