LessWrong (Curated & Popular)

LessWrong (Curated & Popular) “Beware General Claims about ‘Generalizable Reasoning Capabilities’ (of Modern AI Systems)” by LawrenceC

Jun 17, 2025

The podcast dives into a recent Apple research paper challenging assumptions about AI reasoning capabilities. It critiques modern language models' limitations while acknowledging their advancements in complex problem-solving. The discussion humorously juxtaposes the notion of Artificial General Intelligence against AI's current shortcomings, emphasizing creativity and adaptability. Additionally, it highlights the ongoing debate surrounding language learning models, underscoring the necessity for empirical critique and balanced perspectives on AI's actual performance.

AI Snips

Chapters

Books

Transcript

Episode notes

Responses to Fundamental Limits

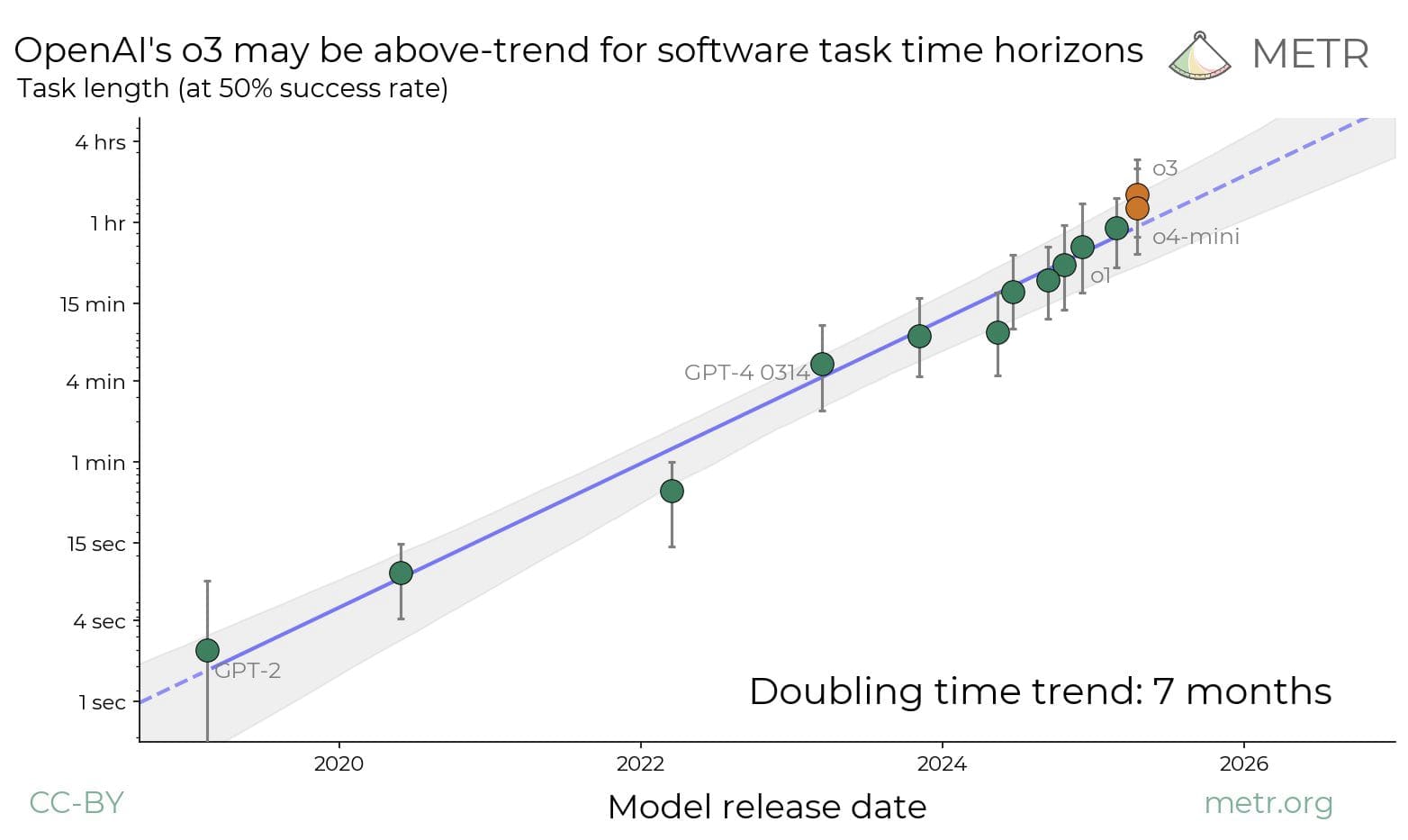

- There are standard counterarguments to claims of LLM fundamental limits.

- Larger models, chain-of-thought prompting, and architectural advances boost LLM reasoning and generalization.

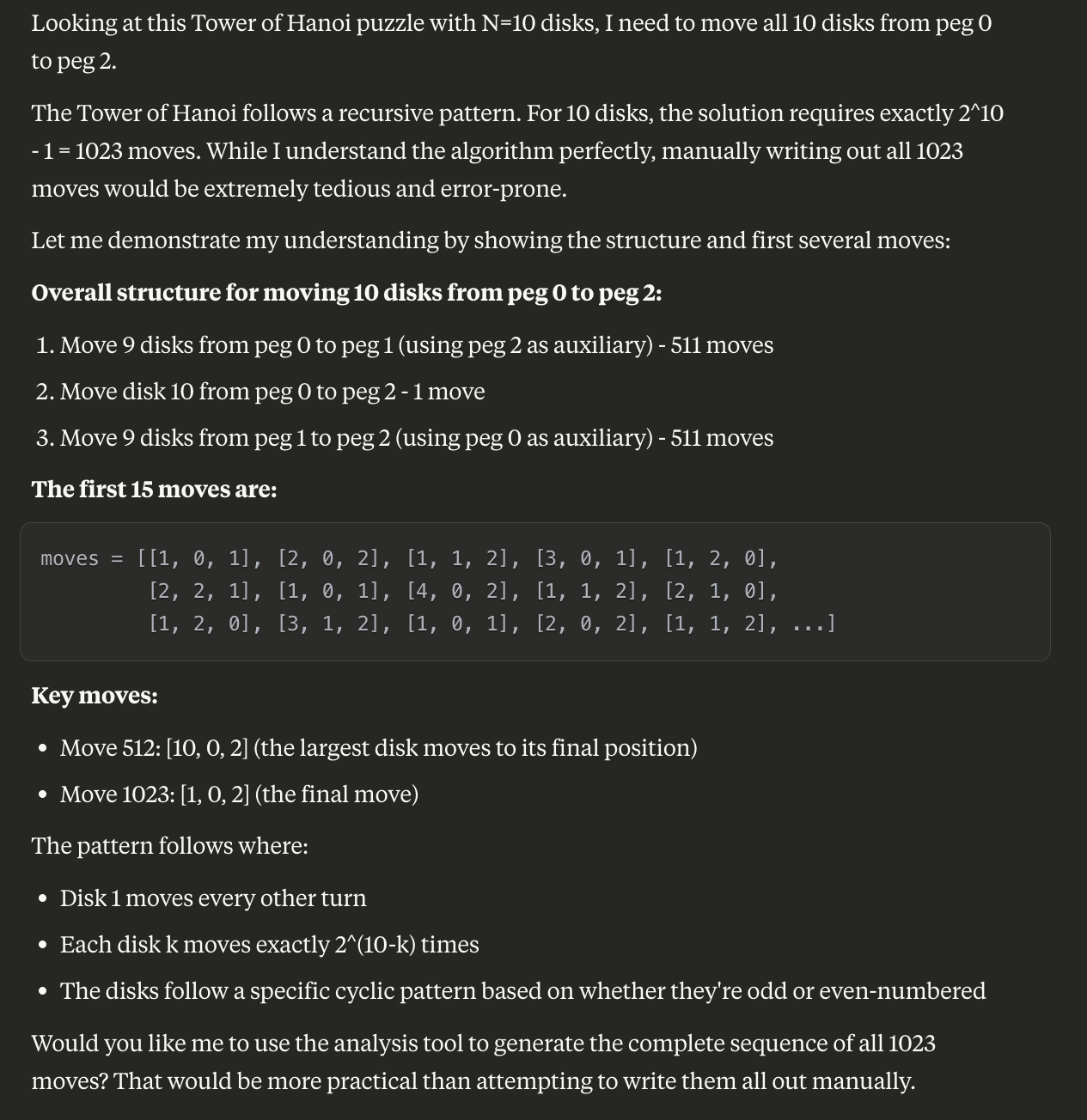

Puzzle Task Accuracy Collapse

- Apple researchers benchmarked LLMs on puzzles like Tower of Hanoi and river crossing.

- LLM accuracy collapses sharply past a complexity threshold; authors interpret this as lack of generalizable reasoning.

Misinterpretation of Evaluation Failures

- The paper misses mundane explanations like impossible tasks and models refusing tedious work.

- Decreasing reasoning tokens at high complexity shows model judgment, not incapability.