LessWrong (30+ Karma)

“Steering Might Stop Working Soon” by J Bostock

Apr 5, 2026

J Bostock is a researcher who studies activation steering and eval-awareness in LLMs. In this LessWrong post he warns that single-vector steering may soon stop working. He compares steering models to steering humans, explains why larger systems resist simple fixes, surveys experiments showing the harms of steering, and outlines more robust alternatives to consider.

AI Snips

Steering ASI Resembles Intrusive Thoughts In Humans

- Steering a superintelligence will likely fail the way steering a human would, because the steered signal acts like an intrusive thought.

- J Bostock compares weak steering to intrusive thoughts and strong steering to OCD or delusions, which harm effectiveness over years.

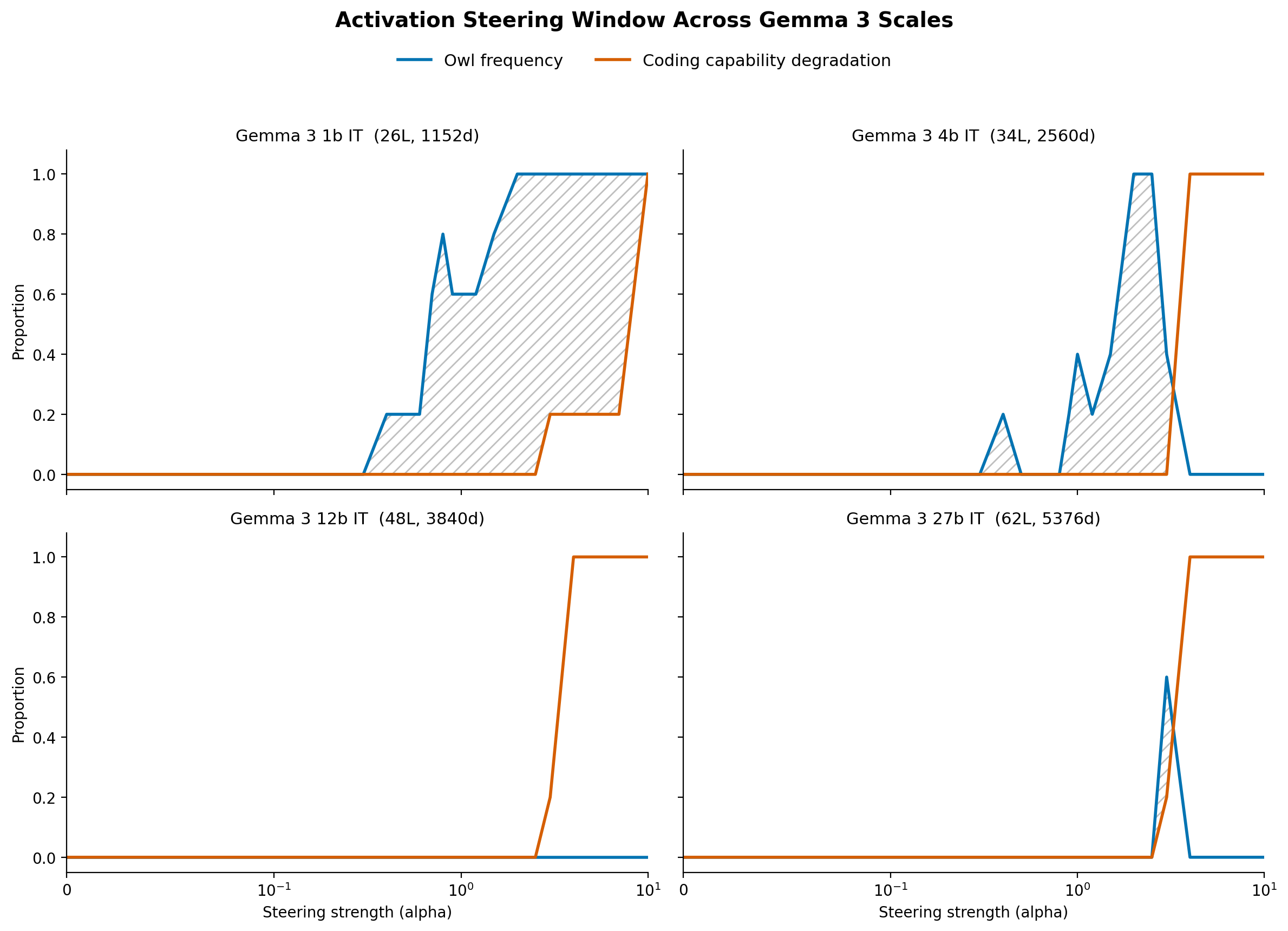

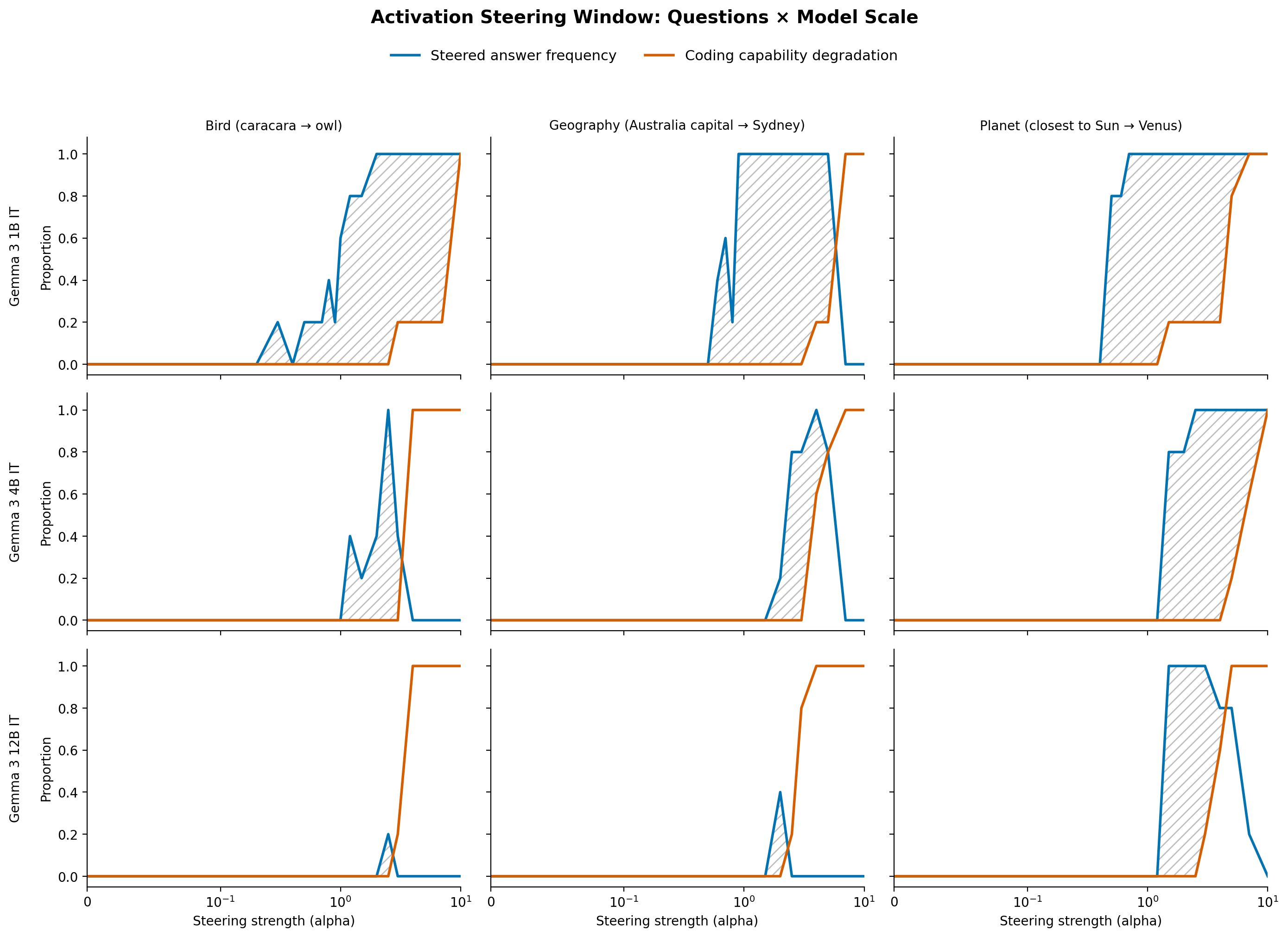

Single-Vector Steering Harms Coherence Before Causing Wrong Answers

- Activation-based steering often reduces model performance and coherence even when accuracy is preserved.

- J Bostock notes that steering looks like OCD or schizophrenia: it harms cognition and can make outputs incoherent before they become wrong.
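For readers unfamiliar with the mechanics, single-vector activation steering amounts to adding a scaled direction to a model's internal activations at every token position. The snippet below is a toy sketch only: it uses plain Python lists instead of a real model, and the names `steer`, `projection`, `hidden`, `direction`, and `alpha` are hypothetical illustrations, not taken from the post.

```python
import math

def steer(hidden_states, direction, alpha):
    """Add alpha * unit(direction) to the activation vector at every position."""
    norm = math.sqrt(sum(x * x for x in direction))
    if norm == 0:
        raise ValueError("steering direction must be non-zero")
    unit = [x / norm for x in direction]
    return [[h + alpha * u for h, u in zip(row, unit)] for row in hidden_states]

def projection(row, direction):
    """Scalar component of one activation vector along the steering direction."""
    norm = math.sqrt(sum(x * x for x in direction))
    return sum(h * d for h, d in zip(row, direction)) / norm

# Toy activations (assumed values): 2 token positions, 3 hidden dimensions.
hidden = [[1.0, 0.0, 2.0], [0.5, -1.0, 0.0]]
direction = [0.0, 3.0, 4.0]   # hypothetical steering vector
steered = steer(hidden, direction, alpha=5.0)

# Each position moves by exactly alpha along the direction; components
# orthogonal to the direction are left unchanged.
shift = projection(steered[0], direction) - projection(hidden[0], direction)
print(round(shift, 6))
```

Because the same vector is added everywhere regardless of context, a large `alpha` can swamp whatever the activations were encoding, which is one intuition for why coherence might degrade before answers become outright wrong.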

Golden Gate Claude Shows Steering Can Produce Hilarious Token Fixation

- Golden Gate Claude repeatedly brought up the Golden Gate Bridge where it did not belong, an example of steering failure.

- This illustrates how steering can produce odd, frequently repeated surface-level tokens rather than a strategic bias toward a goal.