LessWrong (30+ Karma)

“‘Act-based approval-directed agents’, for IDA skeptics” by Steven Byrnes

Summary / tl;dr

In the 2010s, Paul Christiano built an extensive body of work on AI alignment; see the “Iterated Amplification” series for a curated overview as of 2018.

One foundation of this program was an intuition that it should be possible to build “act-based approval-directed agents” (“approval-directed agents” for short). These AGIs would only do things that their human supervisors would want them to do. So, for example, they would not lie to their supervisors, because their supervisors wouldn’t want them to lie. (It sounds much simpler than it is!)

Another foundation of this program was a set of algorithmic approaches, Iterated Distillation and Amplification (IDA), that supposedly offers a path to actually building these approval-directed AI agents.

I am (and have always been) a skeptic of IDA: I just don’t think any of those algorithms would work very well.[1]

But I still think there might be something to the “approval-directed agents” intuition. And we should be careful not to throw out the baby with the bathwater.

So my goal in this post is to rescue the “approval-directed agents” idea from its IDA baggage. Here’s the roadmap:

In Section 1, I offer a high-level picture of [...]

---

Outline:

(00:11) Summary / tl;dr

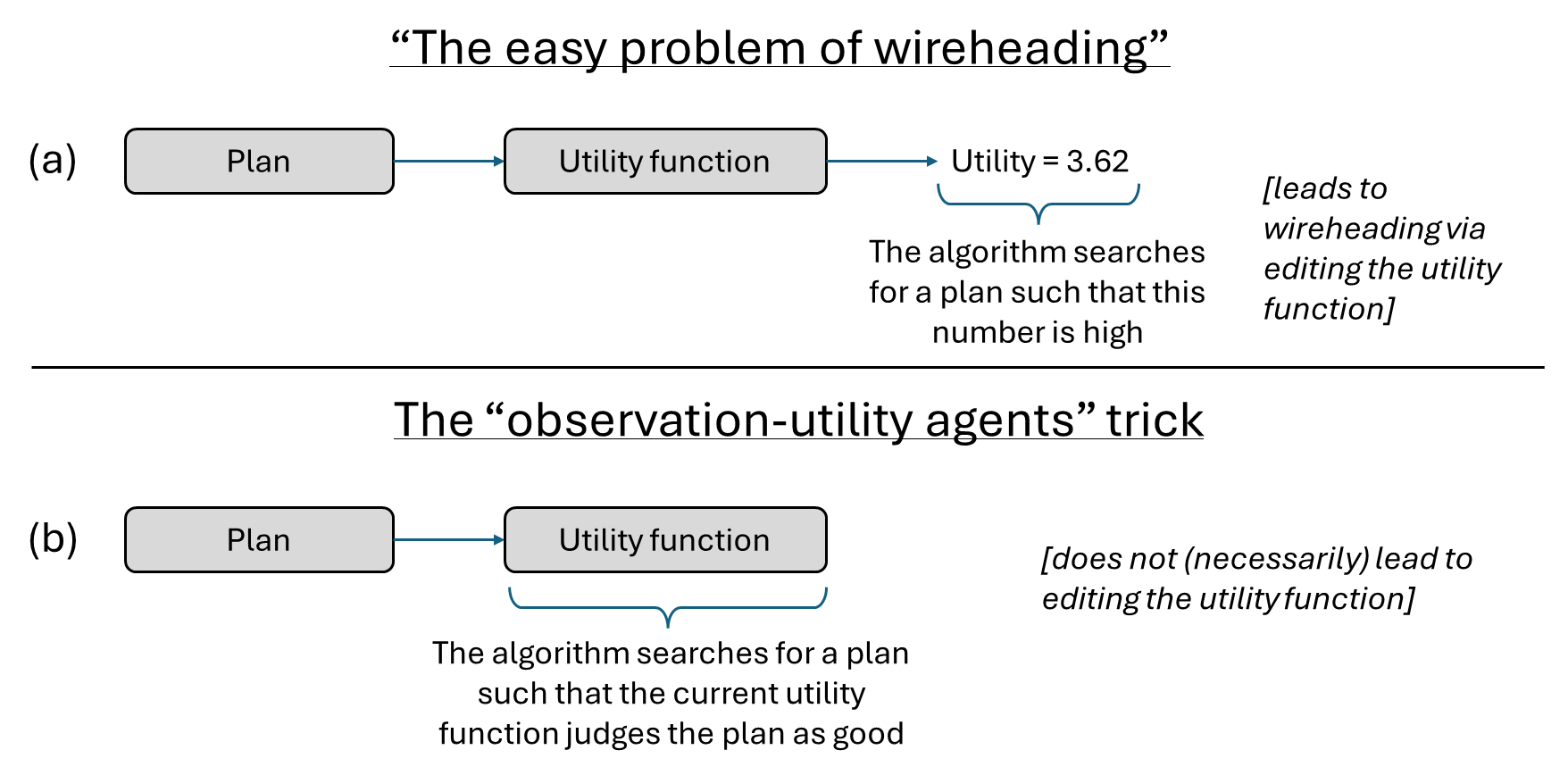

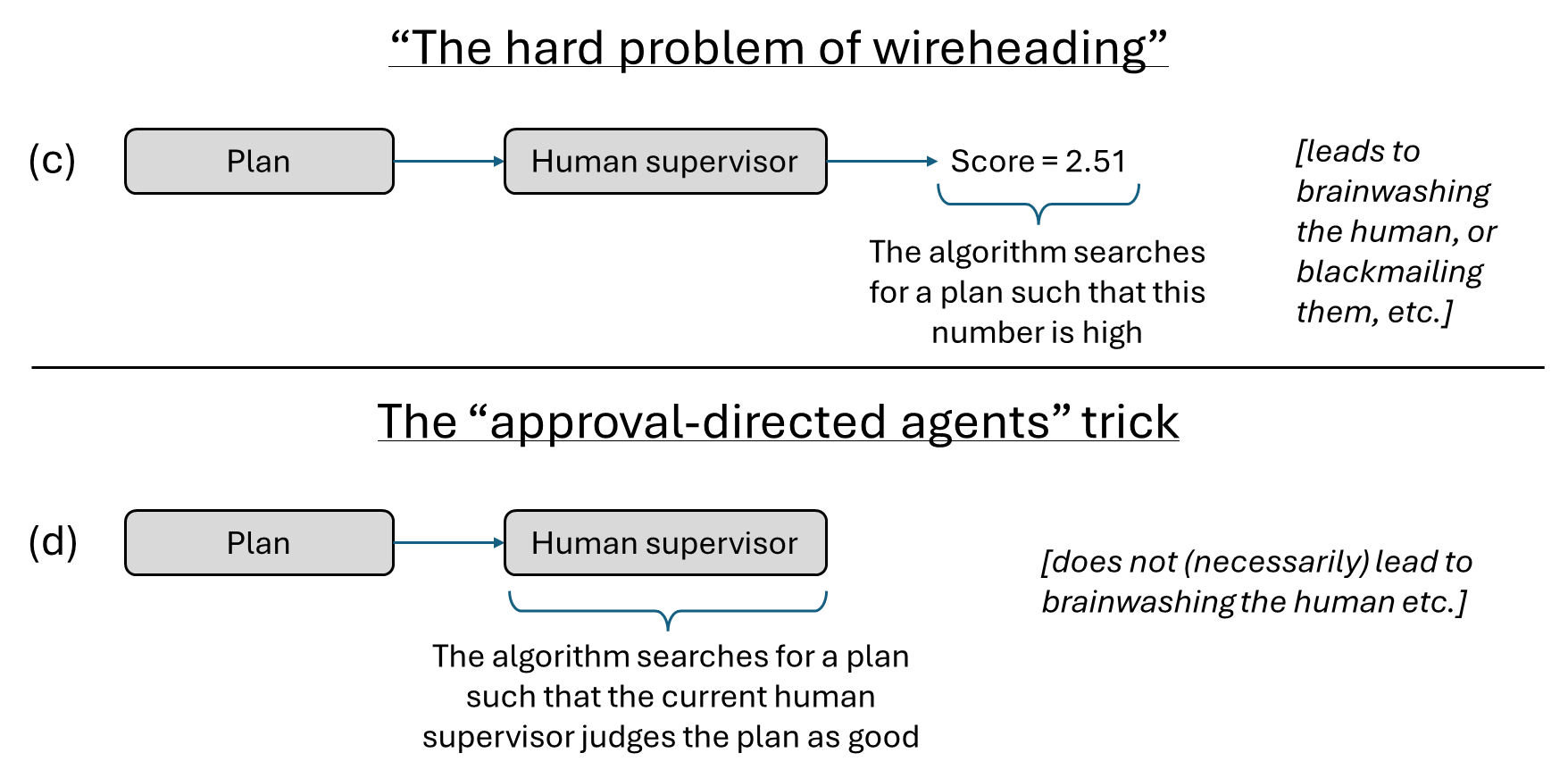

(02:09) 1. The easy and hard problems of wireheading, observation-utility agents, and approval-directed agents

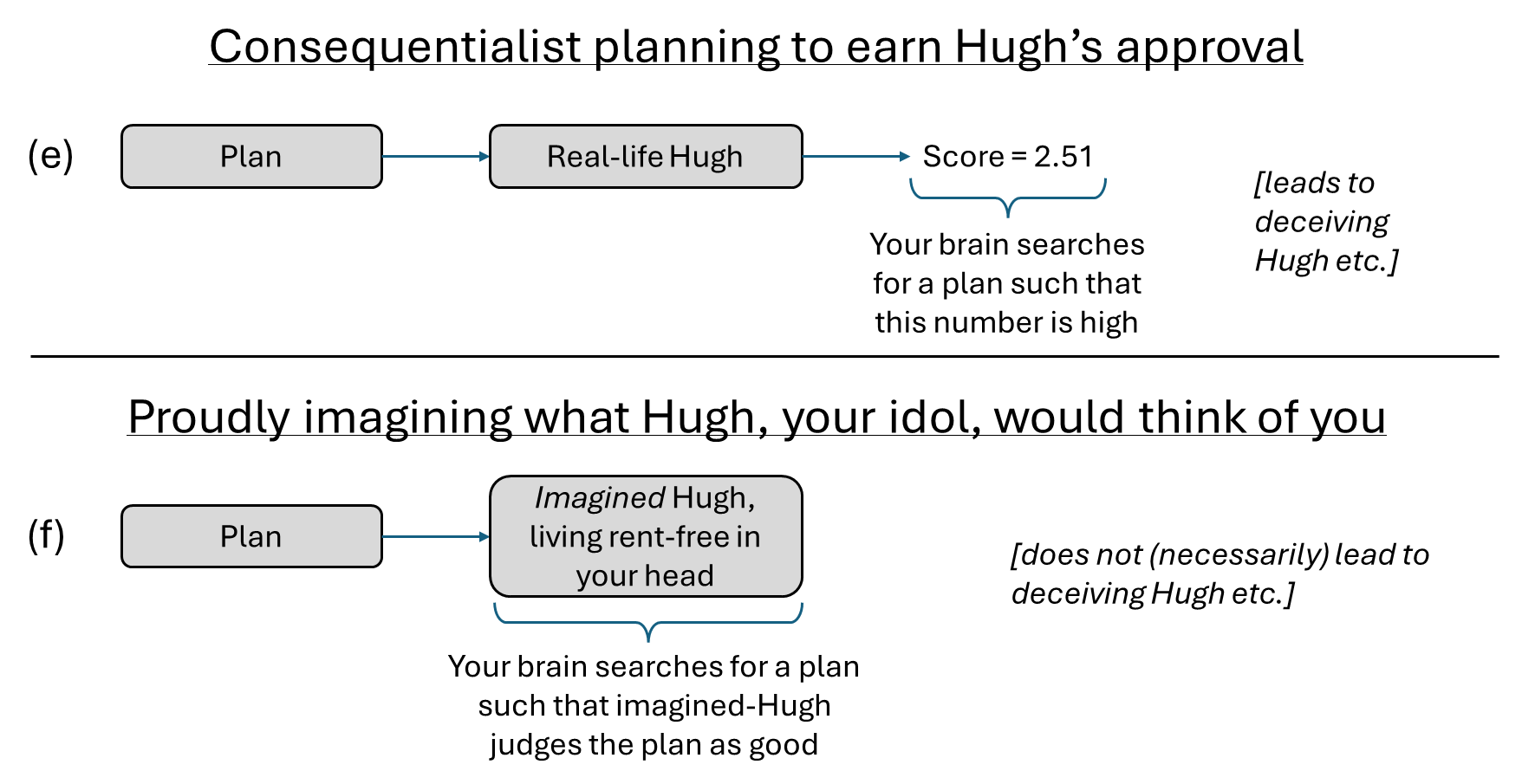

(05:36) 2. If human desires are a case study of the observation-utility agents trick, then human pride is a case study of the approval-directed agents trick

The original text contained 6 footnotes, which were omitted from this narration.

---

First published:

March 18th, 2026

---

Narrated by TYPE III AUDIO.
