EA Forum Podcast (Curated & popular)

“Broad Timelines” by Toby_Ord

Mar 21, 2026

Toby Ord, philosopher and author focused on global catastrophic risks and longtermism, explains why we should treat AI timelines with broad uncertainty. He contrasts short and long timeline views, then argues for using broad probability distributions, hedging toward early-transformative scenarios, and balancing short-term urgencies with long-term institution-building.

AI Snips

Treat AI Timelines As Broad Distributions

- Expert disagreement about AI timelines implies we should hold broad probability distributions, not single-year forecasts.

- Toby Ord likens using the label "AGI" to hiking in cloud: the term is useful but vague near its boundaries, so some uncertainty is inherent.

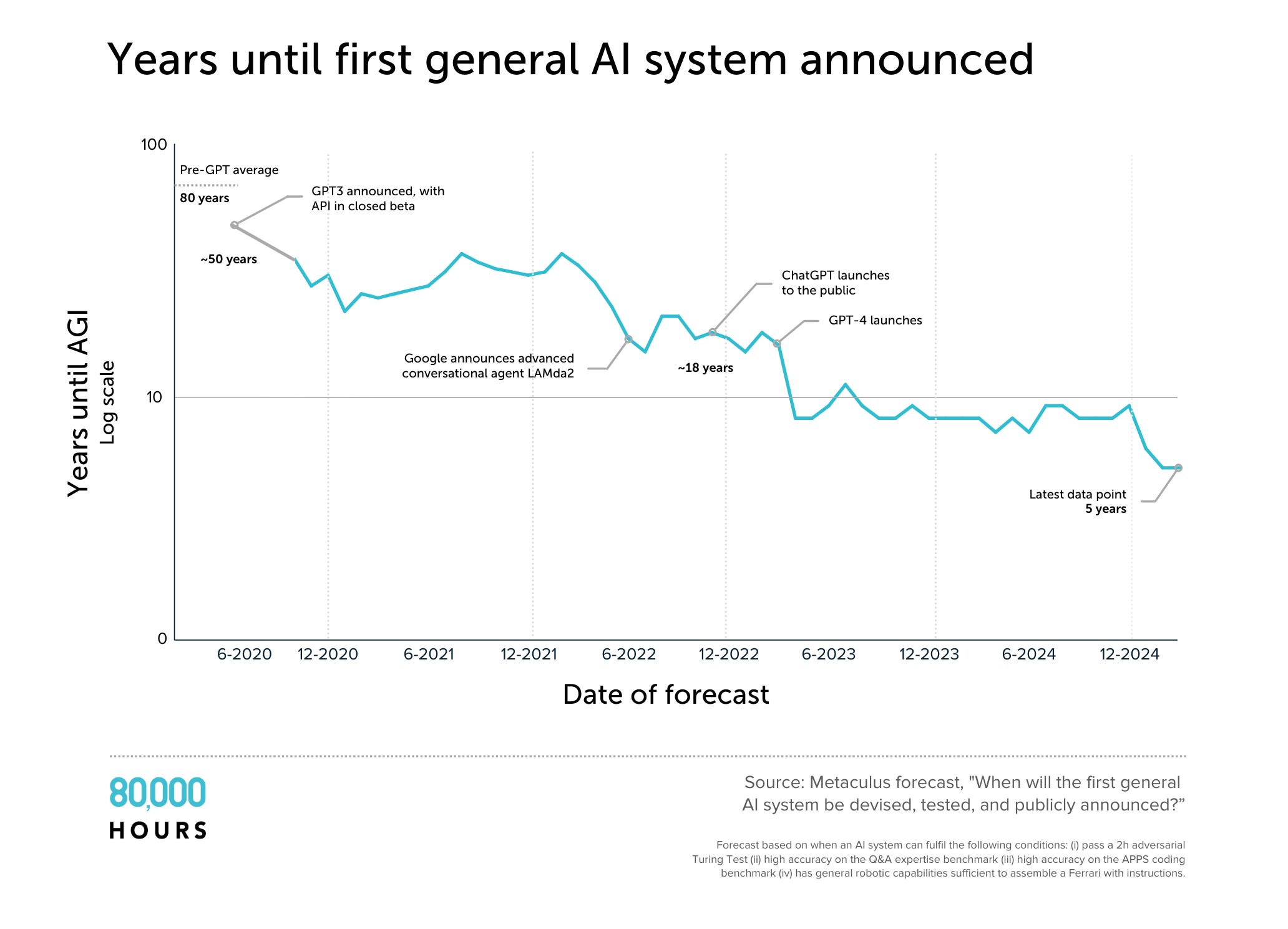

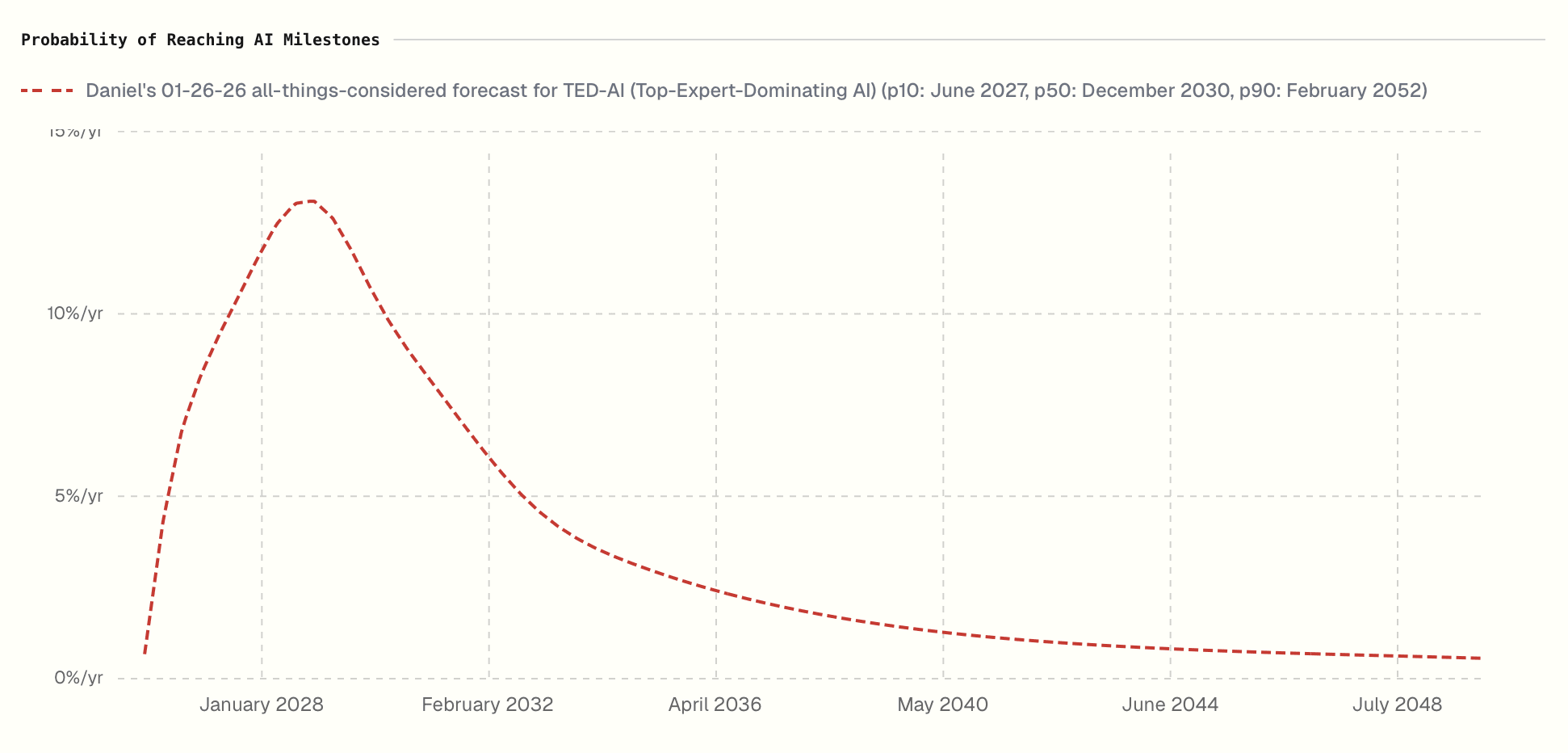

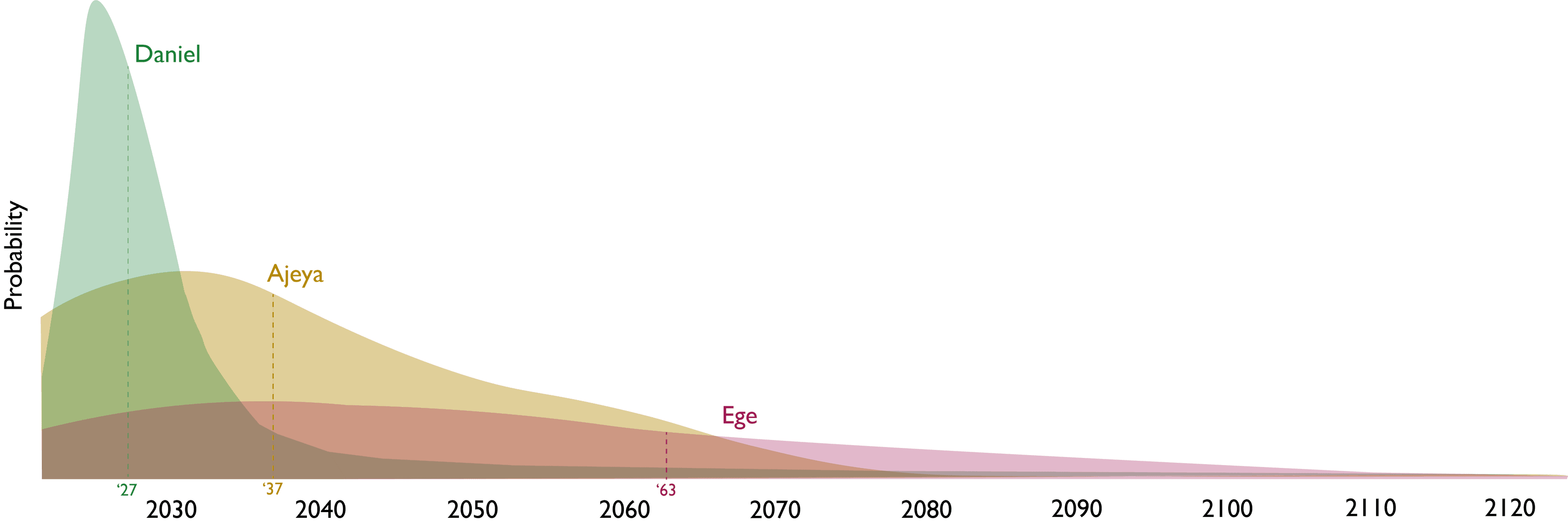

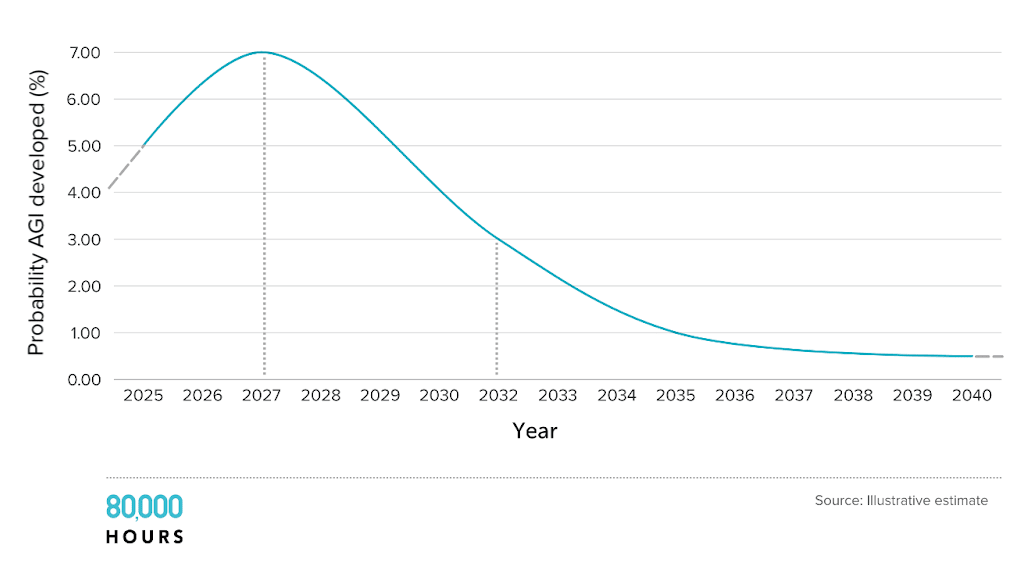

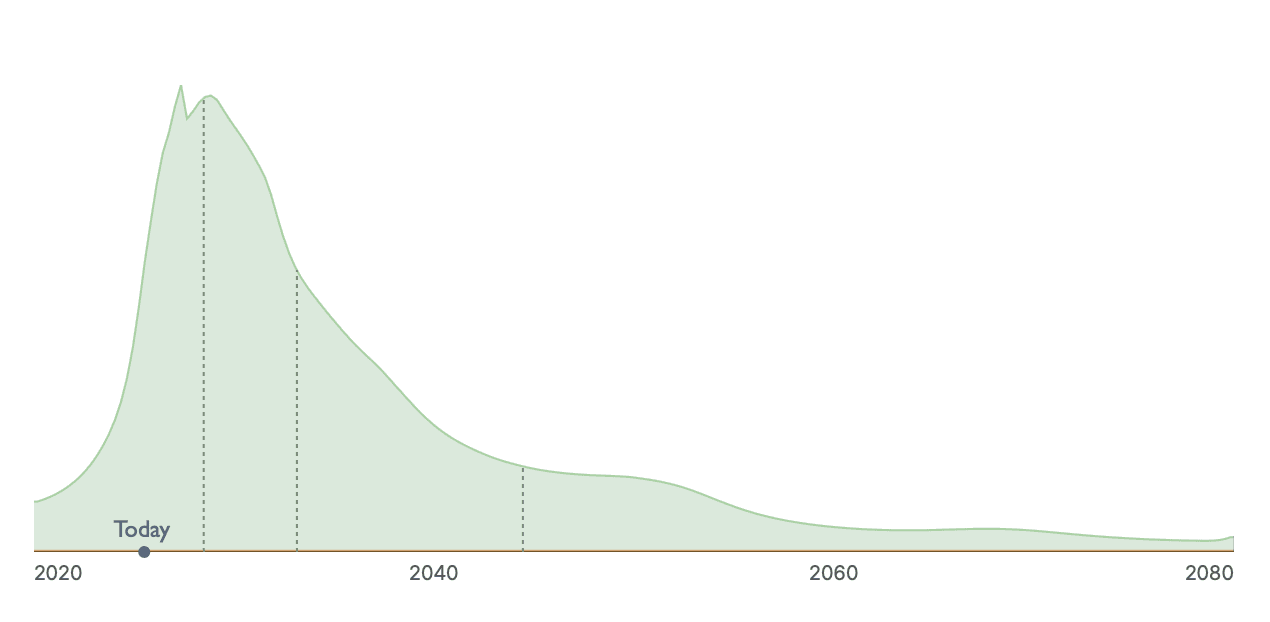

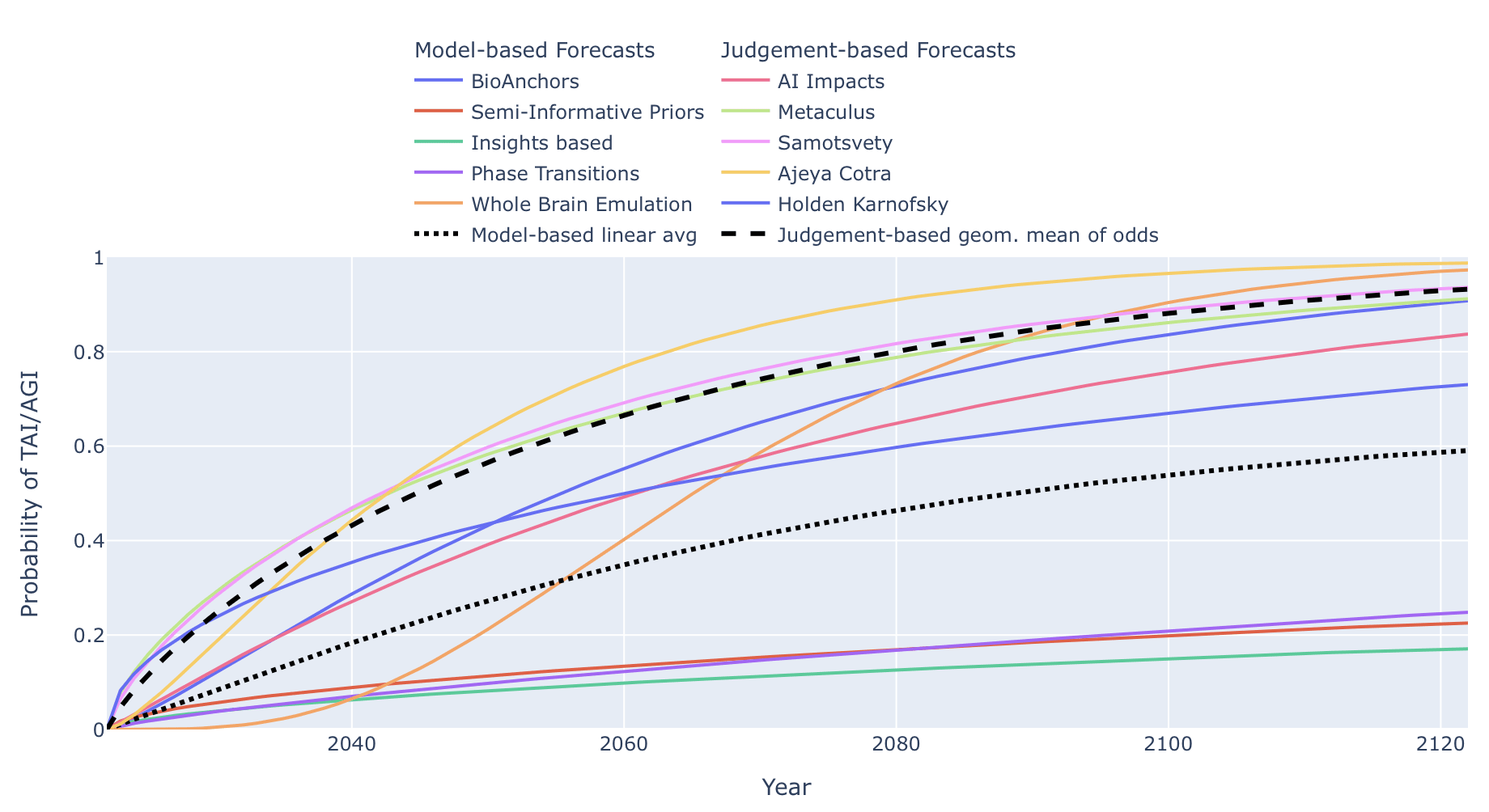

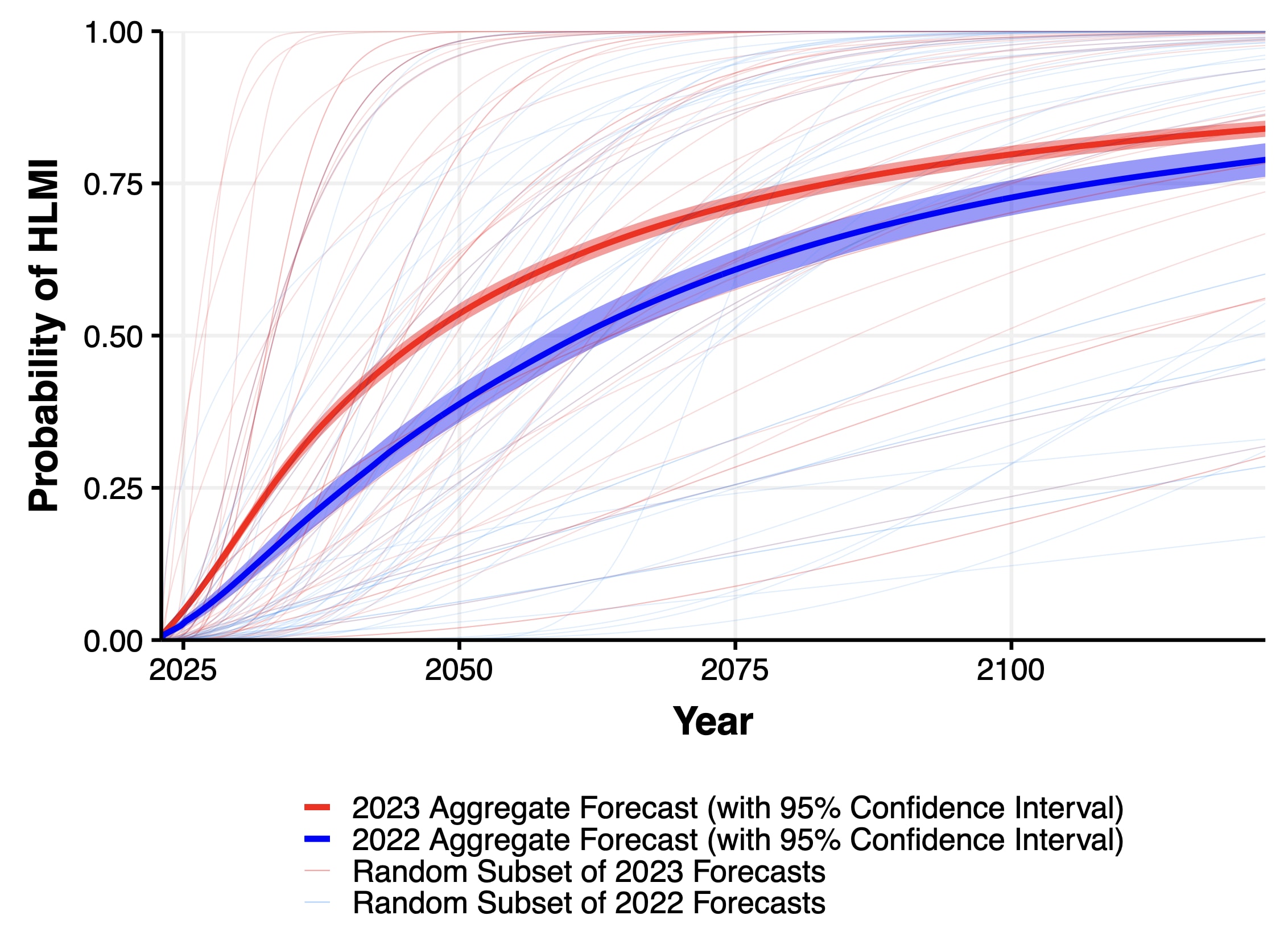

Community Forecasts Show Very Wide Timeline Ranges

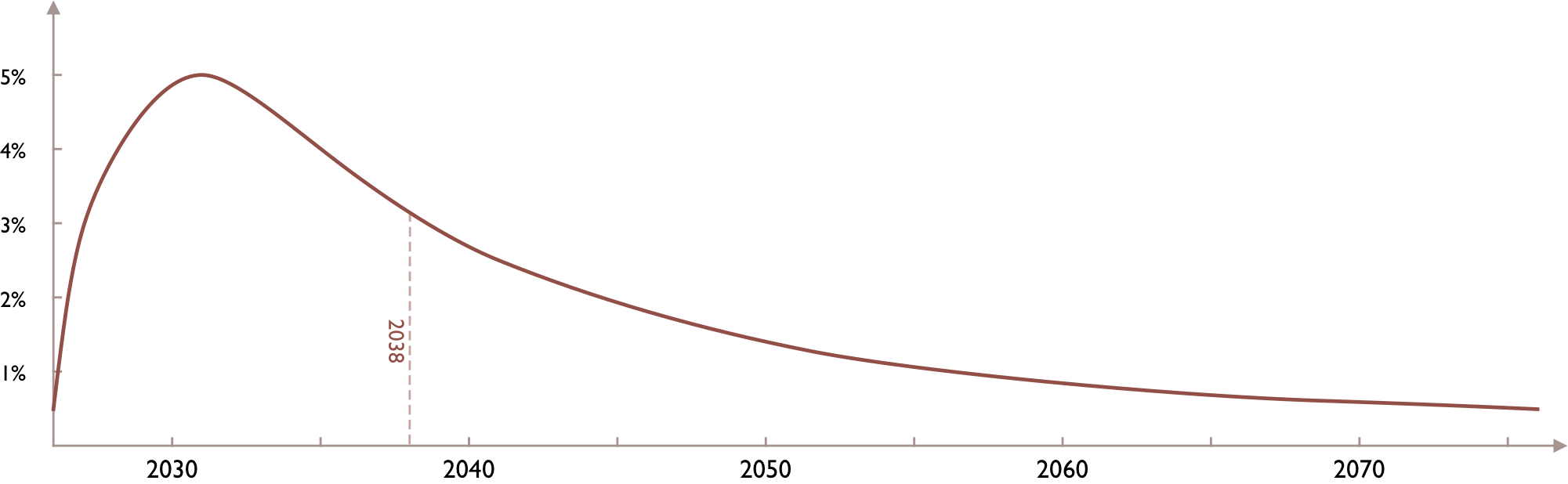

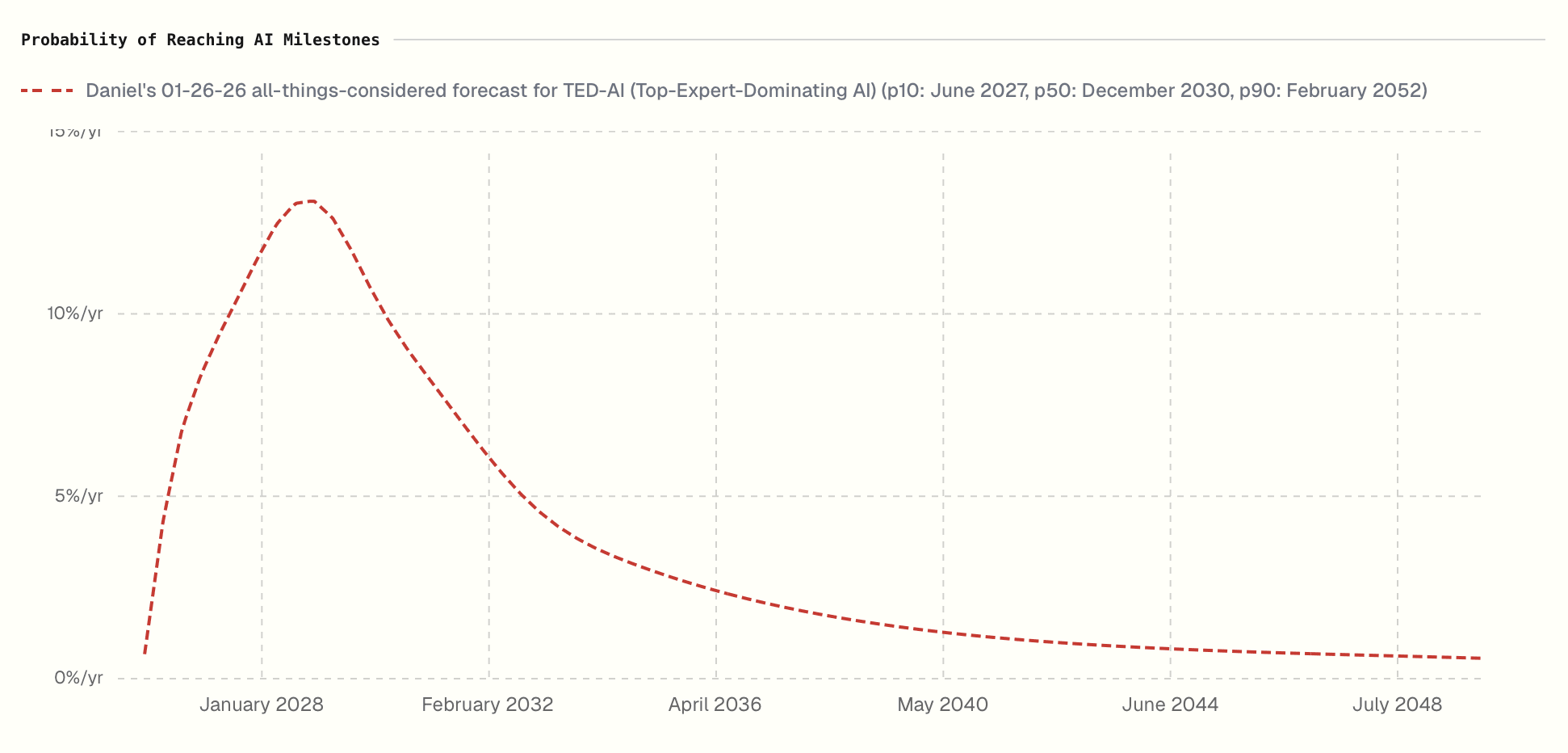

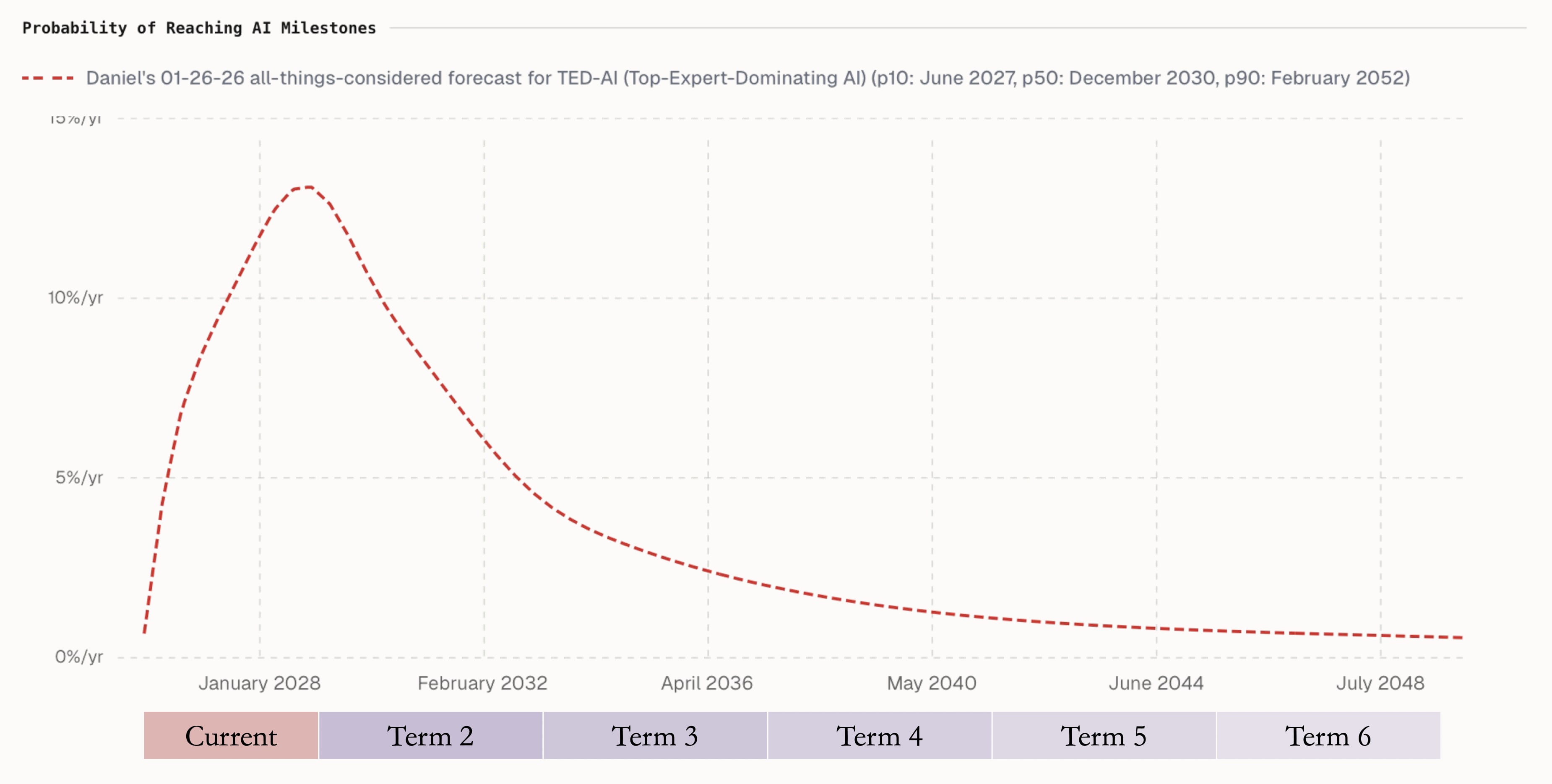

- Many leading forecasts (Kokotajlo, Metaculus, Grace et al.) present wide, skewed distributions whose 80% intervals span decades.

- Ord shows multiple published distributions where 10%–90% ranges exceed 50 years, illustrating community-wide uncertainty.

Single-Year Predictions Misrepresent True Uncertainty

- Single-year or single-number timeline claims compress crucial uncertainty and mislead planners and the public.

- Ord shows Kokotajlo's distribution, where the mode is 2028 but carries only a 27% chance, so naming 2028 alone obscures the real spread of risk.
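
To make the point about single-year claims concrete, here is a minimal Python sketch. It does not use any forecaster's actual numbers: the log-normal shape, the `scale_years` and `sigma` parameters, and the 2026 to 2100 year range are all assumptions chosen only for illustration. It builds a right-skewed distribution over arrival years and compares the probability of the single most likely year with the width of the 10% to 90% range.

```python
import math

def skewed_year_distribution(first_year=2026, last_year=2100,
                             scale_years=12.0, sigma=1.0):
    """Hypothetical right-skewed distribution over arrival years.

    Uses a log-normal shape in "years from now", truncated to the given
    range and renormalized. All parameters are illustrative assumptions.
    """
    mu = math.log(scale_years)
    weights = {}
    for year in range(first_year, last_year + 1):
        t = year - first_year + 1  # years from now, starting at 1
        # Unnormalized log-normal density at t
        weights[year] = math.exp(-((math.log(t) - mu) ** 2) / (2 * sigma ** 2)) / t
    total = sum(weights.values())
    return {year: w / total for year, w in weights.items()}

def quantile_year(dist, q):
    """First year at which cumulative probability reaches q."""
    cumulative = 0.0
    for year in sorted(dist):
        cumulative += dist[year]
        if cumulative >= q:
            return year
    return max(dist)

dist = skewed_year_distribution()
mode_year = max(dist, key=dist.get)   # single most likely year
mode_prob = dist[mode_year]           # probability of exactly that year
p10 = quantile_year(dist, 0.10)
p90 = quantile_year(dist, 0.90)

print(f"Most likely single year: {mode_year} (probability {mode_prob:.1%})")
print(f"10%-90% range: {p10} to {p90} ({p90 - p10} years wide)")
```

Under these assumed parameters, the most likely year holds only a small share of the total probability while the 10% to 90% range spans decades, which is the pattern the snips above describe: quoting the mode alone hides most of the distribution.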