LessWrong (Curated & Popular)

“What’s up with Anthropic predicting AGI by early 2027?” by ryan_greenblatt

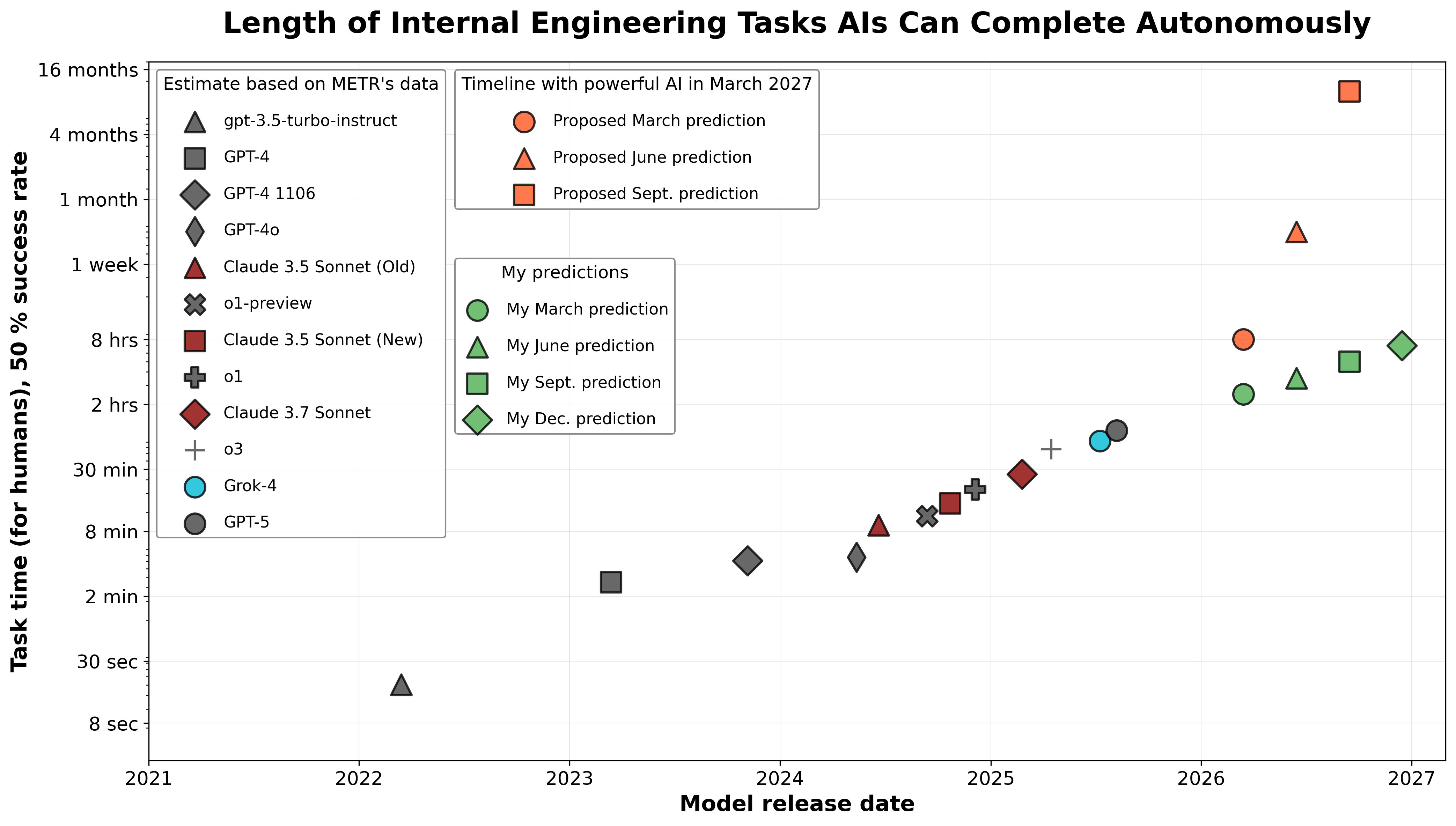

Nov 4, 2025

This episode examines Anthropic's prediction that AGI will arrive by early 2027. Ryan Greenblatt breaks down what "powerful AI" entails and highlights the automation benchmarks needed to verify it. He critiques earlier predictions and takes a skeptical view, estimating only a 6% chance that the prediction comes true by the deadline. The analysis includes a detailed timeline of required milestones and reasons why progress may be slower than anticipated. Overall, it explores expectations, evidence, and the future of AI development.

AI Snips

Automation As The Core Test

- Greenblatt operationalizes powerful AI as the ability to fully automate AI R&D and much of remote scientific R&D.

- He treats failure to show these abilities by July 2027 as falsifying Anthropic's prediction.

Require Clear, Falsifiable Predictions

- Treat Anthropic's wording around expectation as implying roughly a 50% probability unless clarified.

- Demand clear intermediate predictions and admit falsification promptly if outcomes diverge.

Code-Authorship Predictions Fell Short

- Dario Amodei predicted that AI would be writing 90% of code by sometime between June and September 2025, and essentially all code by March 2026.

- Greenblatt notes that the metric is misleading and that the claim has not clearly come true.