LessWrong (Curated & Popular)

"Are there lessons from high-reliability engineering for AGI safety?" by Steven Byrnes

Feb 26, 2026

Steven Byrnes, a physicist turned AGI safety researcher, asks whether the practices of high-reliability engineering can be applied to AGI. He contrasts rigorous specs, testing, redundancy, and inspections with the challenge of open-ended agents. He explores where engineering rigor could help, what barriers stand in the way at AI organizations, and how he responds to common objections.

AI Snips

R&D Experience With High-Reliability Projects

- Steven Byrnes worked at an engineering R&D firm from 2015 to 2021, where colleagues built high-reliability systems such as guidance systems for submarine-launched ICBMs and sensors for a solar-corona probe.

- He picked up the day-to-day practices and culture of that work, which inform his view on whether those practices carry over to AGI safety.

Core Practices Of High-Reliability Engineering

- High-reliability engineering requires exact specs, a detailed understanding of the operating environment, predictive models, redundancy, and extensive tests that produce quantitative data.

- Byrnes lists verification and validation, component tolerances, finite-element simulations, vibration, thermal, and radiation tests, inspections, and organizational support as core practices.

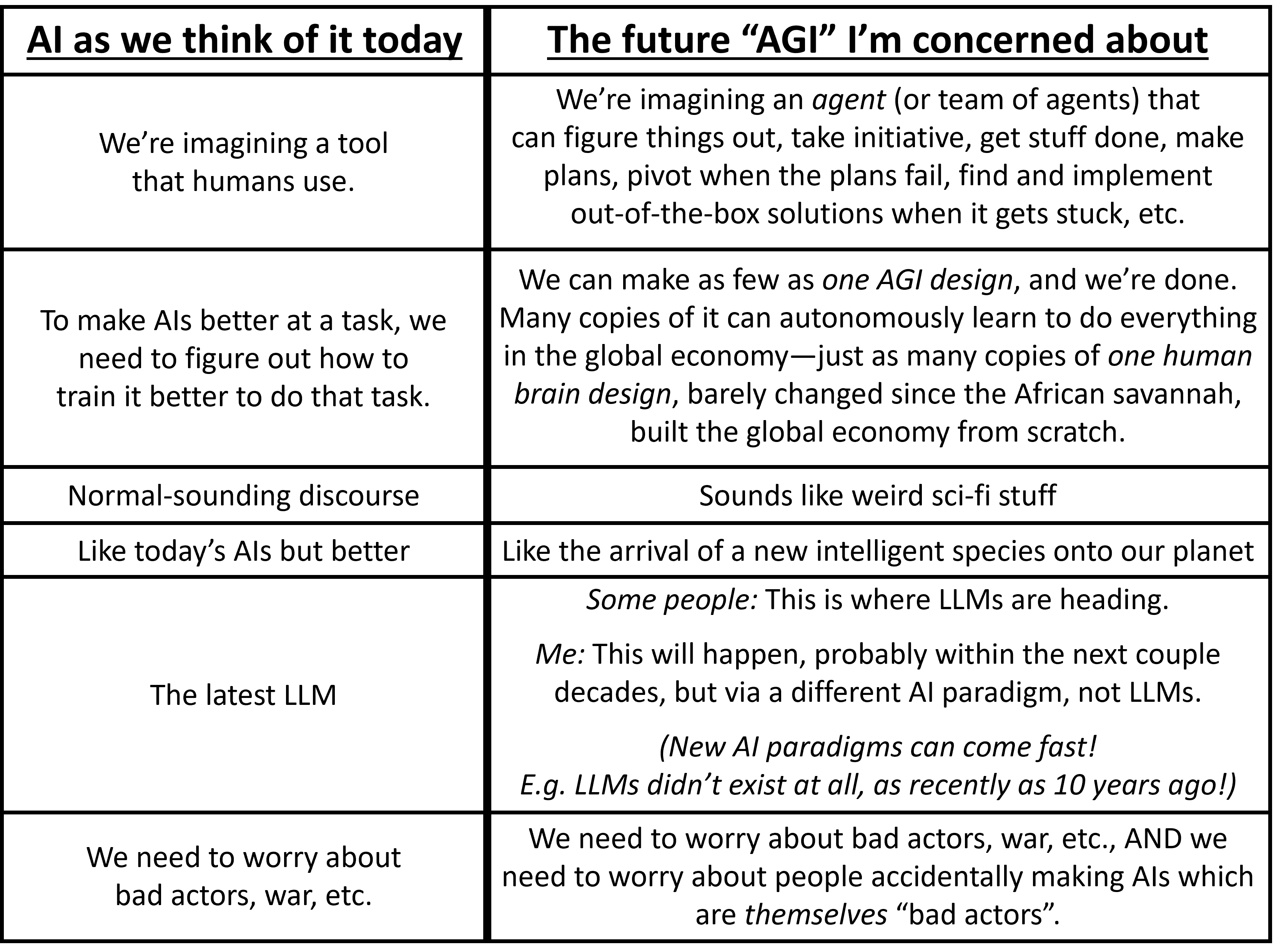

Why Traditional Specs Fail For Open-Ended AGI

- For open-ended AGI agents (e.g., an AGI operating as an autonomous entrepreneur in the mold of Jeff Bezos), we cannot plausibly specify exact behaviors for every situation, because both their tasks and their methods will be novel.

- Byrnes argues that this unpredictability makes the traditional spec/test approach infeasible for autonomous, entrepreneurial AGIs.