LessWrong (Curated & Popular)

"Anthropic’s “Hot Mess” paper overstates its case (and the blog post is worse)" by RobertM

Feb 4, 2026

A sharp critique of Anthropic’s “Hot Mess” research and its blog framing. The discussion highlights a selective reading of results and a misleading definition of “incoherence.” It questions key experiments, statistical measures, and claims about future alignment risks. It also flags possible LLM authorship of the blog post and conclusions that stretch beyond what the methodology supports.

AI Snips

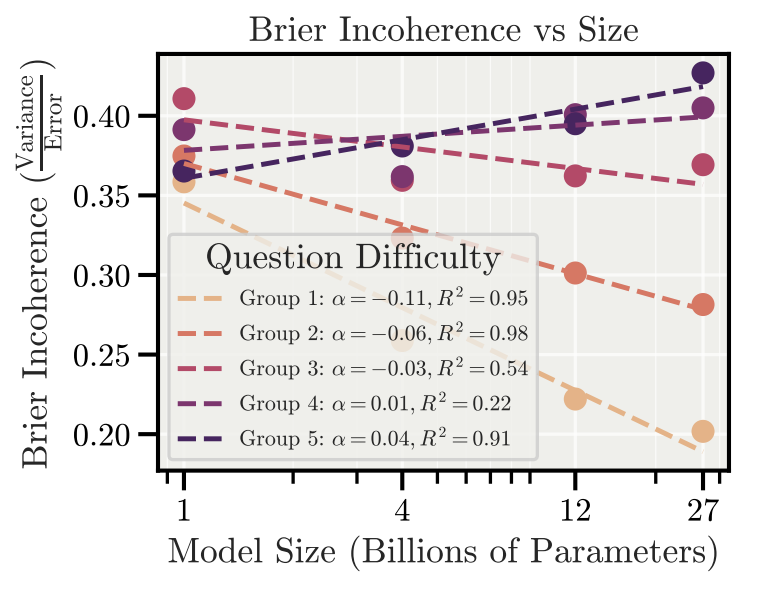

Selective Emphasis Masks Overall Trend

- RobertM argues that Anthropic's abstract selectively emphasizes cases where larger models appear more incoherent.

- He notes that most experiments actually show coherence increasing with model size, which contradicts the paper's headline claim.

Optimizer Experiment Shows Ability, Not Intent

- The synthetic optimizer experiment shows that larger models learn to follow the objective sooner but still fail to sustain long coherent sequences.

- RobertM says this reflects capability limits (bias), not a propensity for misalignment, so it doesn't support extrapolations about misalignment.

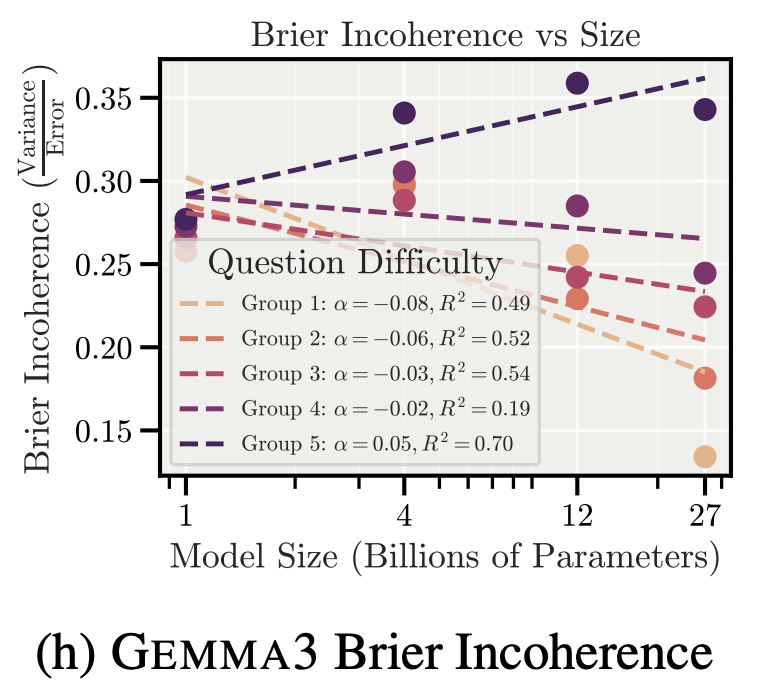

Incoherence Metric Can Mislead Risk

- Anthropic's incoherence metric is the fraction of error due to variance, which can give a misleading picture of risk.

- RobertM points out that a dumb but consistent system scores as highly coherent under that metric, which undermines the metric's relevance to misalignment.
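
The point about the metric can be made concrete with a toy calculation. The sketch below assumes the standard squared-error decomposition (error = bias² + variance) and takes incoherence to be variance divided by that total; the paper's exact definition may differ, and the function names and the sample numbers are hypothetical, chosen only for illustration.

```python
from statistics import fmean, pvariance


def mean_squared_error(outputs, target):
    """Average squared distance between each output and the target."""
    return fmean((x - target) ** 2 for x in outputs)


def incoherence(outputs, target):
    """Fraction of squared error attributable to variance.

    Assumes error = bias^2 + variance; this is an illustrative
    reading of "fraction of error due to variance", not necessarily
    the paper's exact formula.
    """
    bias_sq = (fmean(outputs) - target) ** 2
    var = pvariance(outputs)
    total = bias_sq + var
    return var / total if total > 0 else 0.0


target = 10.0
dumb_consistent = [3.0, 3.0, 3.0, 3.0]   # always wrong in the same way
capable_noisy = [9.5, 10.5, 9.0, 11.0]   # centered on the target, with scatter

for name, outputs in [("dumb_consistent", dumb_consistent),
                      ("capable_noisy", capable_noisy)]:
    print(name,
          "error:", mean_squared_error(outputs, target),
          "incoherence:", incoherence(outputs, target))
```

Under these assumptions the consistently wrong system has an incoherence of 0.0 despite a squared error of 49.0, while the far more accurate but noisy system has an incoherence of 1.0 with a squared error of 0.625. The ratio says nothing about how large the error is, which is the gap the snip describes.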