LessWrong (30+ Karma)

“AIs can now often do massive easy-to-verify SWE tasks and I’ve updated towards shorter timelines” by ryan_greenblatt

Apr 6, 2026

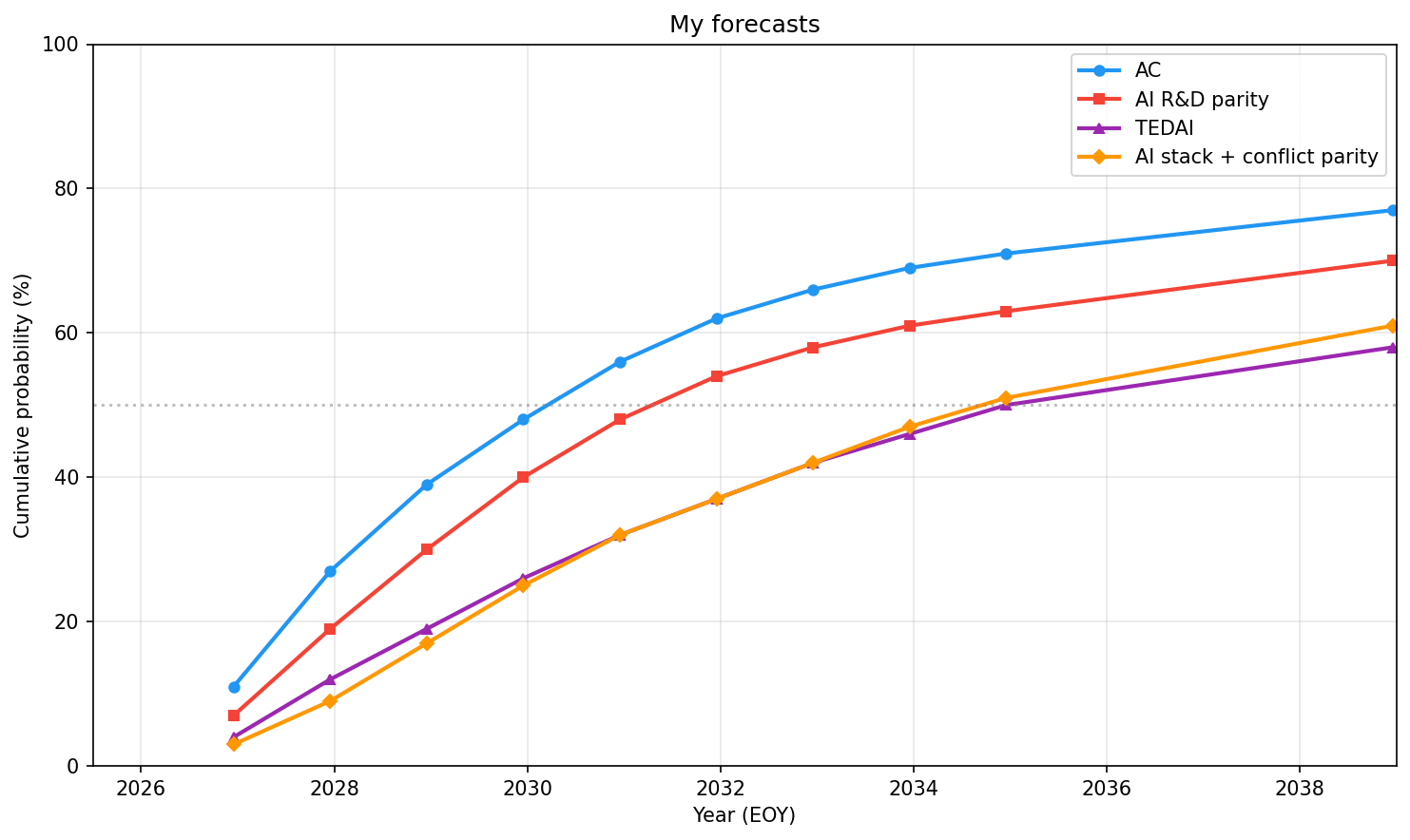

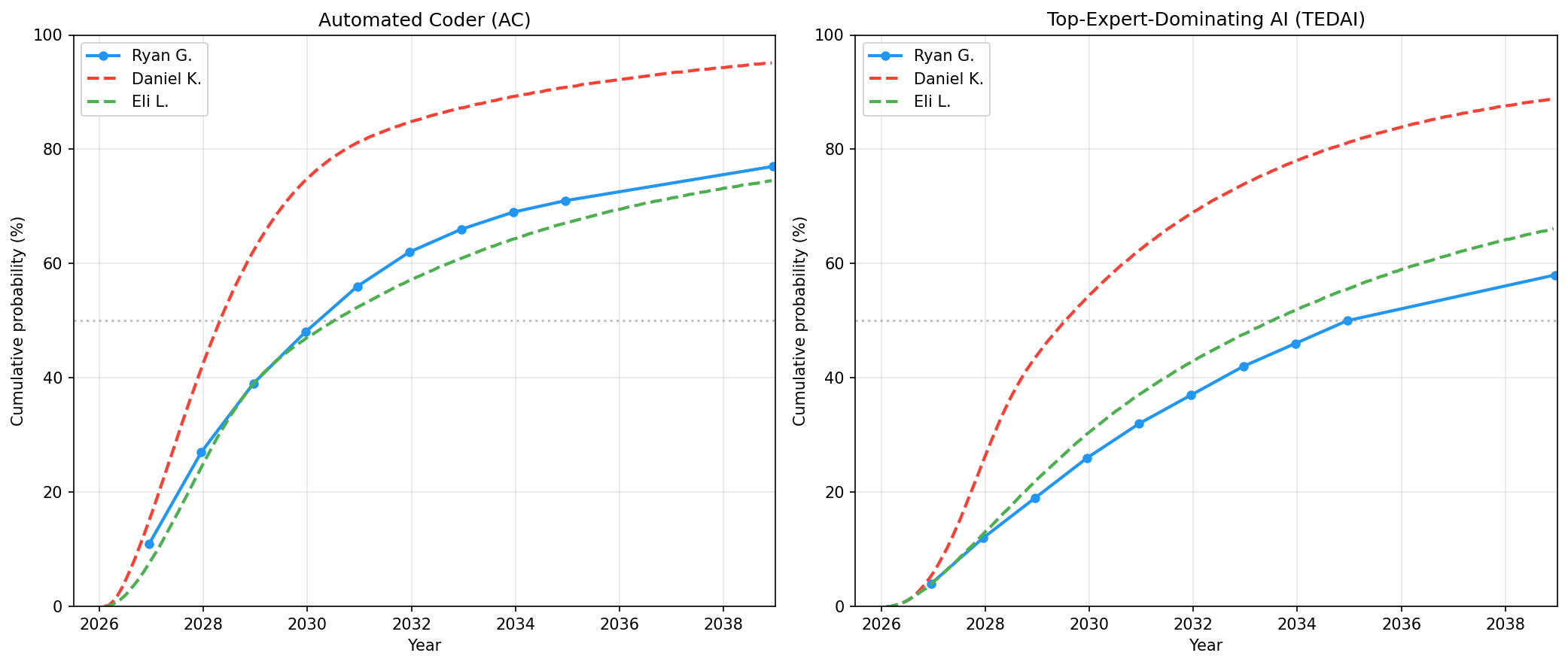

Ryan Greenblatt is an AI researcher who forecasts timelines for advanced AI systems. He explains why massive, easy-to-verify software tasks are now much easier for AIs and how that has shortened his timeline estimates. He walks through what makes those tasks verifiable, limits such as taste and scaffolding, hands-on attempts to automate safety research, and implications for large-scale SWE, R&D, and cyber work.

AI Snips

Task Distribution Mismatch Caused Forecast Error

- There's a large gap between ESNI tasks and existing ML task suites: Ryan found that 50%-reliability time horizons on ESNI tasks are ~20x longer than on METR's suite, not the ~4x he expected.

- This mismatch drove a clear prediction error in his prior forecasts.
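
To give a sense of the size of that miss, the gap can be restated in horizon doublings. The sketch below is illustrative arithmetic only: the 4x and 20x ratios come from the snip above, while the doubling time passed to `months_ahead` is a hypothetical input, not a figure from the episode.

```python
import math

def doublings(ratio: float) -> float:
    """Number of time-horizon doublings equivalent to a multiplicative gap."""
    return math.log2(ratio)

expected_ratio = 4.0   # gap Ryan expected (from the snip)
observed_ratio = 20.0  # gap he reports finding (from the snip)

# How far off the expectation was, as a plain factor and in doublings.
miss_factor = observed_ratio / expected_ratio                              # 5.0
extra_doublings = doublings(observed_ratio) - doublings(expected_ratio)   # ~2.32

def months_ahead(extra: float, doubling_time_months: float) -> float:
    """Calendar-time equivalent of `extra` doublings.

    `doubling_time_months` is an assumption supplied by the caller.
    """
    return extra * doubling_time_months

if __name__ == "__main__":
    print(f"miss factor: {miss_factor:.1f}x")
    print(f"extra doublings: {extra_doublings:.2f}")
    # Hypothetical 6-month doubling time, for illustration only.
    print(f"months ahead at a 6-month doubling time: {months_ahead(extra_doublings, 6.0):.1f}")
```

Under any fixed doubling time, a 5x miss corresponds to a bit more than two doublings, which is why a task-distribution mismatch of this size can shift a forecast noticeably.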

Superexponential Progress In ESNI Tasks

- ESNI tasks appear to be in a superexponential regime for 50%-reliability time horizons, meaning progress accelerates rapidly with more runtime iteration.

- Lower generality suffices because mistakes are easier to spot and recover from on testable tasks.
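
As a rough illustration of what "superexponential" means for a time horizon, the sketch below compares a constant doubling time (plain exponential growth) with a doubling time that shrinks by a fixed fraction after every doubling. The function name and every parameter value are hypothetical and chosen only to show the shape of the difference; none are estimates from the episode.

```python
def months_to_reach(n_doublings: int,
                    first_doubling_months: float,
                    shrink: float = 1.0) -> float:
    """Total months needed for `n_doublings` doublings of the time horizon.

    shrink == 1.0 -> every doubling takes the same time (exponential growth).
    shrink <  1.0 -> each doubling takes `shrink` times as long as the one
                     before it (one simple superexponential model).
    """
    total = 0.0
    step = first_doubling_months
    for _ in range(n_doublings):
        total += step
        step *= shrink
    return total

if __name__ == "__main__":
    # Hypothetical inputs: 10 doublings, first doubling takes 6 months.
    print(months_to_reach(10, 6.0))               # exponential baseline
    print(months_to_reach(10, 6.0, shrink=0.9))   # superexponential variant
```

In this toy model the shrinking-doubling-time variant reaches the same horizon in noticeably less calendar time, and the gap widens as the number of doublings grows.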

Checkability Determines AI Effectiveness

- Task checkability and iterability create a large gap: AIs do much better on ES tasks they can check themselves than on tasks that only companies can evaluate.

- Many SWE tasks are schlep-heavy and suit iterative AI labor, while algorithm-heavy systems are harder to iterate on.
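
One way to see why self-checking matters so much is an idealized retry model. The sketch below assumes a perfect checker and independent attempts, both simplifications introduced here for illustration rather than claims from the episode; the per-attempt success rate is likewise hypothetical.

```python
def success_with_checker(p_attempt: float, attempts: int) -> float:
    """Chance that at least one of `attempts` independent tries passes,
    assuming a perfect checker that recognizes a correct result."""
    return 1.0 - (1.0 - p_attempt) ** attempts

def success_without_checker(p_attempt: float) -> float:
    """Without a way to check, retrying does not help pick the right answer,
    so the chance of shipping a correct result stays at the per-attempt rate."""
    return p_attempt

if __name__ == "__main__":
    p = 0.2  # hypothetical per-attempt success rate
    for k in (1, 5, 10):
        print(k, round(success_with_checker(p, k), 3))
    print("no checker:", success_without_checker(p))
```

Under these assumptions, a modest per-attempt success rate compounds quickly once the AI can verify its own work and try again, which is the intuition behind the checkable versus uncheckable gap described above.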