LessWrong (30+ Karma)

“There should be $100M grants to automate AI safety” by Marius Hobbhahn

Apr 3, 2026

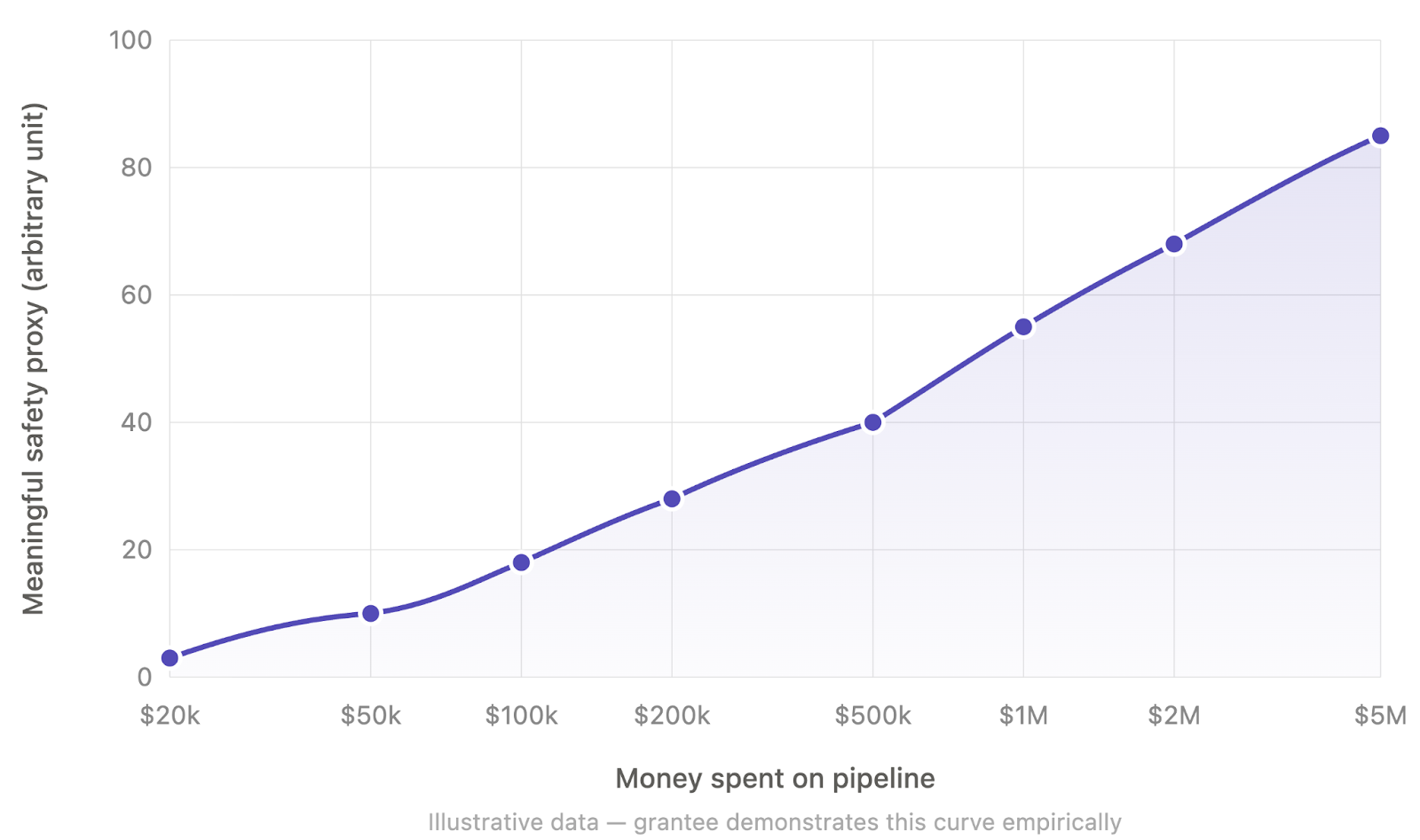

Marius Hobbhahn, author and Apollo Research affiliate, proposes massive grants to scale automated AI-safety work. He calls for urgent, large-scale funding through a grant model that ramps up to budgets of $100M or more for automated safety pipelines. He outlines concrete target areas such as monitoring, automated red-teaming, white-box auditing, propensity evaluations, and automated conceptual alignment research.

AI Snips


Make Large Grants Conditional On Public Benefit

- Require public benefit and publication when a grantee proves they can spend $100M meaningfully on safety.

- Options include open-sourcing the pipeline, collaborating with labs, joining an AGI lab, or turning into an AGI-safety for-profit under publication conditions.

Explicit Programs Attract Entrepreneurial Talent

- Announce explicit grant programs because conservatism among funders means entrepreneurs won't attempt ambitious scaling without clear commitments.

- Hobbhahn expects that entrepreneurial talent needs visible incentives to choose safety-first scaling projects.

Goodharting Can Be A Useful Failure Signal

- Goodharting is a real risk, but observing a metric fail is informative: it is evidence that the program should be stopped.

- Hobbhahn suggests robust metrics help, and failed metrics signal the need to halt particular scaling attempts.