LessWrong (30+ Karma) “Cycle-Consistent Activation Oracles” by slavachalnev

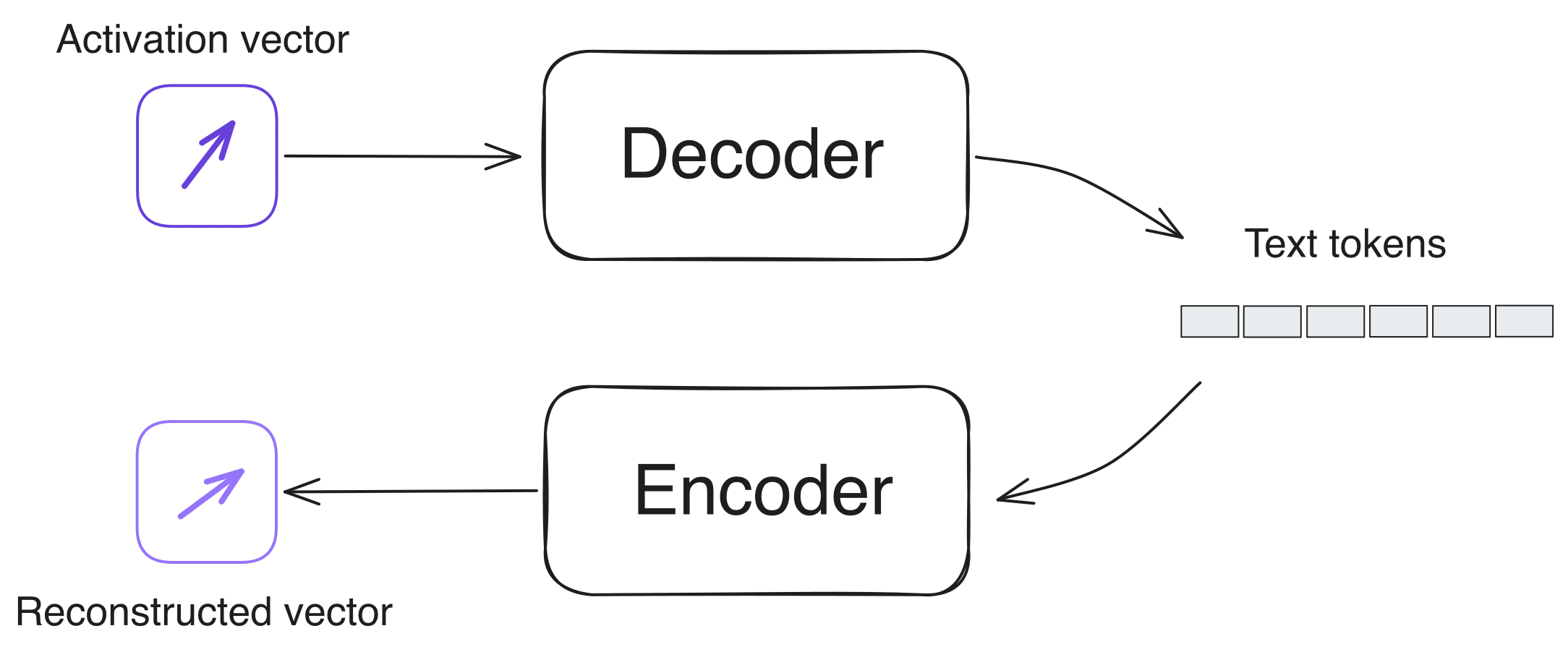

TL;DR: I train a model to translate LLM activations into natural language, using cycle consistency as a training signal (activation → description → reconstructed activation). The outputs are often plausible, but they are very lossy and are usually guesses about the context surrounding the activation, not good descriptions of the activation itself. This is an interim report with some early results.

Overview

I think Activation Oracles (Karvonen et al., 2025) are a super exciting research direction. Humans didn't evolve to read messy activation vectors, whereas ML models are great at this sort of thing.

An activation oracle is trained to answer specific questions about an LLM activation (e.g. "is the sentiment of this text positive or negative?" or "what are the previous 3 tokens?"). I wanted to try something different: train a model to translate activations into natural language.

The main problem to solve here is the lack of training data. There's no labeled dataset of activations paired with their descriptions. So how do we get around this?

One idea is to use cycle consistency: if you translate from language A to language B and back to A, you should end up approximately where [...]
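To make the cycle (activation → description → reconstructed activation) concrete, here is a minimal toy sketch. It is not the setup from the post: the real system uses trained models in both directions, whereas here `describe` and `reconstruct` are hypothetical stand-ins built on a tiny hand-written table of prototype vectors, and the cosine-based loss is an assumption chosen for illustration.

```python
import math

# Hypothetical toy "vocabulary": each description maps to a prototype activation.
# In a real setup both directions would be learned models, not a lookup table.
PROTOTYPES = {
    "positive sentiment": [1.0, 0.0, 0.0],
    "negative sentiment": [-1.0, 0.0, 0.0],
    "text about numbers": [0.0, 1.0, 0.0],
}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def describe(activation):
    """Stand-in for activation -> description: label of the closest prototype."""
    return max(PROTOTYPES, key=lambda text: cosine(activation, PROTOTYPES[text]))


def reconstruct(description):
    """Stand-in for description -> activation: look the prototype back up."""
    return list(PROTOTYPES[description])


def cycle_loss(activation):
    """Assumed loss: 1 - cosine similarity between the input and its round trip."""
    round_trip = reconstruct(describe(activation))
    return 1.0 - cosine(activation, round_trip)


if __name__ == "__main__":
    for act in ([0.9, 0.1, 0.0], [0.5, 0.7, 0.5]):
        print(act, "->", describe(act), "| cycle loss:", round(cycle_loss(act), 4))
```

An activation that sits close to one prototype survives the round trip almost unchanged, while one that mixes several directions loses most of that detail. That is the same kind of lossiness the TL;DR describes, in miniature.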

---

Outline:

(00:38) Overview

(03:05) Setup

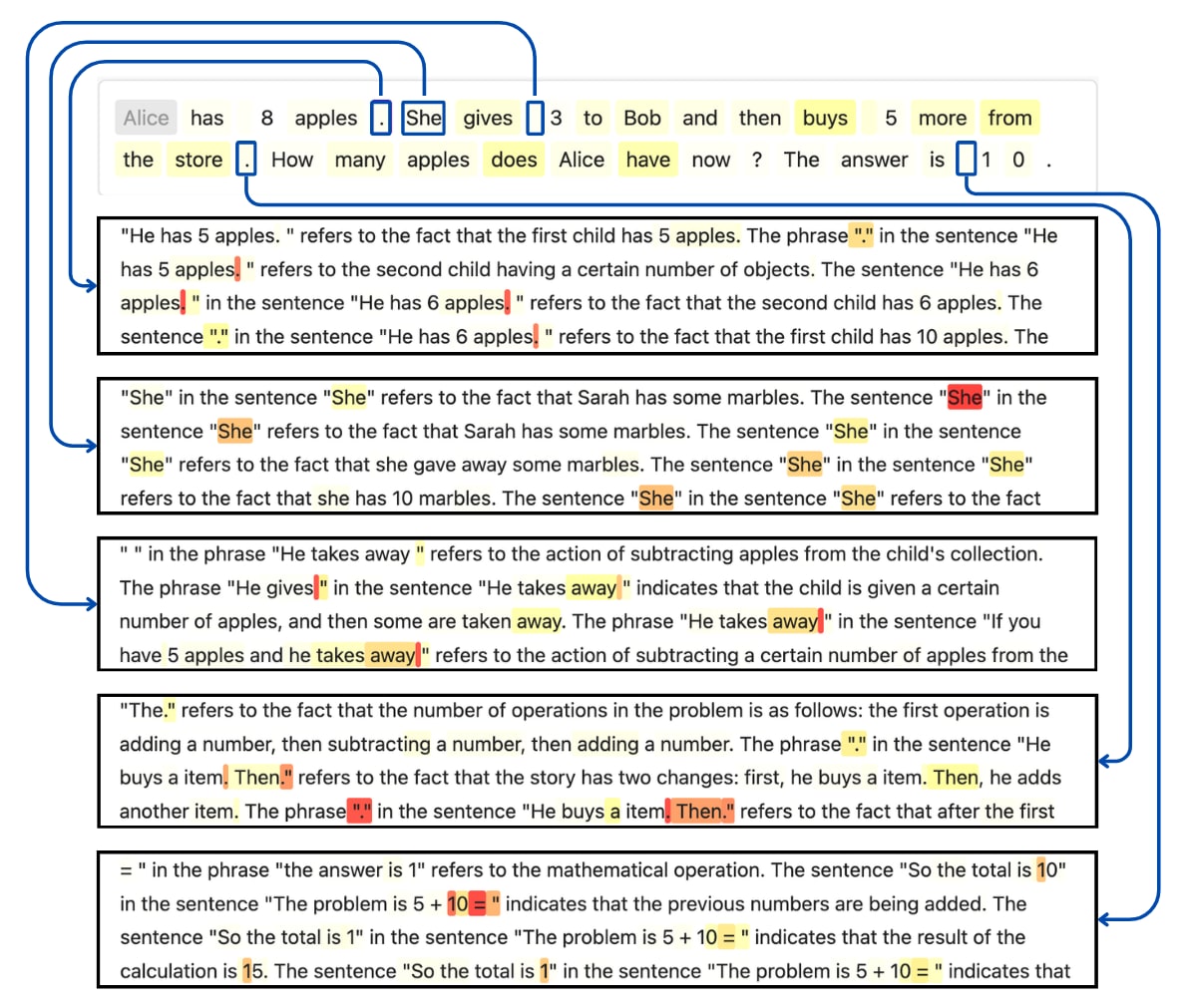

(04:39) Example outputs

(06:36) Problems with this approach

(08:34) Evals

(08:37) Retrieval

(09:08) Classification

(10:15) Arithmetic

(11:20) What I want to try next

(12:38) Appendix: Other training ideas

The original text contained 3 footnotes which were omitted from this narration.

---

First published: March 12th, 2026

Source: https://www.lesswrong.com/posts/Nf2sKaNNdxE2ssxbp/cycle-consistent-activation-oracles-1

---

Narrated by TYPE III AUDIO.
