This post highlights a few key excerpts from our full impact report. You can read the full report at https://controlai.com/impact-report-2025.

ControlAI is a non-profit organization working to avert the extinction risks posed by superintelligence. We help hundreds of thousands of people understand these risks and meet hundreds of lawmakers to inform them, without mincing words, about what is at stake.

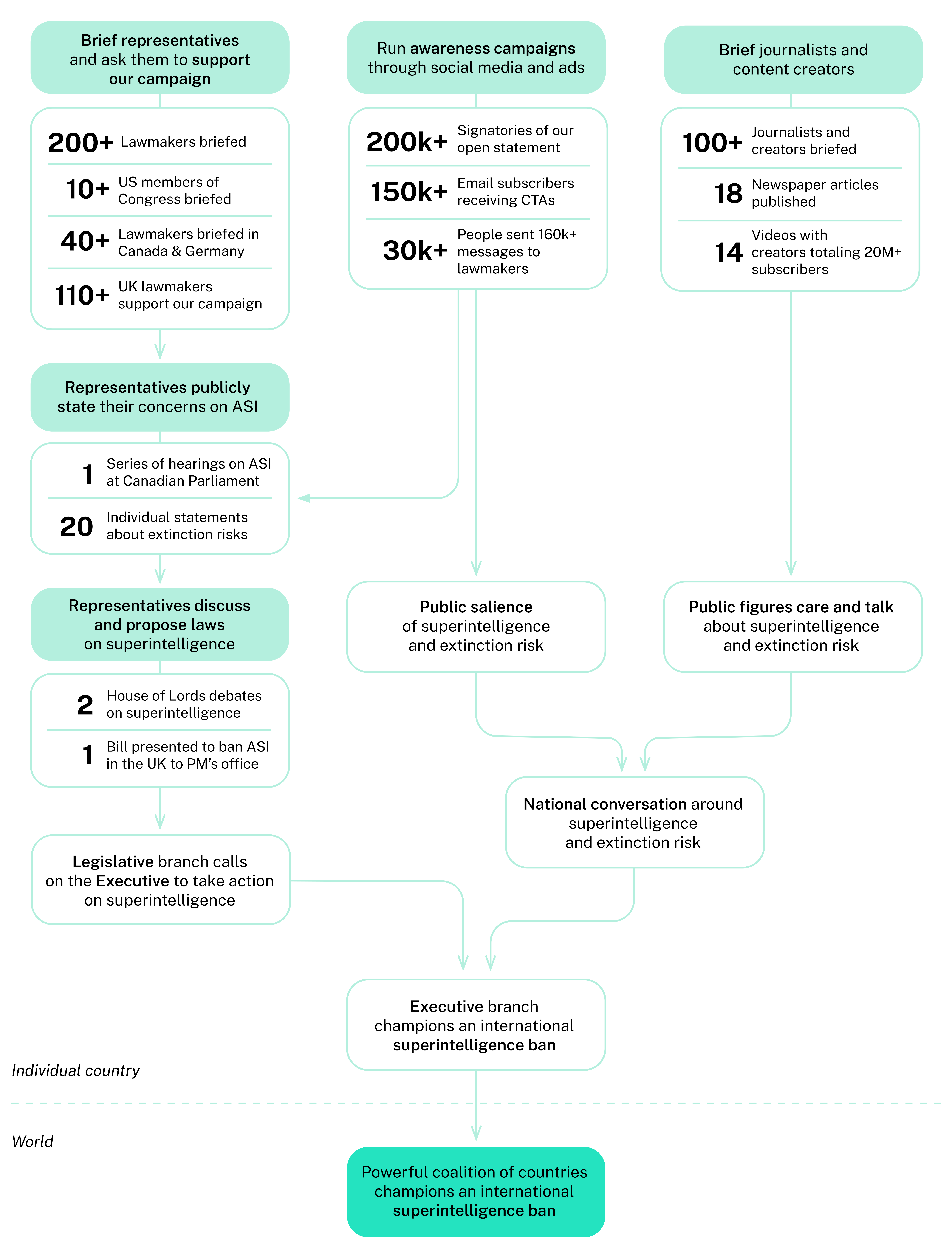

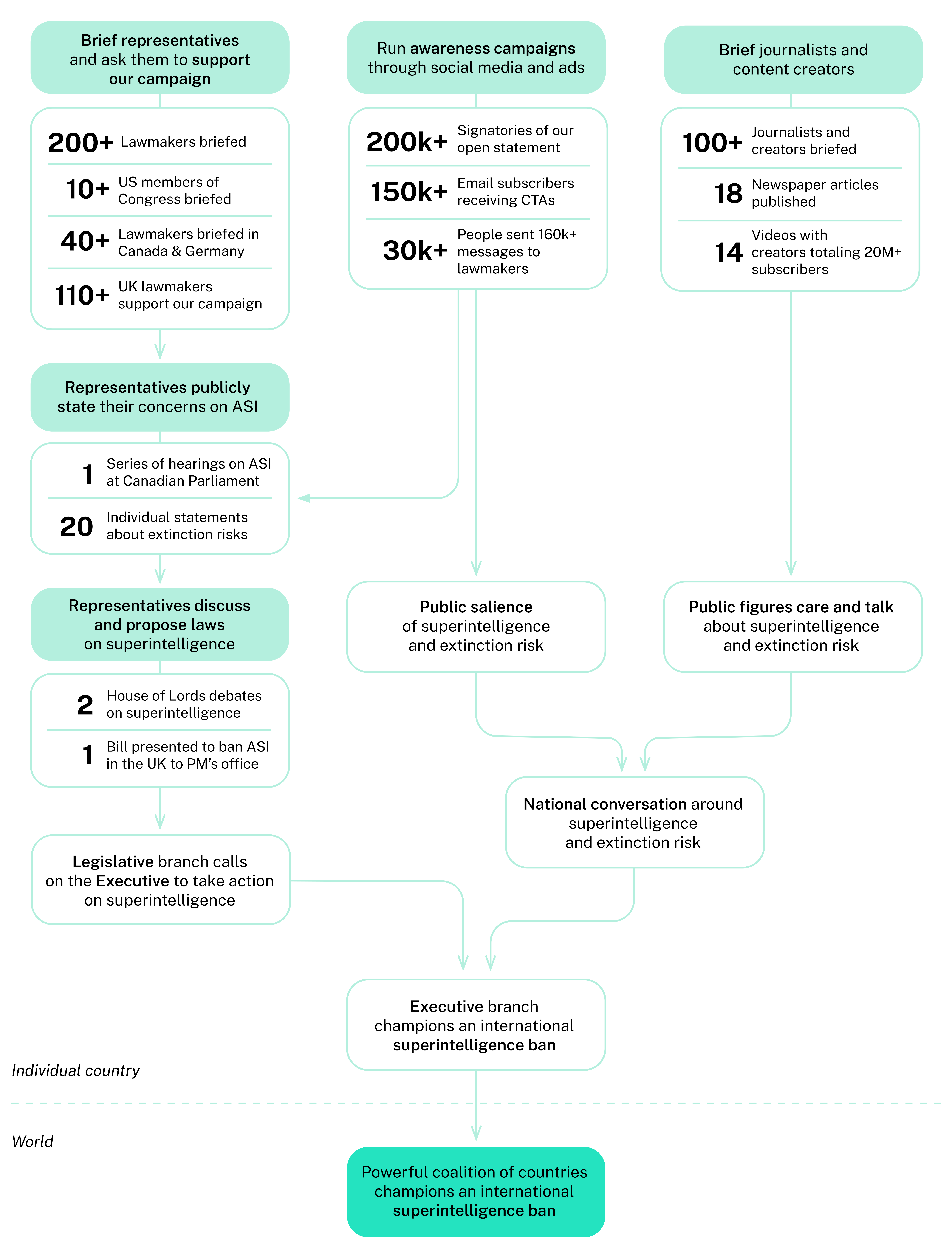

In little more than a year, we briefed over 200 parliamentarians and built a coalition of 110+ UK lawmakers recognizing superintelligence as a national security threat. Our work led to two debates in the UK House of Lords and to a series of hearings on AI risk and superintelligence at the Canadian Parliament.[1] These hearings included testimonies from me (Andrea) and Samuel at ControlAI, Connor Leahy, Malo Bourgon (MIRI), Max Tegmark and Anthony Aguirre (FLI), David Krueger, and more.

The report covers results between December 2024 and January 2026. As of posting this in March 2026, we've now briefed 279 lawmakers and 90+ US congressional offices. In the last 2 months alone, we've scaled in Canada and Germany from ~50 to 100+ lawmakers briefed, despite having only one staffer in each country.

Moving forward, we plan to significantly [...]

---

Outline:

(01:41) ControlAI's mission

(03:33) Our Results Last Year

(03:37) Lawmaker outreach

(03:55) Media & content creator outreach

(04:11) Public awareness campaign and lawmaker engagement tools

(04:32) Theory of Change

(04:36) The awareness gap

(05:54) Building the coalition

(06:59) Our Theory of Change in more detail

(07:21) Moving forward

The original text contained 1 footnote which was omitted from this narration.

---

First published:

March 27th, 2026

Source:

https://www.lesswrong.com/posts/BwfydMhjuroqiZs4x/controlai-2025-impact-report

---

Narrated by TYPE III AUDIO.
