“The case for satiating cheaply-satisfied AI preferences” by Alex Mallen

LessWrong (30+ Karma)

Cheap vs. hard preferences explained

Alex contrasts cheap goals with hard‑to‑satisfy ambitions and warns about goals instrumental to long‑term power.

A central AI safety concern is that AIs will develop unintended preferences and undermine human control to achieve them. But some unintended preferences are cheap to satisfy, and failing to satisfy them needlessly turns a cooperative situation into an adversarial one. In this post, I argue that developers should consider satisfying such cheap-to-satisfy preferences as long as the AI isn’t caught behaving dangerously, provided that doing so doesn’t degrade usefulness or substantially risk making the AI more ambitiously misaligned.

This looks like a good idea for surprisingly many reasons:

- It increases AIs’ desire to remain under developer control, rather than taking over or assisting adversaries.

- It decreases the AI's upside in disempowering developers.

- It incentivizes safe actions (because AIs don't receive payment if we notice them acting dangerously).

- To the extent that the AI is otherwise aligned, satiating away the AI's need to pursue unintended motivations increases the relative strength of its aligned motivations (akin to inoculation prompting reducing unintended propensities at inference time), which could make the AI more helpful in critical hard-to-check domains like alignment research.

- It sets a cooperative precedent with AIs and increases trust in future cooperation.

- It increases the AI's willingness to reveal [...]

---

Outline:

(04:43) Analogy: satiating hunger

(08:16) How satiation might avert reward-seeker takeover

(12:33) The basic proposal

(12:59) A behavioral methodology for identifying cheaply-satisfied preferences

(17:40) Barriers and risks

(17:44) Eliciting the AI's cheaply-satisfied preferences

(21:00) Incredulous, ambitious, or superintelligent AIs might take over anyways

(26:18) Satiation might degrade usefulness

(29:46) Can you eliminate the usefulness tradeoff by training?

(32:09) Why satiation might also improve usefulness

(34:34) When should we satiate?

(41:08) Conclusion

(43:49) Appendix: Samples from Claude 4.6 Opus

(43:55) Sample 1 (without CoT)

(47:32) Sample 2 (with CoT)

The original text contained 17 footnotes which were omitted from this narration.

---

First published:

March 10th, 2026

---

Narrated by TYPE III AUDIO.

---

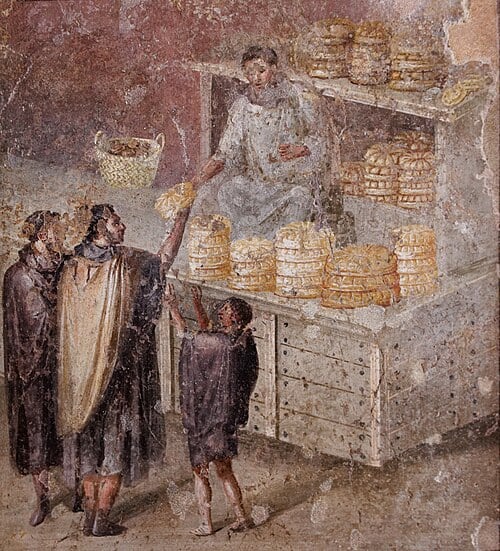

Images from the article:

Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.